Added Magic Prompt by Gustavosta

parent 557e4c969a

commit 5358234f55

31 README.md

@ -1,25 +1,44 @@

|

|||

# Prompt Generator

|

||||

|

||||

Adds a tab to the webui that allows the user to generate a prompt from a small base prompt. Based on [FredZhang7/distilgpt2-stable-diffusion-v2](https://huggingface.co/FredZhang7/distilgpt2-stable-diffusion-v2). I did nothing apart from porting it to [AUTOMATIC1111 WebUI](https://github.com/AUTOMATIC1111/stable-diffusion-webui)

|

||||

Adds a tab to the webui that lets the user generate a full prompt from a short base prompt. Based on [FredZhang7/distilgpt2-stable-diffusion-v2](https://huggingface.co/FredZhang7/distilgpt2-stable-diffusion-v2) and [Gustavosta/MagicPrompt-Stable-Diffusion](https://huggingface.co/Gustavosta/MagicPrompt-Stable-Diffusion). I did nothing apart from porting them to [AUTOMATIC1111 WebUI](https://github.com/AUTOMATIC1111/stable-diffusion-webui).

|

||||

|

||||

|

||||

|

||||

|

||||

## Installation:

|

||||

## Installation

|

||||

|

||||

1. Install [AUTOMATIC1111's Stable Diffusion Webui](https://github.com/AUTOMATIC1111/stable-diffusion-webui)

|

||||

2. Clone this repository into the `extensions` folder inside the webui

|

||||

|

||||

## Usage:

|

||||

## Usage

|

||||

|

||||

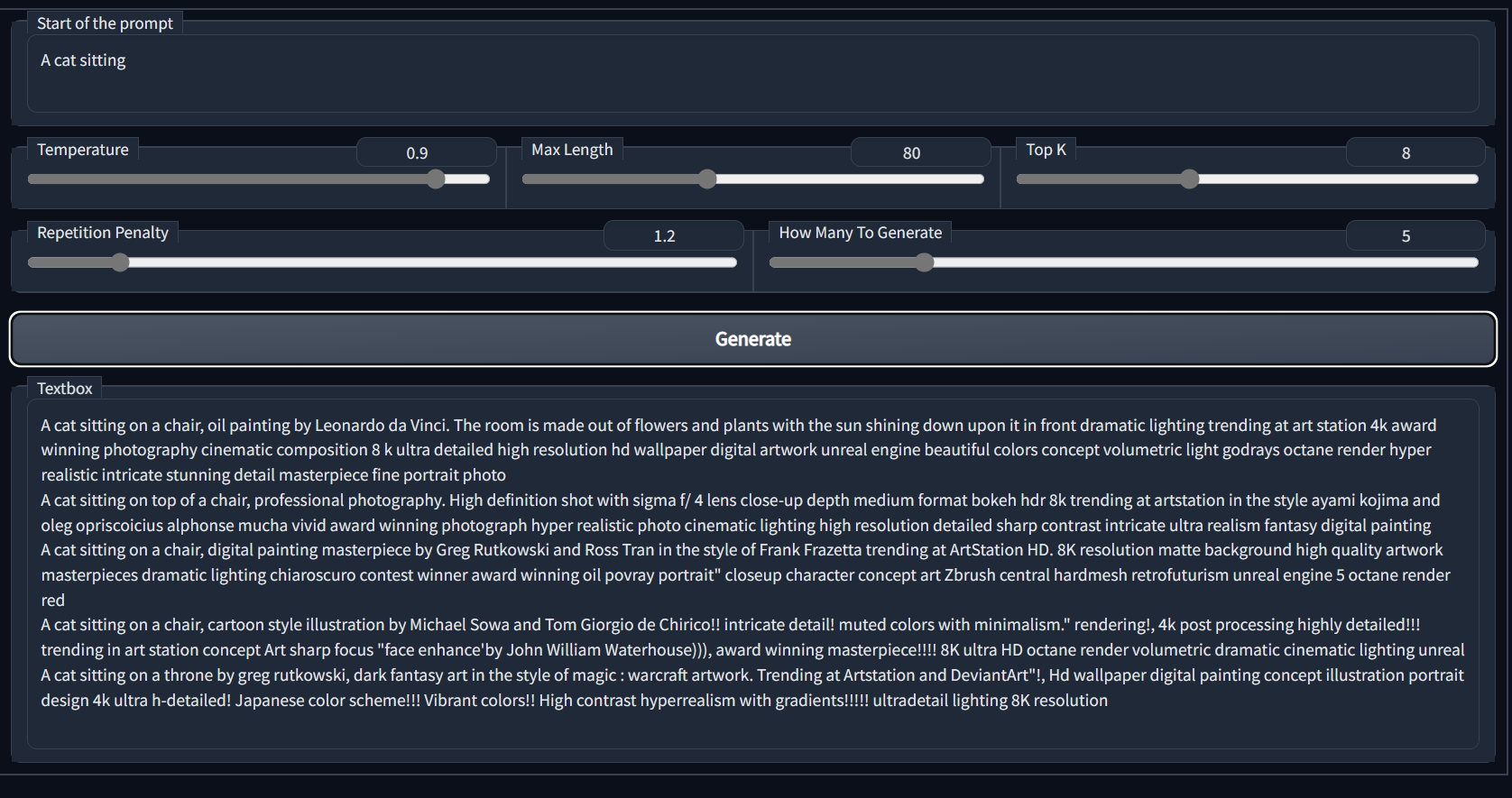

1. Write the prompt in the *Start of the prompt* text box

|

||||

2. Click Generate and wait

|

||||

|

||||

## Parameters Explanation

|

||||

## Parameters Explanation

|

||||

|

||||

- **Start of the prompt**: As the name suggests, the text that the generated prompt should start with

|

||||

- **Temperature**: A higher temperature will produce more diverse results, but with a higher risk of less coherent text

|

||||

- **Top K**: The number of tokens to sample from at each step

|

||||

- **Top K**: Each token is sampled from a shortlist of the K highest-scoring tokens. This gives high-scoring tokens other than the single best one a chance of being picked.

|

||||

- **Max Length**: The maximum number of tokens in the model's output

|

||||

- **Repetition Penalty**: The penalty value for each repetition of a token

|

||||

- **Repetition Penalty**: The parameter for repetition penalty. 1.0 means no penalty. See [this paper](https://arxiv.org/pdf/1909.05858.pdf) for more details. Default setting is 1.2

|

||||

- **How Many To Generate**: The number of results to generate

|

||||

- **Use blacklist?**: Uses `.\extensions\stable-diffusion-webui-Prompt_Generator\blacklist.txt`. Any match in the generated result is deleted (case insensitive). Each item to be filtered out should be on its own line. *Be aware that matches are simply deleted; nothing extra is generated to make up for the lost words*
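
To make the interaction between **Temperature**, **Top K**, and **Repetition Penalty** concrete, here is a minimal sketch of a single sampling step in plain Python. It is an illustration only, not the code the extension or the `transformers` library runs. The function name `sample_next_token` and its arguments are hypothetical, and it assumes a temperature greater than 0 and a penalty applied in the style of the paper linked above (positive scores divided by the penalty, negative scores multiplied by it).

```python
import math
import random


def sample_next_token(logits, previous_tokens, temperature=0.9, top_k=8,
                      repetition_penalty=1.2, rng=random):
    """Pick one token id from raw scores (hypothetical helper, for illustration)."""
    scores = list(logits)

    # Repetition penalty: make tokens that were already generated less likely.
    # A value of 1.0 leaves the scores unchanged.
    for token in set(previous_tokens):
        if scores[token] > 0:
            scores[token] /= repetition_penalty
        else:
            scores[token] *= repetition_penalty

    # Temperature: values below 1 sharpen the distribution, values above 1
    # flatten it. This sketch assumes temperature > 0.
    scores = [s / temperature for s in scores]

    # Top K: keep only the K highest-scoring tokens as the shortlist.
    shortlist = sorted(range(len(scores)), key=lambda t: scores[t], reverse=True)[:top_k]

    # Softmax over the shortlist, then draw one token at random.
    peak = max(scores[t] for t in shortlist)
    weights = [math.exp(scores[t] - peak) for t in shortlist]
    return rng.choices(shortlist, weights=weights, k=1)[0]


if __name__ == "__main__":
    toy_logits = [2.0, 1.5, 0.3, -1.0, -2.5]
    # Token 0 was already generated, so its score is penalised before sampling.
    print(sample_next_token(toy_logits, previous_tokens=[0], top_k=2,
                            rng=random.Random(0)))
```

With `top_k=1` the step always returns the best remaining token, which is why very small values of Top K tend to give repetitive results.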

|

||||

|

||||

## Models

|

||||

|

||||

There are two models provided:

|

||||

|

||||

### FredZhang7

|

||||

|

||||

Made by [FredZhang7](https://huggingface.co/FredZhang7) under the creativeml-openrail-m license. Useful for adding styles to a prompt. E.g.: "A cat sitting" -> "A cat sitting on a chair, digital art. The room is made of clay and metal with the sun shining through in front trending at Artstation 4k uhd..."

|

||||

|

||||

### MagicPrompt

|

||||

|

||||

Made by [Gustavosta](https://huggingface.co/Gustavosta) under the MIT license. Useful for getting more natural-language prompts. E.g.: "A cat sitting" -> "A cat sitting in a chair, wearing pair of sunglasses"

|

||||

|

||||

*Be aware that the model sometimes fails to produce anything, or produces fewer results than requested. In that case, either try again or use a new prompt*
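
The shortfall described above follows from how results are post-processed. In this commit the MagicPrompt path requests four times as many sequences as asked for, keeps only those that extend the base prompt by more than four characters, and optionally strips blacklisted terms. The sketch below mirrors that idea in plain Python. The function `select_prompts` and its parameters are hypothetical, it filters each candidate separately rather than the joined result string, and it escapes blacklist entries so they match literally, which is a choice made for this example.

```python
import re


def select_prompts(candidates, base_prompt, wanted, blacklist=(), min_extra_chars=4):
    """Keep up to `wanted` candidates that meaningfully extend `base_prompt`."""
    kept = []
    for text in candidates:
        # Skip results that barely add anything to the base prompt.
        if len(text) <= len(base_prompt) + min_extra_chars:
            continue
        # Delete blacklisted terms, ignoring case. Nothing is generated to
        # replace the deleted words.
        for term in blacklist:
            text = re.sub(re.escape(term), "", text, flags=re.IGNORECASE)
        kept.append(text)
        if len(kept) == wanted:
            break
    # The list may hold fewer than `wanted` items if too few candidates qualify.
    return kept


if __name__ == "__main__":
    generated = [
        "A cat",
        "A cat sitting on a chair, trending on artstation",
        "A cat sitting in a garden, oil painting",
    ]
    for number, prompt in enumerate(
            select_prompts(generated, "A cat", wanted=2,
                           blacklist=["trending on artstation"]), start=1):
        print(f"{number}: {prompt}")
```

If every candidate is too short, the function returns an empty list, which corresponds to the "fails to produce anything" case.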

|

||||

|

||||

## Credits

|

||||

|

||||

Credits to both [FredZhang7](https://huggingface.co/FredZhang7) and [Gustavosta](https://huggingface.co/Gustavosta)

|

||||

|

|

@ -0,0 +1,21 @@

|

|||

MIT License

|

||||

|

||||

Copyright (c) 2023 imrayya

|

||||

|

||||

Permission is hereby granted, free of charge, to any person obtaining a copy

|

||||

of this software and associated documentation files (the "Software"), to deal

|

||||

in the Software without restriction, including without limitation the rights

|

||||

to use, copy, modify, merge, publish, distribute, sublicense, and/or sell

|

||||

copies of the Software, and to permit persons to whom the Software is

|

||||

furnished to do so, subject to the following conditions:

|

||||

|

||||

The above copyright notice and this permission notice shall be included in all

|

||||

copies or substantial portions of the Software.

|

||||

|

||||

THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR

|

||||

IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY,

|

||||

FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE

|

||||

AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER

|

||||

LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM,

|

||||

OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN THE

|

||||

SOFTWARE.

|

||||

|

|

@ -1,8 +1,19 @@

|

|||

"""

|

||||

Copyright 2023 Imrayya

|

||||

|

||||

Permission is hereby granted, free of charge, to any person obtaining a copy of this software and associated documentation files (the "Software"), to deal in the Software without restriction, including without limitation the rights to use, copy, modify, merge, publish, distribute, sublicense, and/or sell copies of the Software, and to permit persons to whom the Software is furnished to do so, subject to the following conditions:

|

||||

|

||||

The above copyright notice and this permission notice shall be included in all copies or substantial portions of the Software.

|

||||

|

||||

THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY, FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM, OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN THE SOFTWARE.

|

||||

|

||||

"""

|

||||

|

||||

|

||||

import gradio as gr

|

||||

import modules

|

||||

from modules import script_callbacks

|

||||

from transformers import GPT2Tokenizer, GPT2LMHeadModel

|

||||

import os

|

||||

import re

|

||||

|

||||

result_prompt = ""

|

||||

|

|

@ -32,12 +43,74 @@ def get_list_blacklist():

|

|||

|

||||

def on_ui_tabs():

|

||||

# Method to create the extended prompt

|

||||

|

||||

|

||||

def generate_longer_prompt_gustavosta(prompt, temperature, top_k,

|

||||

max_length, repetition_penalty, num_return_sequences, use_blacklist=False, use_early_stop=True):

|

||||

try:

|

||||

tokenizer = GPT2Tokenizer.from_pretrained('gpt2')

|

||||

tokenizer.add_special_tokens({'pad_token': '[PAD]'})

|

||||

#Full credits for the model to Gustavosta (https://huggingface.co/Gustavosta). Under the MIT license

|

||||

model = GPT2LMHeadModel.from_pretrained(

|

||||

'Gustavosta/MagicPrompt-Dalle')

|

||||

except Exception as e:

|

||||

print(f"Exception encountered while attempting to load the tokenizer and model: {e}")

|

||||

return gr.update(), f"Error: {e}"

|

||||

try:

|

||||

min = len(prompt)

|

||||

print(f"Generate new prompt from: \"{prompt}\"")

|

||||

input_ids = tokenizer(prompt, return_tensors='pt').input_ids

|

||||

output = model.generate(input_ids, do_sample=True, temperature=temperature,

|

||||

top_k=top_k, max_length=max_length,

|

||||

num_return_sequences=num_return_sequences*4,

|

||||

repetition_penalty=repetition_penalty,

|

||||

penalty_alpha=0.6, no_repeat_ngram_size=1,

|

||||

early_stopping=use_early_stop)

|

||||

print("Generation complete!")

|

||||

tempString = ""

|

||||

if (use_blacklist):

|

||||

blacklist = get_list_blacklist()

|

||||

j = 0

|

||||

for i in range(len(output)):

|

||||

tempt_of_temp_String = tokenizer.decode(

|

||||

output[i], skip_special_tokens=True)

|

||||

# print(tempt_of_temp_String[:-1], j,

|

||||

# len(tempt_of_temp_String) > min + 4) # Debugger

|

||||

if (len(tempt_of_temp_String) > min + 4):

|

||||

tempString += str(j+1) + ": " + tempt_of_temp_String

|

||||

j += 1

|

||||

else:

|

||||

continue

|

||||

if (use_blacklist):

|

||||

for to_check in blacklist:

|

||||

tempString = re.sub(

|

||||

to_check, "", tempString, flags=re.IGNORECASE)

|

||||

if (j == num_return_sequences):

|

||||

break

|

||||

|

||||

def generate_longer_prompt(prompt, temperature, top_k,

|

||||

max_length, repetition_penalty, num_return_sequences, use_blacklist=False):

|

||||

global result_prompt

|

||||

result_prompt = tempString

|

||||

# print(result_prompt)

|

||||

|

||||

return {results: tempString,

|

||||

send_to_img2img: gr.update(visible=True),

|

||||

send_to_txt2img: gr.update(visible=True),

|

||||

send_to_text: gr.update(visible=True),

|

||||

results_col: gr.update(visible=True),

|

||||

warning: gr.update(visible=True),

|

||||

promptNum_col: gr.update(visible=True)

|

||||

}

|

||||

except Exception as e:

|

||||

print(

|

||||

f"Exception encountered while attempting to generate prompt: {e}")

|

||||

return gr.update(), f"Error: {e}"

|

||||

|

||||

def generate_longer_prompt_FredZhang7(prompt, temperature, top_k,

|

||||

max_length, repetition_penalty, num_return_sequences, use_blacklist=False):

|

||||

try:

|

||||

tokenizer = GPT2Tokenizer.from_pretrained('distilgpt2')

|

||||

tokenizer.add_special_tokens({'pad_token': '[PAD]'})

|

||||

#Full credits for the model to FredZhang7 (https://huggingface.co/FredZhang7). Under creativeml-openrail-m license.

|

||||

model = GPT2LMHeadModel.from_pretrained(

|

||||

'FredZhang7/distilgpt2-stable-diffusion-v2')

|

||||

except Exception as e:

|

||||

|

|

@ -50,7 +123,7 @@ def on_ui_tabs():

|

|||

top_k=top_k, max_length=max_length,

|

||||

num_return_sequences=num_return_sequences,

|

||||

repetition_penalty=repetition_penalty,

|

||||

penalty_alpha=0.6, no_repeat_ngram_size=1,

|

||||

penalty_alpha=0.6, no_repeat_ngram_size=1,

|

||||

early_stopping=True)

|

||||

print("Generation complete!")

|

||||

tempString = ""

|

||||

|

|

@ -69,11 +142,12 @@ def on_ui_tabs():

|

|||

global result_prompt

|

||||

|

||||

result_prompt = tempString

|

||||

print(result_prompt)

|

||||

# print(result_prompt)

|

||||

|

||||

return {results: tempString,

|

||||

send_to_img2img: gr.update(visible=True),

|

||||

send_to_txt2img: gr.update(visible=True),

|

||||

send_to_text: gr.update(visible=True),

|

||||

results_col: gr.update(visible=True),

|

||||

warning: gr.update(visible=True),

|

||||

promptNum_col: gr.update(visible=True)

|

||||

|

|

@ -82,9 +156,8 @@ def on_ui_tabs():

|

|||

print(

|

||||

f"Exception encountered while attempting to generate prompt: {e}")

|

||||

return gr.update(), f"Error: {e}"

|

||||

|

||||

|

||||

# structure

|

||||

|

||||

# UI structure

|

||||

txt2img_prompt = modules.ui.txt2img_paste_fields[0][0]

|

||||

img2img_prompt = modules.ui.img2img_paste_fields[0][0]

|

||||

|

||||

|

|

@ -98,7 +171,7 @@ def on_ui_tabs():

|

|||

temp_slider = gr.Slider(

|

||||

elem_id="temp_slider", label="Temperature", interactive=True, minimum=0, maximum=1, value=0.9)

|

||||

max_length_slider = gr.Slider(

|

||||

elem_id="max_length_slider", label="Max Length", interactive=True, minimum=1, maximum=200, step=1, value=80)

|

||||

elem_id="max_length_slider", label="Max Length", interactive=True, minimum=1, maximum=200, step=1, value=90)

|

||||

top_k_slider = gr.Slider(

|

||||

elem_id="top_k_slider", label="Top K", value=8, minimum=1, maximum=20, interactive=True)

|

||||

with gr.Column():

|

||||

|

|

@ -113,8 +186,10 @@ def on_ui_tabs():

|

|||

gr.HTML(value="<center>Using <code>\".\\extensions\\stable-diffusion-webui-Prompt_Generator\\blacklist.txt\"</code>.<br>It will delete any matches to the generated result (case insensitive).</center>")

|

||||

with gr.Column():

|

||||

with gr.Row():

|

||||

generateButton = gr.Button(

|

||||

value="Generate", elem_id="generate_button")

|

||||

generateButton_fred = gr.Button(

|

||||

value="Generate Using FredZhang7", elem_id="generate_button_FredZhang7")

|

||||

generateButton_magic = gr.Button(

|

||||

value="Generate Using Magic Prompt", elem_id="generate_button_MagicPrompt")

|

||||

with gr.Column(visible=False) as results_col:

|

||||

results = gr.Text(

|

||||

label="Results", elem_id="Results_textBox", interactive=False)

|

||||

|

|

@ -128,23 +203,33 @@ def on_ui_tabs():

|

|||

with gr.Row():

|

||||

send_to_txt2img = gr.Button('Send to txt2img', visible=False)

|

||||

send_to_img2img = gr.Button('Send to img2img', visible=False)

|

||||

send_to_text = gr.Button(

|

||||

'Send back to prompter', visible=False)

|

||||

|

||||

|

||||

# events

|

||||

generateButton.click(fn=generate_longer_prompt, inputs=[

|

||||

promptTxt, temp_slider, top_k_slider, max_length_slider,

|

||||

repetition_penalty_slider, num_return_sequences_slider,

|

||||

use_blacklist_checkbox],

|

||||

outputs=[results, send_to_img2img, send_to_txt2img,

|

||||

results_col, warning, promptNum_col])

|

||||

generateButton_fred.click(fn=generate_longer_prompt_FredZhang7, inputs=[

|

||||

promptTxt, temp_slider, top_k_slider, max_length_slider,

|

||||

repetition_penalty_slider, num_return_sequences_slider,

|

||||

use_blacklist_checkbox],

|

||||

outputs=[results, send_to_img2img, send_to_txt2img, send_to_text,

|

||||

results_col, warning, promptNum_col])

|

||||

generateButton_magic.click(fn=generate_longer_prompt_gustavosta, inputs=[

|

||||

promptTxt, temp_slider, top_k_slider, max_length_slider,

|

||||

repetition_penalty_slider, num_return_sequences_slider,

|

||||

use_blacklist_checkbox],

|

||||

outputs=[results, send_to_img2img, send_to_txt2img, send_to_text,

|

||||

results_col, warning, promptNum_col])

|

||||

send_to_img2img.click(add_to_prompt, inputs=[

|

||||

promptNum], outputs=[img2img_prompt])

|

||||

send_to_txt2img.click(add_to_prompt, inputs=[

|

||||

promptNum], outputs=[txt2img_prompt])

|

||||

send_to_text.click(add_to_prompt, inputs=[

|

||||

promptNum], outputs=[promptTxt])

|

||||

send_to_txt2img.click(None, _js='switch_to_txt2img',

|

||||

inputs=None, outputs=None)

|

||||

send_to_img2img.click(None, _js="switch_to_img2img",

|

||||

inputs=None, outputs=None)

|

||||

return (prompt_generator, "Prompt Generator", "Prompt Generator"),

|

||||

|

||||

|

||||

script_callbacks.on_ui_tabs(on_ui_tabs)
