ver 4

parent

5cb02a1c27

commit

d82b0e871e

25

README.md

25

README.md

|

|

@ -7,23 +7,13 @@

|

||||||

# Recent Update

|

# Recent Update

|

||||||

All updates can be found [here](https://github.com/hako-mikan/sd-webui-supermerger/blob/ver2/changelog.md)

|

All updates can be found [here](https://github.com/hako-mikan/sd-webui-supermerger/blob/ver2/changelog.md)

|

||||||

|

|

||||||

|

### update 2023.03.02.1900(JST)

|

||||||

|

- Elemental Merge feature added. Details [here](https://github.com/hako-mikan/sd-webui-supermerger/blob/ver2/elementa_en.md).

|

||||||

|

|

||||||

|

|

||||||

### update 2023.02.20.2000(JST)

|

### update 2023.02.20.2000(JST)

|

||||||

The timing of importing "diffusers" has been changed. With this update, some environments can be started without installing "diffusers".

|

The timing of importing "diffusers" has been changed. With this update, some environments can be started without installing "diffusers".

|

||||||

|

|

||||||

### bug fix 2023.02.19.2330(JST)

|

|

||||||

Several bugs have been fixed

|

|

||||||

- Error when LOWRAM option is enabled

|

|

||||||

- Error on Linux

|

|

||||||

- XY plot did not finish properly

|

|

||||||

- Error when setting unused models

|

|

||||||

|

|

||||||

### update to version 3 2023.02.17.2020(JST)

|

|

||||||

- Added LoRA related functions

|

|

||||||

- Logs can now be saved and settings can be recalled.

|

|

||||||

- Save in safetensors and fp16 format is now supported.

|

|

||||||

- Weight presets are now supported.

|

|

||||||

- Reservation of XY plots is now possible.

|

|

||||||

|

|

||||||

diffusers must now be installed. On Windows, this can be done by running "pip install diffusers" at the command prompt in the web-ui folder, but it depends on your environment.

|

diffusers must now be installed. On Windows, this can be done by running "pip install diffusers" at the command prompt in the web-ui folder, but it depends on your environment.

|

||||||

|

|

||||||

# overview

|

# overview

|

||||||

|

|

@ -91,6 +81,13 @@ BASE,IN00,IN01,IN02,IN03,IN04,IN05,IN06,IN07,IN08,IN09,IN10,IN11,M00,OUT00,OUT01

|

||||||

### Reserve XY plot

|

### Reserve XY plot

|

||||||

The Reserve XY plot button reserves the execution of an XY plot for the setting at the time the button is pressed, instead of immediately executing the plot. The reserved XY plot will be executed after the normal XY plot is completed or by pressing the Start XY plot button on the Reservation tab. Reservations can be made at any time during the execution or non-execution of an XY plot. The reservation list is not automatically updated, so use the Reload button. If an error occurs, the plot is discarded and the next reservation is executed. Images will not be displayed until all reservations are finished, but those that have been marked "Finished" have finished generating the grid and can be viewed in the Image Browser or other applications.

|

The Reserve XY plot button reserves the execution of an XY plot for the setting at the time the button is pressed, instead of immediately executing the plot. The reserved XY plot will be executed after the normal XY plot is completed or by pressing the Start XY plot button on the Reservation tab. Reservations can be made at any time during the execution or non-execution of an XY plot. The reservation list is not automatically updated, so use the Reload button. If an error occurs, the plot is discarded and the next reservation is executed. Images will not be displayed until all reservations are finished, but those that have been marked "Finished" have finished generating the grid and can be viewed in the Image Browser or other applications.

|

||||||

|

|

||||||

|

You can also split a plot into separate reservations at any position by using "|".

|

||||||

|

Entering "0.1,0.2,0.3,0.4,0.5|0.6,0.7,0.8,0.9,1.0" produces

|

||||||

|

|

||||||

|

0.1,0.2,0.3,0.4,0.5

|

||||||

|

0.6,0.7,0.8,0.9,1.0

|

||||||

|

The grid is divided into two reservations, "0.1,0.2,0.3,0.4,0.5" and "0.6,0.7,0.8,0.9,1.0", which are then executed. This may be useful when there are too many elements and the grid becomes too large.
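As a rough illustration of the "|" splitting described above, the following sketch breaks an axis string into one value list per reservation. The function name `split_reservations` is hypothetical and is not taken from the extension's code; it only mirrors the behavior described in the text.

```python
def split_reservations(axis_values: str) -> list[list[str]]:
    """Split an XY plot axis string into one value list per reservation.

    Hypothetical helper: "|" separates reservations, "," separates values.
    """
    groups = []
    for chunk in axis_values.split("|"):
        # Drop surrounding whitespace and ignore empty entries.
        values = [v.strip() for v in chunk.split(",") if v.strip()]
        if values:
            groups.append(values)
    return groups


for group in split_reservations("0.1,0.2,0.3,0.4,0.5|0.6,0.7,0.8,0.9,1.0"):
    print(",".join(group))
```

Each inner list corresponds to one reserved grid, so a long axis can be cut into several smaller grids.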

|

||||||

|

|

||||||

### About Cache

|

### About Cache

|

||||||

By storing models in memory, continuous merging and other operations can be sped up.

|

By storing models in memory, continuous merging and other operations can be sped up.

|

||||||

Cache settings can be configured from web-ui's setting menu.

|

Cache settings can be configured from web-ui's setting menu.
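The idea of keeping models in memory can be sketched as a small least-recently-used cache. This is only an assumption about how such a cache might work, not the extension's actual implementation; `ModelCache`, `load_fn`, and `max_models` are hypothetical names introduced for illustration.

```python
from collections import OrderedDict


class ModelCache:
    """Keep the most recently used models in memory (illustrative sketch)."""

    def __init__(self, load_fn, max_models=2):
        self._load_fn = load_fn        # hypothetical loader, e.g. reads a checkpoint
        self._max_models = max_models  # hypothetical limit on cached models
        self._models = OrderedDict()

    def get(self, name):
        if name in self._models:
            # Cache hit: mark as most recently used and skip loading.
            self._models.move_to_end(name)
            return self._models[name]
        model = self._load_fn(name)
        self._models[name] = model
        if len(self._models) > self._max_models:
            # Evict the least recently used model to bound memory use.
            self._models.popitem(last=False)
        return model
```

With a cache like this, repeated merges that reuse the same models avoid reloading them from disk, at the cost of extra memory.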

|

||||||

|

|

|

||||||

25

README_ja.md

25

README_ja.md

|

|

@ -6,23 +6,12 @@

|

||||||

The full update history can be found [here](https://github.com/hako-mikan/sd-webui-supermerger/blob/ver2/changelog.md).

|

The full update history can be found [here](https://github.com/hako-mikan/sd-webui-supermerger/blob/ver2/changelog.md).

|

||||||

All updates can be found [here](https://github.com/hako-mikan/sd-webui-supermerger/blob/ver2/changelog.md).

|

All updates can be found [here](https://github.com/hako-mikan/sd-webui-supermerger/blob/ver2/changelog.md).

|

||||||

|

|

||||||

|

### update 2023.03.02.1900(JST)

|

||||||

|

- Implemented the Elemental Merge feature. Details are [here](https://github.com/hako-mikan/sd-webui-supermerger/blob/ver2/elemental_ja.md)

|

||||||

|

|

||||||

### update 2023.02.20.2000(JST)

|

### update 2023.02.20.2000(JST)

|

||||||

The timing of importing "diffusers" has been changed. With this update, some environments can start without "diffusers" installed.

|

The timing of importing "diffusers" has been changed. With this update, some environments can start without "diffusers" installed.

|

||||||

|

|

||||||

### bug fix 2023.02.19.2330(JST)

|

|

||||||

Several bugs have been fixed

|

|

||||||

- Error when the LOWRAM option is enabled

|

|

||||||

- Error on Linux

|

|

||||||

- XY plot did not finish properly

|

|

||||||

- Error when selecting a model that has not been loaded

|

|

||||||

|

|

||||||

### update to version 3 2023.02.17.2020(JST)

|

|

||||||

- Added LoRA-related functions

|

|

||||||

- Logs can now be saved and settings can be recalled

|

|

||||||

- Saving in safetensors and fp16 formats is now supported

|

|

||||||

- Weight presets are now supported

|

|

||||||

- XY plots can now be reserved

|

|

||||||

|

|

||||||

diffusers must now be installed. On Windows, it can sometimes be installed by running "pip install diffusers" from a command prompt in the web-ui folder, but this depends on your environment.

|

diffusers must now be installed. On Windows, it can sometimes be installed by running "pip install diffusers" from a command prompt in the web-ui folder, but this depends on your environment.

|

||||||

#

|

#

|

||||||

|

|

||||||

|

|

@ -89,7 +78,13 @@ IN01,OUT10 OUT11, OUT03-OUT06,OUT07-OUT11,NOT M00 OUT03-OUT06

|

||||||

BASE,IN00,IN01,IN02,IN03,IN04,IN05,IN06,IN07,IN08,IN09,IN10,IN11,M00,OUT00,OUT01,OUT02,OUT03,OUT04,OUT05,OUT06,OUT07,OUT08,OUT09,OUT10,OUT11

|

BASE,IN00,IN01,IN02,IN03,IN04,IN05,IN06,IN07,IN08,IN09,IN10,IN11,M00,OUT00,OUT01,OUT02,OUT03,OUT04,OUT05,OUT06,OUT07,OUT08,OUT09,OUT10,OUT11

|

||||||

|

|

||||||

### Reserve XY plot

|

### Reserve XY plot

|

||||||

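The Reserve XY plot button does not run the plot immediately; it reserves an XY plot with the settings in effect when the button is pressed. Reserved XY plots start running after the normal XY plot finishes, or when the Start XY plot button on the Reservation tab is pressed. Reservations can be made at any time, whether or not an XY plot is running. The reservation list is not updated automatically, so use the Reload button. If an error occurs, that plot is discarded and the next reservation runs. Images are not displayed until all reservations have finished, but grids for items marked "Finished" have already been generated and can be viewed in the Image Browser or similar tools.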

|

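The Reserve XY plot button does not run the plot immediately; it reserves an XY plot with the settings in effect when the button is pressed. Reserved XY plots start running after the normal XY plot finishes, or when the Start XY plot button on the Reservation tab is pressed. Reservations can be made at any time, whether or not an XY plot is running. The reservation list is not updated automatically, so use the Reload button. If an error occurs, that plot is discarded and the next reservation runs. Images are not displayed until all reservations have finished, but grids for items marked "Finished" have already been generated and can be viewed in the Image Browser or similar tools.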

|

||||||

|

You can also split a plot into separate reservations at any position by using "|".

|

||||||

|

Entering "0.1,0.2,0.3,0.4,0.5|0.6,0.7,0.8,0.9,1.0" produces

|

||||||

|

|

||||||

|

0.1,0.2,0.3,0.4,0.5

|

||||||

|

0.6,0.7,0.8,0.9,1.0

|

||||||

|

The grid is split into these two reservations, which are then executed. This is useful when there are too many elements and the grid would become too large.

|

||||||

|

|

||||||

### About Cache

|

### About Cache

|

||||||

By storing models in memory, continuous merging and other operations can be sped up.

|

By storing models in memory, continuous merging and other operations can be sped up.

|

||||||

|

|

|

||||||

28

changelog.md

28

changelog.md

|

|

@ -1,4 +1,32 @@

|

||||||

# Changelog

|

# Changelog

|

||||||

|

### bug fix 2023.02.19.2330(JST)

|

||||||

|

Several bugs have been fixed

|

||||||

|

- Error when the LOWRAM option is enabled

|

||||||

|

- Error on Linux

|

||||||

|

- XY plot did not finish properly

|

||||||

|

- Error when selecting a model that has not been loaded

|

||||||

|

|

||||||

|

### update to version 3 2023.02.17.2020(JST)

|

||||||

|

- Added LoRA-related functions

|

||||||

|

- Logs can now be saved and settings can be recalled

|

||||||

|

- Saving in safetensors and fp16 formats is now supported

|

||||||

|

- Weight presets are now supported

|

||||||

|

- XY plots can now be reserved

|

||||||

|

|

||||||

|

### bug fix 2023.02.19.2330(JST)

|

||||||

|

Several bugs have been fixed

|

||||||

|

- Error when LOWRAM option is enabled

|

||||||

|

- Error on Linux

|

||||||

|

- XY plot did not finish properly

|

||||||

|

- Error when setting unused models

|

||||||

|

|

||||||

|

### update to version 3 2023.02.17.2020(JST)

|

||||||

|

- Added LoRA related functions

|

||||||

|

- Logs can now be saved and settings can be recalled.

|

||||||

|

- Save in safetensors and fp16 format is now supported.

|

||||||

|

- Weight presets are now supported.

|

||||||

|

- Reservation of XY plots is now possible.

|

||||||

|

|

||||||

### bug fix 2023.01.29.0000(JST)

|

### bug fix 2023.01.29.0000(JST)

|

||||||

Fixed an issue where pinpoint blocks could not be used on the X axis.

|

Fixed an issue where pinpoint blocks could not be used on the X axis.

|

||||||

Fixed an issue where Triple and Twice could not be used when pinpoint blocks was selected.

|

Fixed an issue where Triple and Twice could not be used when pinpoint blocks was selected.

|

||||||

|

|

|

||||||

|

|

@ -0,0 +1,118 @@

|

||||||

|

# Elemental Merge

|

||||||

|

- This is a block-by-block merge that goes beyond block-by-block merge.

|

||||||

|

|

||||||

|

In a block-by-block merge, the merge ratio can be changed for each of the 25 blocks, but each block also consists of multiple elements, and in principle the ratio can be changed for each element. There are more than 600 elements, and it was doubtful whether they could be handled by hand, but we implemented it anyway. Merging element by element from the start is not recommended. Use it as a final adjustment when a problem arises that cannot be solved by block-by-block merging.

|

||||||

|

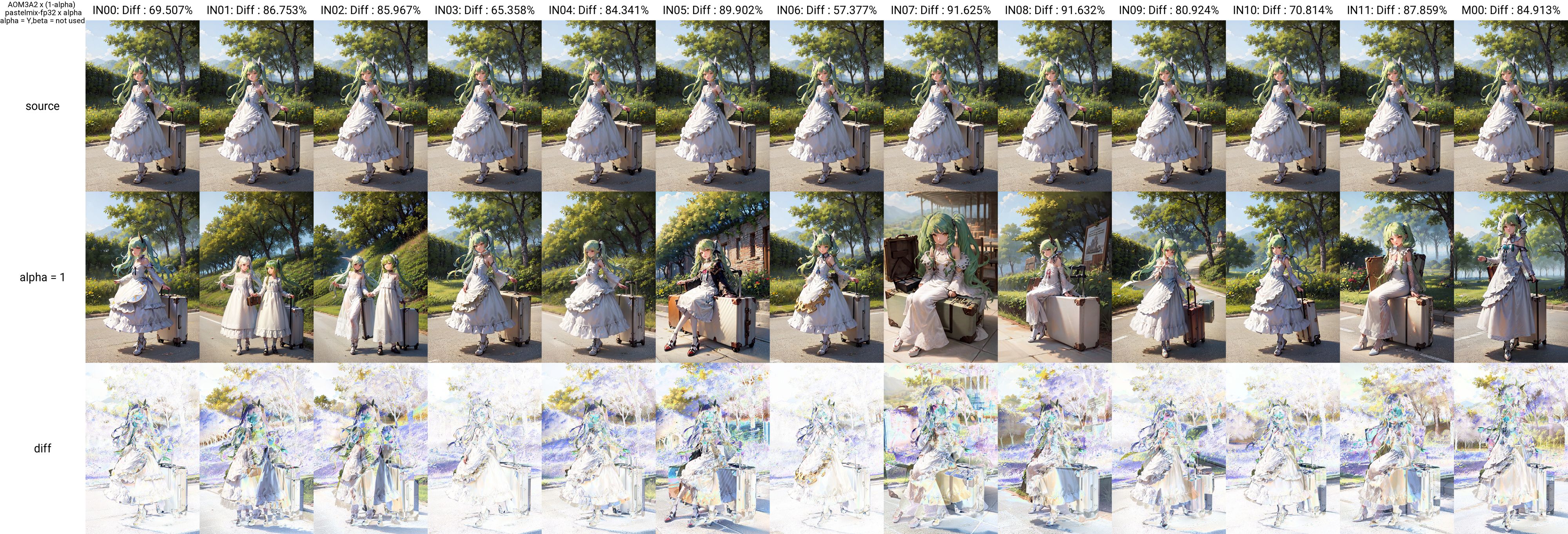

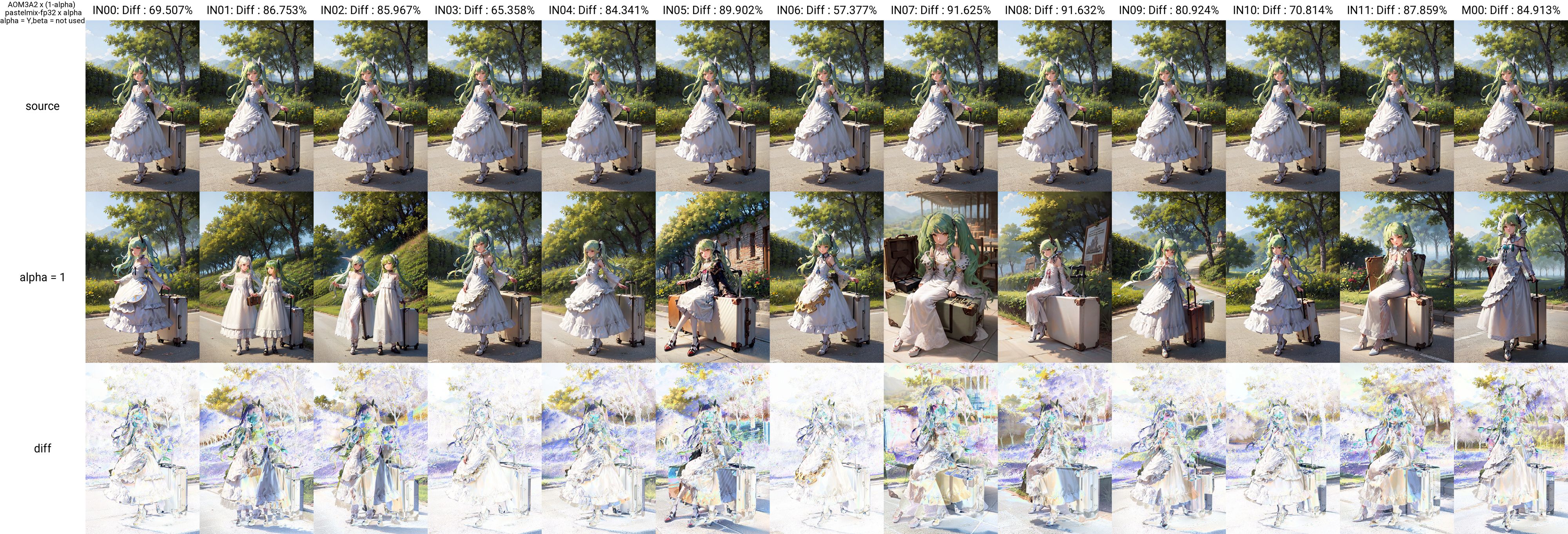

The following images show the result of changing the elements in the OUT05 layer. The leftmost one is without merging, the second one is all the OUT05 layers (i.e., normal block-by-block merging), and the rest are element merging. As shown in the table below, there are several more elements in attn2, etc.

|

||||||

|

|

||||||

|

## Usage

|

||||||

|

Note that elemental merging is effective for both normal and block-by-block merging, and is computed last, so it will overwrite values specified for block-by-block merging.

|

||||||

|

|

||||||

|

Enter the settings in the Elemental Merge field. Note that any text entered here is applied automatically. Each element is listed in the table below, but it is not necessary to enter the full name of each element.

|

||||||

|

You can check whether the effect is applied properly by enabling the "print change" checkbox. When it is enabled, the applied elements are displayed on the command prompt screen during the merge.

|

||||||

|

|

||||||

|

### Format

|

||||||

|

Blocks:Element:Ratio, Blocks:Element:Ratio,...

|

||||||

|

or

|

||||||

|

Blocks:Element:Ratio

|

||||||

|

Blocks:Element:Ratio

|

||||||

|

Blocks:Element:Ratio

|

||||||

|

|

||||||

|

Multiple specifications can be specified by separating them with commas or newlines. Commas and newlines may be mixed.

|

||||||

|

Blocks are specified in uppercase: BASE, IN00 through IN11, M00, and OUT00 through OUT11. If left blank, the setting applies to all blocks. Multiple blocks can be specified by separating them with spaces.

|

||||||

|

Similarly, multiple elements can be specified by separating them with a space.

|

||||||

|

Partial matching is used, so for example, typing "attn" will change both attn1 and attn2, and typing "attn2" will change only attn2. If you want to specify more details, enter "attn2.to_out" and so on.

|

||||||

|

|

||||||

|

OUT03 OUT04 OUT05:attn2 attn1.to_out:0.5

|

||||||

|

|

||||||

|

With this input, the ratio of elements containing attn2 and attn1.to_out in the OUT03, OUT04, and OUT05 layers will be 0.5.

|

||||||

|

If the element column is left blank, all elements in the specified Blocks will change, and the effect will be the same as a block-by-block merge.

|

||||||

|

If there are duplicate specifications, the one entered later takes precedence.

|

||||||

|

|

||||||

|

OUT06:attn:0.5,OUT06:attn2.to_k:0.2

|

||||||

|

|

||||||

|

With this input, attn elements other than attn2.to_k in the OUT06 layer will be 0.5, and only attn2.to_k will be 0.2.

|

||||||

|

|

||||||

|

You can invert the scope of the effect by entering NOT first.

|

||||||

|

NOT can be set separately for Blocks and for Element.

|

||||||

|

|

||||||

|

NOT OUT04:attn:1

|

||||||

|

|

||||||

|

With this input, a ratio of 1 is set for the attn elements of all blocks except the OUT04 layer.

|

||||||

|

|

||||||

|

OUT05:NOT attn proj:0.2

|

||||||

|

|

||||||

|

With this input, all elements in the OUT05 layer except attn and proj are set to 0.2.
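The format rules above (comma or newline separation, space-separated blocks and elements, partial matching, NOT, and later entries taking precedence) can be sketched as a small parser. This is a minimal illustration under those stated rules, not the extension's actual code; `Rule`, `parse_rules`, and `resolve_ratio` are hypothetical names.

```python
from typing import NamedTuple


class Rule(NamedTuple):
    blocks: list          # block names, empty means "all blocks"
    blocks_inverted: bool
    elements: list        # element substrings, empty means "all elements"
    elements_inverted: bool
    ratio: float


def _split_not(field: str):
    # A leading NOT inverts the selection for this field only.
    tokens = field.split()
    inverted = bool(tokens) and tokens[0] == "NOT"
    return (tokens[1:] if inverted else tokens), inverted


def parse_rules(text: str) -> list:
    rules = []
    # Commas and newlines are interchangeable separators.
    for spec in text.replace("\n", ",").split(","):
        spec = spec.strip()
        if not spec:
            continue
        blocks_field, elements_field, ratio_field = spec.split(":")
        blocks, b_inv = _split_not(blocks_field)
        elements, e_inv = _split_not(elements_field)
        rules.append(Rule(blocks, b_inv, elements, e_inv, float(ratio_field)))
    return rules


def resolve_ratio(rules: list, block: str, element: str, default: float) -> float:
    ratio = default
    for rule in rules:
        block_hit = not rule.blocks or ((block in rule.blocks) != rule.blocks_inverted)
        # Elements use partial (substring) matching.
        matched = any(name in element for name in rule.elements)
        element_hit = not rule.elements or (matched != rule.elements_inverted)
        if block_hit and element_hit:
            ratio = rule.ratio  # later rules override earlier ones
    return ratio
```

For example, with `parse_rules("OUT06:attn:0.5,OUT06:attn2.to_k:0.2")`, an element whose name contains `attn2.to_k` in OUT06 resolves to 0.2, while other attn elements in OUT06 resolve to 0.5.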

|

||||||

|

|

||||||

|

## XY plot

|

||||||

|

Several XY plots for elemental merge are available. Input examples can be found in sample.txt.

|

||||||

|

#### elemental

|

||||||

|

Creates XY plots for multiple elemental merges. Elements should be separated from each other by blank lines.

|

||||||

|

The following image is the result of executing sample1 of sample.txt.
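The blank-line separation used for the elemental XY plot can be sketched as follows. This is a simplified illustration: `split_elemental_entries` is a hypothetical name, and the sketch does not model any special handling of leading blank lines.

```python
import re


def split_elemental_entries(text: str) -> list:
    """Split XY plot input into entries separated by one or more blank lines."""
    parts = re.split(r"\n\s*\n", text.strip())
    return [part.strip() for part in parts if part.strip()]


sample = (
    "IN07:attn1:0.5,IN07:attn2:0.2\n"
    "\n"
    "OUT03:NOT attn1.to_k.weight:0.5\n"
    "OUT03:attn1.to_k.weight:0.3\n"
    "\n"
    "OUT04:NOT ff.net:0.5\n"
)
for entry in split_elemental_entries(sample):
    print(repr(entry))
```

Each entry keeps its internal commas and newlines, so it can still hold several Blocks:Element:Ratio specifications.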

|

||||||

|

|

||||||

|

#### pinpoint element

|

||||||

|

Creates an XY plot with different values for a specific element. This does for elements what Pinpoint Blocks does for blocks. Specify alpha for the opposite axis. Separate elements with a new line or comma.

|

||||||

|

The following image shows the result of running sample 3 of sample.txt.

|

||||||

|

|

||||||

|

|

||||||

|

#### effective elemental checker

|

||||||

|

Outputs the influence of each element as a difference. Optionally, an animated gif and a csv file can also be output. The gif and csv files are created in a diff folder, inside a folder named after ModelA and ModelB, under the output folder. If a file name already exists, the file is saved under a different name, but this becomes confusing as the number of files grows, so renaming the diff folder to something appropriate is recommended.

|

||||||

|

Separate entries with a new line or comma. Use alpha for the opposite axis and enter a single value. This is useful for seeing the effect of an element, but by not specifying an element you can also see the effect of a whole block, so you may use it that way more often.

|

||||||

|

The following image shows the result of running sample5 of sample.txt.

|

||||||

|

|

||||||

|

|

||||||

|

### List of elements

|

||||||

|

Basically, it seems that attn is responsible for the face and clothing information. The IN07, OUT03, OUT04, and OUT05 layers seem to have a particularly strong influence. It does not seem to make sense to change the same element in multiple Blocks at the same time, since the degree of influence often differs depending on the Blocks.

|

||||||

|

No element exists where it is marked null.

|

||||||

|

|

||||||

|

||IN00|IN01|IN02|IN03|IN04|IN05|IN06|IN07|IN08|IN09|IN10|IN11|M00|M00|OUT00|OUT01|OUT02|OUT03|OUT04|OUT05|OUT06|OUT07|OUT08|OUT09|OUT10|OUT11

|

||||||

|

|-|-|-|-|-|-|-|-|-|-|-|-|-|-|-|-|-|-|-|-|-|-|-|-|-|-|-|

|

||||||

|

op.bias|null|null|null||null|null||null|null||null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null

|

||||||

|

op.weight|null|null|null||null|null||null|null||null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null

|

||||||

|

emb_layers.1.bias|null|||null|||null|||null|null|||||||||||||||

|

||||||

|

emb_layers.1.weight|null|||null|||null|||null|null|||||||||||||||

|

||||||

|

in_layers.0.bias|null|||null|||null|||null|null|||||||||||||||

|

||||||

|

in_layers.0.weight|null|||null|||null|||null|null|||||||||||||||

|

||||||

|

in_layers.2.bias|null|||null|||null|||null|null|||||||||||||||

|

||||||

|

in_layers.2.weight|null|||null|||null|||null|null|||||||||||||||

|

||||||

|

out_layers.0.bias|null|||null|||null|||null|null|||||||||||||||

|

||||||

|

out_layers.0.weight|null|||null|||null|||null|null|||||||||||||||

|

||||||

|

out_layers.3.bias|null|||null|||null|||null|null|||||||||||||||

|

||||||

|

out_layers.3.weight|null|||null|||null|||null|null|||||||||||||||

|

||||||

|

skip_connection.bias|null|||null||null|null|||null|null|null|null|null||||||||||||

|

||||||

|

skip_connection.weight|null|||null||null|null|||null|null|null|null|null||||||||||||

|

||||||

|

norm.bias|null|||null|||null|||null|null|null||null|null|null|null|||||||||

|

||||||

|

norm.weight|null|||null|||null|||null|null|null||null|null|null|null|||||||||

|

||||||

|

proj_in.bias|null|||null|||null|||null|null|null||null|null|null|null|||||||||

|

||||||

|

proj_in.weight|null|||null|||null|||null|null|null||null|null|null|null|||||||||

|

||||||

|

proj_out.bias|null|||null|||null|||null|null|null||null|null|null|null|||||||||

|

||||||

|

proj_out.weight|null|||null|||null|||null|null|null||null|null|null|null|||||||||

|

||||||

|

transformer_blocks.0.attn1.to_k.weight|null|||null|||null|||null|null|null||null|null|null|null|||||||||

|

||||||

|

transformer_blocks.0.attn1.to_out.0.bias|null|||null|||null|||null|null|null||null|null|null|null|||||||||

|

||||||

|

transformer_blocks.0.attn1.to_out.0.weight|null|||null|||null|||null|null|null||null|null|null|null|||||||||

|

||||||

|

transformer_blocks.0.attn1.to_q.weight|null|||null|||null|||null|null|null||null|null|null|null|||||||||

|

||||||

|

transformer_blocks.0.attn1.to_v.weight|null|||null|||null|||null|null|null||null|null|null|null|||||||||

|

||||||

|

transformer_blocks.0.attn2.to_k.weight|null|||null|||null|||null|null|null||null|null|null|null|||||||||

|

||||||

|

transformer_blocks.0.attn2.to_out.0.bias|null|||null|||null|||null|null|null||null|null|null|null|||||||||

|

||||||

|

transformer_blocks.0.attn2.to_out.0.weight|null|||null|||null|||null|null|null||null|null|null|null|||||||||

|

||||||

|

transformer_blocks.0.attn2.to_q.weight|null|||null|||null|||null|null|null||null|null|null|null|||||||||

|

||||||

|

transformer_blocks.0.attn2.to_v.weight|null|||null|||null|||null|null|null||null|null|null|null|||||||||

|

||||||

|

transformer_blocks.0.ff.net.0.proj.bias|null|||null|||null|||null|null|null||null|null|null|null|||||||||

|

||||||

|

transformer_blocks.0.ff.net.0.proj.weight|null|||null|||null|||null|null|null||null|null|null|null|||||||||

|

||||||

|

transformer_blocks.0.ff.net.2.bias|null|||null|||null|||null|null|null||null|null|null|null|||||||||

|

||||||

|

transformer_blocks.0.ff.net.2.weight|null|||null|||null|||null|null|null||null|null|null|null|||||||||

|

||||||

|

transformer_blocks.0.norm1.bias|null|||null|||null|||null|null|null||null|null|null|null|||||||||

|

||||||

|

transformer_blocks.0.norm1.weight|null|||null|||null|||null|null|null||null|null|null|null|||||||||

|

||||||

|

transformer_blocks.0.norm2.bias|null|||null|||null|||null|null|null||null|null|null|null|||||||||

|

||||||

|

transformer_blocks.0.norm2.weight|null|||null|||null|||null|null|null||null|null|null|null|||||||||

|

||||||

|

transformer_blocks.0.norm3.bias|null|||null|||null|||null|null|null||null|null|null|null|||||||||

|

||||||

|

transformer_blocks.0.norm3.weight|null|||null|||null|||null|null|null||null|null|null|null|||||||||

|

||||||

|

conv.bias|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null||null|null||null|null||null|null|null

|

||||||

|

conv.weight|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null||null|null||null|null||null|null|null

|

||||||

|

0.bias||null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|

|

||||||

|

0.weight||null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|

|

||||||

|

2.bias|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|

|

||||||

|

2.weight|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|

|

||||||

|

time_embed.0.weight||null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|

|

||||||

|

time_embed.0.bias||null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|

|

||||||

|

time_embed.2.weight||null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|

|

||||||

|

time_embed.2.bias||null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|

|

||||||

|

|

@ -0,0 +1,118 @@

|

||||||

|

# Elemental Merge

|

||||||

|

- This is a block-by-block merge that goes beyond block-by-block merge.

|

||||||

|

|

||||||

|

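In a block-by-block merge, the merge ratio can be changed for each of the 25 blocks, but each block also consists of multiple elements, and in principle the ratio can be changed for each element. There are more than 600 elements, so it was doubtful whether this could be handled by hand, but it has been implemented anyway. Merging element by element from the start is not recommended. Use it as a final adjustment when a problem arises that block-by-block merging cannot solve.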

|

||||||

|

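The following image shows the result of changing the elements in the OUT05 layer. The leftmost is without merging, the second is all of the OUT05 layer (that is, a normal block-by-block merge), and the rest are element merges. As shown in the table below, attn2 and others contain several further elements.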

|

||||||

|

|

||||||

|

|

||||||

|

## Usage

|

||||||

|

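Note that elemental merging is effective for both normal and block-by-block merging, and because it is computed last, it overwrites values specified for block-by-block merging.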

|

||||||

|

|

||||||

|

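Enter the settings in the Elemental Merge field. Note that any text entered here is applied automatically. Each element is listed in the table below, but it is not necessary to enter the full name of each element.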

|

||||||

|

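You can check whether the effect is applied properly by enabling the "print change" checkbox. When it is enabled, the applied elements are displayed on the command prompt screen during the merge.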

|

||||||

|

Elements can be specified by partial match.

|

||||||

|

### Format

|

||||||

|

Blocks:Element:Ratio, Blocks:Element:Ratio,...

|

||||||

|

or

|

||||||

|

Blocks:Element:Ratio

|

||||||

|

Blocks:Element:Ratio

|

||||||

|

Blocks:Element:Ratio

|

||||||

|

|

||||||

|

Multiple specifications can be given by separating them with commas or newlines. Commas and newlines may be mixed.

|

||||||

|

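Blocks are specified in uppercase: BASE, IN00 through IN11, M00, and OUT00 through OUT11. If left blank, the setting applies to all blocks. Multiple blocks can be specified by separating them with spaces.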

|

||||||

|

Similarly, multiple elements can be specified by separating them with spaces.

|

||||||

|

Matching is partial, so for example, entering "attn" changes both attn1 and attn2, while "attn2" changes only attn2. To be more specific, enter "attn2.to_out" and so on.

|

||||||

|

|

||||||

|

OUT03 OUT04 OUT05:attn2 attn1.to_out:0.5

|

||||||

|

|

||||||

|

With this input, the ratio of elements containing attn2, and of attn1.to_out, in the OUT03, OUT04, and OUT05 layers becomes 0.5.

|

||||||

|

If the element field is left blank, all elements in the specified blocks change, and the effect is the same as a block-by-block merge.

|

||||||

|

If specifications overlap, the one entered later takes precedence.

|

||||||

|

|

||||||

|

OUT06:attn:0.5,OUT06:attn2.to_k:0.2

|

||||||

|

|

||||||

|

With this input, attn elements other than attn2.to_k in the OUT06 layer become 0.5, and only attn2.to_k becomes 0.2.

|

||||||

|

|

||||||

|

You can invert the scope of the effect by entering NOT first.

|

||||||

|

This can be set separately for blocks and for elements.

|

||||||

|

|

||||||

|

NOT OUT04:attn:1

|

||||||

|

|

||||||

|

With this input, a ratio of 1 is set for attn in all layers other than the OUT04 layer.

|

||||||

|

|

||||||

|

OUT05:NOT attn proj:0.2

|

||||||

|

|

||||||

|

With this input, everything in the OUT05 layer other than attn and proj becomes 0.2.

|

||||||

|

|

||||||

|

## XY plot

|

||||||

|

Several XY plots for elemental merge are available. Input examples can be found in sample.txt.

|

||||||

|

#### elemental

|

||||||

|

Creates an XY plot for multiple elemental merges. Separate the entries with blank lines.

|

||||||

|

The top image is the result of running sample1 from sample.txt.

|

||||||

|

![](https://github.com/hako-mikan/sd-webui-supermerger/blob/images/sample1.jpg)

|

||||||

|

#### pinpoint element

|

||||||

|

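Creates an XY plot by varying the value of specific elements. It does for elements what pinpoint Blocks does for blocks. Specify alpha on the opposite axis. Separate elements with a newline or a comma.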

|

||||||

|

The following image is the result of running sample3 from sample.txt.

|

||||||

|

|

||||||

|

|

||||||

|

#### effective elemental checker

|

||||||

|

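Outputs the influence of each element as a difference. Optionally, an animated gif and a csv file can also be output. The gif and csv files are created in a diff folder, inside a folder named after ModelA and ModelB, under the output folder. If a file name already exists, the file is saved under a different name, but this becomes confusing as the number of files grows, so renaming the diff folder to something appropriate is recommended.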

|

||||||

|

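Separate entries with a newline or a comma. Use alpha on the opposite axis and enter a single value. This is useful for seeing the effect of an element, but by not specifying an element you can also see the effect of a whole block, so that may be the more common use.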

|

||||||

|

The following image is the result of running sample5 from sample.txt.

|

||||||

|

|

||||||

|

|

||||||

|

### List of elements

|

||||||

|

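Basically, attn seems to be responsible for face and clothing information. The IN07, OUT03, OUT04, and OUT05 layers seem to have a particularly strong influence. Since the degree of influence often differs between blocks, changing the same element in multiple layers at the same time does not seem meaningful.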

|

||||||

|

No element exists where null is written.

|

||||||

|

||IN00|IN01|IN02|IN03|IN04|IN05|IN06|IN07|IN08|IN09|IN10|IN11|M00|M00|OUT00|OUT01|OUT02|OUT03|OUT04|OUT05|OUT06|OUT07|OUT08|OUT09|OUT10|OUT11

|

||||||

|

|-|-|-|-|-|-|-|-|-|-|-|-|-|-|-|-|-|-|-|-|-|-|-|-|-|-|-|

|

||||||

|

op.bias|null|null|null||null|null||null|null||null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null

|

||||||

|

op.weight|null|null|null||null|null||null|null||null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null

|

||||||

|

emb_layers.1.bias|null|||null|||null|||null|null|||||||||||||||

|

||||||

|

emb_layers.1.weight|null|||null|||null|||null|null|||||||||||||||

|

||||||

|

in_layers.0.bias|null|||null|||null|||null|null|||||||||||||||

|

||||||

|

in_layers.0.weight|null|||null|||null|||null|null|||||||||||||||

|

||||||

|

in_layers.2.bias|null|||null|||null|||null|null|||||||||||||||

|

||||||

|

in_layers.2.weight|null|||null|||null|||null|null|||||||||||||||

|

||||||

|

out_layers.0.bias|null|||null|||null|||null|null|||||||||||||||

|

||||||

|

out_layers.0.weight|null|||null|||null|||null|null|||||||||||||||

|

||||||

|

out_layers.3.bias|null|||null|||null|||null|null|||||||||||||||

|

||||||

|

out_layers.3.weight|null|||null|||null|||null|null|||||||||||||||

|

||||||

|

skip_connection.bias|null|||null||null|null|||null|null|null|null|null||||||||||||

|

||||||

|

skip_connection.weight|null|||null||null|null|||null|null|null|null|null||||||||||||

|

||||||

|

norm.bias|null|||null|||null|||null|null|null||null|null|null|null|||||||||

|

||||||

|

norm.weight|null|||null|||null|||null|null|null||null|null|null|null|||||||||

|

||||||

|

proj_in.bias|null|||null|||null|||null|null|null||null|null|null|null|||||||||

|

||||||

|

proj_in.weight|null|||null|||null|||null|null|null||null|null|null|null|||||||||

|

||||||

|

proj_out.bias|null|||null|||null|||null|null|null||null|null|null|null|||||||||

|

||||||

|

proj_out.weight|null|||null|||null|||null|null|null||null|null|null|null|||||||||

|

||||||

|

transformer_blocks.0.attn1.to_k.weight|null|||null|||null|||null|null|null||null|null|null|null|||||||||

|

||||||

|

transformer_blocks.0.attn1.to_out.0.bias|null|||null|||null|||null|null|null||null|null|null|null|||||||||

|

||||||

|

transformer_blocks.0.attn1.to_out.0.weight|null|||null|||null|||null|null|null||null|null|null|null|||||||||

|

||||||

|

transformer_blocks.0.attn1.to_q.weight|null|||null|||null|||null|null|null||null|null|null|null|||||||||

|

||||||

|

transformer_blocks.0.attn1.to_v.weight|null|||null|||null|||null|null|null||null|null|null|null|||||||||

|

||||||

|

transformer_blocks.0.attn2.to_k.weight|null|||null|||null|||null|null|null||null|null|null|null|||||||||

|

||||||

|

transformer_blocks.0.attn2.to_out.0.bias|null|||null|||null|||null|null|null||null|null|null|null|||||||||

|

||||||

|

transformer_blocks.0.attn2.to_out.0.weight|null|||null|||null|||null|null|null||null|null|null|null|||||||||

|

||||||

|

transformer_blocks.0.attn2.to_q.weight|null|||null|||null|||null|null|null||null|null|null|null|||||||||

|

||||||

|

transformer_blocks.0.attn2.to_v.weight|null|||null|||null|||null|null|null||null|null|null|null|||||||||

|

||||||

|

transformer_blocks.0.ff.net.0.proj.bias|null|||null|||null|||null|null|null||null|null|null|null|||||||||

|

||||||

|

transformer_blocks.0.ff.net.0.proj.weight|null|||null|||null|||null|null|null||null|null|null|null|||||||||

|

||||||

|

transformer_blocks.0.ff.net.2.bias|null|||null|||null|||null|null|null||null|null|null|null|||||||||

|

||||||

|

transformer_blocks.0.ff.net.2.weight|null|||null|||null|||null|null|null||null|null|null|null|||||||||

|

||||||

|

transformer_blocks.0.norm1.bias|null|||null|||null|||null|null|null||null|null|null|null|||||||||

|

||||||

|

transformer_blocks.0.norm1.weight|null|||null|||null|||null|null|null||null|null|null|null|||||||||

|

||||||

|

transformer_blocks.0.norm2.bias|null|||null|||null|||null|null|null||null|null|null|null|||||||||

|

||||||

|

transformer_blocks.0.norm2.weight|null|||null|||null|||null|null|null||null|null|null|null|||||||||

|

||||||

|

transformer_blocks.0.norm3.bias|null|||null|||null|||null|null|null||null|null|null|null|||||||||

|

||||||

|

transformer_blocks.0.norm3.weight|null|||null|||null|||null|null|null||null|null|null|null|||||||||

|

||||||

|

conv.bias|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null||null|null||null|null||null|null|null

|

||||||

|

conv.weight|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null||null|null||null|null||null|null|null

|

||||||

|

0.bias||null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|

|

||||||

|

0.weight||null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|

|

||||||

|

2.bias|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|

|

||||||

|

2.weight|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|

|

||||||

|

time_embed.0.weight||null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|

|

||||||

|

time_embed.0.bias||null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|

|

||||||

|

time_embed.2.weight||null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|

|

||||||

|

time_embed.2.bias||null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|null|

|

||||||

|

|

@ -0,0 +1,95 @@

|

||||||

|

examples of XY plot

|

||||||

|

***************************************************************

|

||||||

|

for elemental

|

||||||

|

Each value is separated by a blank line

|

||||||

|

Element merge values are comma or newline delimited

|

||||||

|

Commas and newlines can also be mixed

|

||||||

|

Inserting two blank lines at the beginning adds an entry with no elemental merge applied

**sample1*******************************************************


OUT05::1

OUT05:layers:1

OUT05:attn1:1

OUT05:attn2:1

OUT05:ff.net:1
**sample2*******************************************************


IN07:attn1:0.5,IN07:attn2:0.2
OUT03:NOT attn1.to_k.weight:0.5
OUT03:attn1.to_k.weight:0.3

OUT04:NOT ff.net:0.5
OUT04:attn1.to_k.weight:0.3
OUT04:attn1.to_out.0.weight:0.3

OUT05:NOT ff.net:0.5
OUT05:attn1.to_k.weight:0.3
OUT05:attn1.to_out.0.weight:0.3
***************************************************************
***************************************************************
for pinpoint element
Each value is separated by a comma or a newline
Do not enter a ratio
**sample3******************************************************
IN07:,IN07:layers,IN07:attn1,IN07:attn2,IN07:ff.net
**sample4******************************************************
OUT04:NOT attn2.to_q
OUT04:attn1.to_k.weight
OUT04:ff.net
OUT04:attn
***************************************************************
***************************************************************
for effective elemental checker

Examine the effect of each block
Output results can be split by inserting "|" partway through the list
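
As a rough sketch of how such an input could be divided (the function name `split_groups` is hypothetical, and treating an empty element as "the whole block" is an assumption based on the samples, not confirmed behavior of the extension):

```python
import re
from typing import List, Tuple


def split_groups(text: str) -> List[List[Tuple[str, str]]]:
    """Split checker input on "|" into groups of (block, element) selectors."""
    groups = []
    for part in text.split("|"):
        selectors = []
        for item in re.split(r"[,\n]", part):
            item = item.strip()
            if not item:
                continue
            # "IN00:" gives ("IN00", ""); "OUT01:attn1" gives ("OUT01", "attn1")
            block, _, element = item.partition(":")
            selectors.append((block, element))
        groups.append(selectors)
    return groups


groups = split_groups("IN00:,IN01:|OUT00:,OUT01:attn1")
print(groups)
```

Each inner list would then correspond to one separately output result.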

**sample5*************************************************************
IN00:,IN01:,IN02:,IN03:,IN04:,IN05:,IN06:,IN07:,IN08:,IN09:,IN10:,IN11:,M00:|OUT00:,OUT01:,OUT02:,OUT03:,OUT04:,OUT05:,OUT06:,OUT07:,OUT08:,OUT09:,OUT10:,OUT11:

Examine the effect of all elements in IN01
The lines below it correspond to IN02 and later blocks
**sample6*************************************************************
IN01:emb_layers.1.bias,IN01:emb_layers.1.weight,IN01:in_layers.0.bias,IN01:in_layers.0.weight,IN01:in_layers.2.bias,IN01:in_layers.2.weight,IN01:out_layers.0.bias,IN01:out_layers.0.weight,IN01:out_layers.3.bias,IN01:out_layers.3.weight,IN01:skip_connection.bias,IN01:skip_connection.weight,IN01:norm.bias,IN01:norm.weight,IN01:proj_in.bias,IN01:proj_in.weight,IN01:proj_out.bias,IN01:proj_out.weight,IN01:transformer_blocks.0.attn1.to_k.weight,IN01:transformer_blocks.0.attn1.to_out.0.bias,IN01:transformer_blocks.0.attn1.to_out.0.weight,IN01:transformer_blocks.0.attn1.to_q.weight,IN01:transformer_blocks.0.attn1.to_v.weight,IN01:transformer_blocks.0.attn2.to_k.weight,IN01:transformer_blocks.0.attn2.to_out.0.bias,IN01:transformer_blocks.0.attn2.to_out.0.weight,IN01:transformer_blocks.0.attn2.to_q.weight,IN01:transformer_blocks.0.attn2.to_v.weight,IN01:transformer_blocks.0.ff.net.0.proj.bias,IN01:transformer_blocks.0.ff.net.0.proj.weight,IN01:transformer_blocks.0.ff.net.2.bias,IN01:transformer_blocks.0.ff.net.2.weight,IN01:transformer_blocks.0.norm1.bias,IN01:transformer_blocks.0.norm1.weight,IN01:transformer_blocks.0.norm2.bias,IN01:transformer_blocks.0.norm2.weight,IN01:transformer_blocks.0.norm3.bias,IN01:transformer_blocks.0.norm3.weight

IN02:emb_layers.1.bias,IN02:emb_layers.1.weight,IN02:in_layers.0.bias,IN02:in_layers.0.weight,IN02:in_layers.2.bias,IN02:in_layers.2.weight,IN02:out_layers.0.bias,IN02:out_layers.0.weight,IN02:out_layers.3.bias,IN02:out_layers.3.weight,IN02:skip_connection.bias,IN02:skip_connection.weight,IN02:norm.bias,IN02:norm.weight,IN02:proj_in.bias,IN02:proj_in.weight,IN02:proj_out.bias,IN02:proj_out.weight,IN02:transformer_blocks.0.attn1.to_k.weight,IN02:transformer_blocks.0.attn1.to_out.0.bias,IN02:transformer_blocks.0.attn1.to_out.0.weight,IN02:transformer_blocks.0.attn1.to_q.weight,IN02:transformer_blocks.0.attn1.to_v.weight,IN02:transformer_blocks.0.attn2.to_k.weight,IN02:transformer_blocks.0.attn2.to_out.0.bias,IN02:transformer_blocks.0.attn2.to_out.0.weight,IN02:transformer_blocks.0.attn2.to_q.weight,IN02:transformer_blocks.0.attn2.to_v.weight,IN02:transformer_blocks.0.ff.net.0.proj.bias,IN02:transformer_blocks.0.ff.net.0.proj.weight,IN02:transformer_blocks.0.ff.net.2.bias,IN02:transformer_blocks.0.ff.net.2.weight,IN02:transformer_blocks.0.norm1.bias,IN02:transformer_blocks.0.norm1.weight,IN02:transformer_blocks.0.norm2.bias,IN02:transformer_blocks.0.norm2.weight,IN02:transformer_blocks.0.norm3.bias,IN02:transformer_blocks.0.norm3.weight

IN00:bias,IN00:weight,IN03:op.bias,IN03:op.weight,IN06:op.bias,IN06:op.weight,IN09:op.bias,IN09:op.weight

IN04:emb_layers.1.bias,IN04:emb_layers.1.weight,IN04:in_layers.0.bias,IN04:in_layers.0.weight,IN04:in_layers.2.bias,IN04:in_layers.2.weight,IN04:out_layers.0.bias,IN04:out_layers.0.weight,IN04:out_layers.3.bias,IN04:out_layers.3.weight,IN04:skip_connection.bias,IN04:skip_connection.weight,IN04:norm.bias,IN04:norm.weight,IN04:proj_in.bias,IN04:proj_in.weight,IN04:proj_out.bias,IN04:proj_out.weight,IN04:transformer_blocks.0.attn1.to_k.weight,IN04:transformer_blocks.0.attn1.to_out.0.bias,IN04:transformer_blocks.0.attn1.to_out.0.weight,IN04:transformer_blocks.0.attn1.to_q.weight,IN04:transformer_blocks.0.attn1.to_v.weight,IN04:transformer_blocks.0.attn2.to_k.weight,IN04:transformer_blocks.0.attn2.to_out.0.bias,IN04:transformer_blocks.0.attn2.to_out.0.weight,IN04:transformer_blocks.0.attn2.to_q.weight,IN04:transformer_blocks.0.attn2.to_v.weight,IN04:transformer_blocks.0.ff.net.0.proj.bias,IN04:transformer_blocks.0.ff.net.0.proj.weight,IN04:transformer_blocks.0.ff.net.2.bias,IN04:transformer_blocks.0.ff.net.2.weight,IN04:transformer_blocks.0.norm1.bias,IN04:transformer_blocks.0.norm1.weight,IN04:transformer_blocks.0.norm2.bias,IN04:transformer_blocks.0.norm2.weight,IN04:transformer_blocks.0.norm3.bias,IN04:transformer_blocks.0.norm3.weight

IN05:emb_layers.1.bias,IN05:emb_layers.1.weight,IN05:in_layers.0.bias,IN05:in_layers.0.weight,IN05:in_layers.2.bias,IN05:in_layers.2.weight,IN05:out_layers.0.bias,IN05:out_layers.0.weight,IN05:out_layers.3.bias,IN05:out_layers.3.weight,IN05:skip_connection.bias,IN05:skip_connection.weight,IN05:norm.bias,IN05:norm.weight,IN05:proj_in.bias,IN05:proj_in.weight,IN05:proj_out.bias,IN05:proj_out.weight,IN05:transformer_blocks.0.attn1.to_k.weight,IN05:transformer_blocks.0.attn1.to_out.0.bias,IN05:transformer_blocks.0.attn1.to_out.0.weight,IN05:transformer_blocks.0.attn1.to_q.weight,IN05:transformer_blocks.0.attn1.to_v.weight,IN05:transformer_blocks.0.attn2.to_k.weight,IN05:transformer_blocks.0.attn2.to_out.0.bias,IN05:transformer_blocks.0.attn2.to_out.0.weight,IN05:transformer_blocks.0.attn2.to_q.weight,IN05:transformer_blocks.0.attn2.to_v.weight,IN05:transformer_blocks.0.ff.net.0.proj.bias,IN05:transformer_blocks.0.ff.net.0.proj.weight,IN05:transformer_blocks.0.ff.net.2.bias,IN05:transformer_blocks.0.ff.net.2.weight,IN05:transformer_blocks.0.norm1.bias,IN05:transformer_blocks.0.norm1.weight,IN05:transformer_blocks.0.norm2.bias,IN05:transformer_blocks.0.norm2.weight,IN05:transformer_blocks.0.norm3.bias,IN05:transformer_blocks.0.norm3.weight

IN07:emb_layers.1.bias,IN07:emb_layers.1.weight,IN07:in_layers.0.bias,IN07:in_layers.0.weight,IN07:in_layers.2.bias,IN07:in_layers.2.weight,IN07:out_layers.0.bias,IN07:out_layers.0.weight,IN07:out_layers.3.bias,IN07:out_layers.3.weight,IN07:skip_connection.bias,IN07:skip_connection.weight,IN07:norm.bias,IN07:norm.weight,IN07:proj_in.bias,IN07:proj_in.weight,IN07:proj_out.bias,IN07:proj_out.weight,IN07:transformer_blocks.0.attn1.to_k.weight,IN07:transformer_blocks.0.attn1.to_out.0.bias,IN07:transformer_blocks.0.attn1.to_out.0.weight,IN07:transformer_blocks.0.attn1.to_q.weight,IN07:transformer_blocks.0.attn1.to_v.weight,IN07:transformer_blocks.0.attn2.to_k.weight,IN07:transformer_blocks.0.attn2.to_out.0.bias,IN07:transformer_blocks.0.attn2.to_out.0.weight,IN07:transformer_blocks.0.attn2.to_q.weight,IN07:transformer_blocks.0.attn2.to_v.weight,IN07:transformer_blocks.0.ff.net.0.proj.bias,IN07:transformer_blocks.0.ff.net.0.proj.weight,IN07:transformer_blocks.0.ff.net.2.bias,IN07:transformer_blocks.0.ff.net.2.weight,IN07:transformer_blocks.0.norm1.bias,IN07:transformer_blocks.0.norm1.weight,IN07:transformer_blocks.0.norm2.bias,IN07:transformer_blocks.0.norm2.weight,IN07:transformer_blocks.0.norm3.bias,IN07:transformer_blocks.0.norm3.weight

IN08:emb_layers.1.bias,IN08:emb_layers.1.weight,IN08:in_layers.0.bias,IN08:in_layers.0.weight,IN08:in_layers.2.bias,IN08:in_layers.2.weight,IN08:out_layers.0.bias,IN08:out_layers.0.weight,IN08:out_layers.3.bias,IN08:out_layers.3.weight,IN08:skip_connection.bias,IN08:skip_connection.weight,IN08:norm.bias,IN08:norm.weight,IN08:proj_in.bias,IN08:proj_in.weight,IN08:proj_out.bias,IN08:proj_out.weight,IN08:transformer_blocks.0.attn1.to_k.weight,IN08:transformer_blocks.0.attn1.to_out.0.bias,IN08:transformer_blocks.0.attn1.to_out.0.weight,IN08:transformer_blocks.0.attn1.to_q.weight,IN08:transformer_blocks.0.attn1.to_v.weight,IN08:transformer_blocks.0.attn2.to_k.weight,IN08:transformer_blocks.0.attn2.to_out.0.bias,IN08:transformer_blocks.0.attn2.to_out.0.weight,IN08:transformer_blocks.0.attn2.to_q.weight,IN08:transformer_blocks.0.attn2.to_v.weight,IN08:transformer_blocks.0.ff.net.0.proj.bias,IN08:transformer_blocks.0.ff.net.0.proj.weight,IN08:transformer_blocks.0.ff.net.2.bias,IN08:transformer_blocks.0.ff.net.2.weight,IN08:transformer_blocks.0.norm1.bias,IN08:transformer_blocks.0.norm1.weight,IN08:transformer_blocks.0.norm2.bias,IN08:transformer_blocks.0.norm2.weight,IN08:transformer_blocks.0.norm3.bias,IN08:transformer_blocks.0.norm3.weight

IN10:emb_layers.1.bias,IN10:emb_layers.1.weight,IN10:in_layers.0.bias,IN10:in_layers.0.weight,IN10:in_layers.2.bias,IN10:in_layers.2.weight,IN10:out_layers.0.bias,IN10:out_layers.0.weight,IN10:out_layers.3.bias,IN10:out_layers.3.weight,IN11:emb_layers.1.bias,IN11:emb_layers.1.weight,IN11:in_layers.0.bias,IN11:in_layers.0.weight,IN11:in_layers.2.bias,IN11:in_layers.2.weight,IN11:out_layers.0.bias,IN11:out_layers.0.weight,IN11:out_layers.3.bias,IN11:out_layers.3.weight

M00:0.emb_layers.1.bias,M00:0.emb_layers.1.weight,M00:0.in_layers.0.bias,M00:0.in_layers.0.weight,M00:0.in_layers.2.bias,M00:0.in_layers.2.weight,M00:0.out_layers.0.bias,M00:0.out_layers.0.weight,M00:0.out_layers.3.bias,M00:0.out_layers.3.weight,M00:1.norm.bias,M00:1.norm.weight,M00:1.proj_in.bias,M00:1.proj_in.weight,M00:1.proj_out.bias,M00:1.proj_out.weight,M00:1.transformer_blocks.0.attn1.to_k.weight,M00:1.transformer_blocks.0.attn1.to_out.0.bias,M00:1.transformer_blocks.0.attn1.to_out.0.weight,M00:1.transformer_blocks.0.attn1.to_q.weight,M00:1.transformer_blocks.0.attn1.to_v.weight,M00:1.transformer_blocks.0.attn2.to_k.weight,M00:1.transformer_blocks.0.attn2.to_out.0.bias,M00:1.transformer_blocks.0.attn2.to_out.0.weight,M00:1.transformer_blocks.0.attn2.to_q.weight,M00:1.transformer_blocks.0.attn2.to_v.weight,M00:1.transformer_blocks.0.ff.net.0.proj.bias,M00:1.transformer_blocks.0.ff.net.0.proj.weight,M00:1.transformer_blocks.0.ff.net.2.bias,M00:1.transformer_blocks.0.ff.net.2.weight,M00:1.transformer_blocks.0.norm1.bias,M00:1.transformer_blocks.0.norm1.weight,M00:1.transformer_blocks.0.norm2.bias,M00:1.transformer_blocks.0.norm2.weight,M00:1.transformer_blocks.0.norm3.bias,M00:1.transformer_blocks.0.norm3.weight,M00:2.emb_layers.1.bias,M00:2.emb_layers.1.weight,M00:2.in_layers.0.bias,M00:2.in_layers.0.weight,M00:2.in_layers.2.bias,M00:2.in_layers.2.weight,M00:2.out_layers.0.bias,M00:2.out_layers.0.weight,M00:2.out_layers.3.bias,M00:2.out_layers.3.weight

OUT00:emb_layers.1.bias,OUT00:emb_layers.1.weight,OUT00:in_layers.0.bias,OUT00:in_layers.0.weight,OUT00:in_layers.2.bias,OUT00:in_layers.2.weight,OUT00:out_layers.0.bias,OUT00:out_layers.0.weight,OUT00:out_layers.3.bias,OUT00:out_layers.3.weight,OUT00:skip_connection.bias,OUT00:skip_connection.weight,OUT01:emb_layers.1.bias,OUT01:emb_layers.1.weight,OUT01:in_layers.0.bias,OUT01:in_layers.0.weight,OUT01:in_layers.2.bias,OUT01:in_layers.2.weight,OUT01:out_layers.0.bias,OUT01:out_layers.0.weight,OUT01:out_layers.3.bias,OUT01:out_layers.3.weight,OUT01:skip_connection.bias,OUT01:skip_connection.weight,OUT02:emb_layers.1.bias,OUT02:emb_layers.1.weight,OUT02:in_layers.0.bias,OUT02:in_layers.0.weight,OUT02:in_layers.2.bias,OUT02:in_layers.2.weight,OUT02:out_layers.0.bias,OUT02:out_layers.0.weight,OUT02:out_layers.3.bias,OUT02:out_layers.3.weight,OUT02:skip_connection.bias,OUT02:skip_connection.weight,OUT02:conv.bias,OUT02:conv.weight

OUT03:emb_layers.1.bias,OUT03:emb_layers.1.weight,OUT03:in_layers.0.bias,OUT03:in_layers.0.weight,OUT03:in_layers.2.bias,OUT03:in_layers.2.weight,OUT03:out_layers.0.bias,OUT03:out_layers.0.weight,OUT03:out_layers.3.bias,OUT03:out_layers.3.weight,OUT03:skip_connection.bias,OUT03:skip_connection.weight,OUT03:norm.bias,OUT03:norm.weight,OUT03:proj_in.bias,OUT03:proj_in.weight,OUT03:proj_out.bias,OUT03:proj_out.weight,OUT03:transformer_blocks.0.attn1.to_k.weight,OUT03:transformer_blocks.0.attn1.to_out.0.bias,OUT03:transformer_blocks.0.attn1.to_out.0.weight,OUT03:transformer_blocks.0.attn1.to_q.weight,OUT03:transformer_blocks.0.attn1.to_v.weight,OUT03:transformer_blocks.0.attn2.to_k.weight,OUT03:transformer_blocks.0.attn2.to_out.0.bias,OUT03:transformer_blocks.0.attn2.to_out.0.weight,OUT03:transformer_blocks.0.attn2.to_q.weight,OUT03:transformer_blocks.0.attn2.to_v.weight,OUT03:transformer_blocks.0.ff.net.0.proj.bias,OUT03:transformer_blocks.0.ff.net.0.proj.weight,OUT03:transformer_blocks.0.ff.net.2.bias,OUT03:transformer_blocks.0.ff.net.2.weight,OUT03:transformer_blocks.0.norm1.bias,OUT03:transformer_blocks.0.norm1.weight,OUT03:transformer_blocks.0.norm2.bias,OUT03:transformer_blocks.0.norm2.weight,OUT03:transformer_blocks.0.norm3.bias,OUT03:transformer_blocks.0.norm3.weight

OUT04:emb_layers.1.bias,OUT04:emb_layers.1.weight,OUT04:in_layers.0.bias,OUT04:in_layers.0.weight,OUT04:in_layers.2.bias,OUT04:in_layers.2.weight,OUT04:out_layers.0.bias,OUT04:out_layers.0.weight,OUT04:out_layers.3.bias,OUT04:out_layers.3.weight,OUT04:skip_connection.bias,OUT04:skip_connection.weight,OUT04:norm.bias,OUT04:norm.weight,OUT04:proj_in.bias,OUT04:proj_in.weight,OUT04:proj_out.bias,OUT04:proj_out.weight,OUT04:transformer_blocks.0.attn1.to_k.weight,OUT04:transformer_blocks.0.attn1.to_out.0.bias,OUT04:transformer_blocks.0.attn1.to_out.0.weight,OUT04:transformer_blocks.0.attn1.to_q.weight,OUT04:transformer_blocks.0.attn1.to_v.weight,OUT04:transformer_blocks.0.attn2.to_k.weight,OUT04:transformer_blocks.0.attn2.to_out.0.bias,OUT04:transformer_blocks.0.attn2.to_out.0.weight,OUT04:transformer_blocks.0.attn2.to_q.weight,OUT04:transformer_blocks.0.attn2.to_v.weight,OUT04:transformer_blocks.0.ff.net.0.proj.bias,OUT04:transformer_blocks.0.ff.net.0.proj.weight,OUT04:transformer_blocks.0.ff.net.2.bias,OUT04:transformer_blocks.0.ff.net.2.weight,OUT04:transformer_blocks.0.norm1.bias,OUT04:transformer_blocks.0.norm1.weight,OUT04:transformer_blocks.0.norm2.bias,OUT04:transformer_blocks.0.norm2.weight,OUT04:transformer_blocks.0.norm3.bias,OUT04:transformer_blocks.0.norm3.weight

OUT05:emb_layers.1.bias,OUT05:emb_layers.1.weight,OUT05:in_layers.0.bias,OUT05:in_layers.0.weight,OUT05:in_layers.2.bias,OUT05:in_layers.2.weight,OUT05:out_layers.0.bias,OUT05:out_layers.0.weight,OUT05:out_layers.3.bias,OUT05:out_layers.3.weight,OUT05:skip_connection.bias,OUT05:skip_connection.weight,OUT05:norm.bias,OUT05:norm.weight,OUT05:proj_in.bias,OUT05:proj_in.weight,OUT05:proj_out.bias,OUT05:proj_out.weight,OUT05:transformer_blocks.0.attn1.to_k.weight,OUT05:transformer_blocks.0.attn1.to_out.0.bias,OUT05:transformer_blocks.0.attn1.to_out.0.weight,OUT05:transformer_blocks.0.attn1.to_q.weight,OUT05:transformer_blocks.0.attn1.to_v.weight,OUT05:transformer_blocks.0.attn2.to_k.weight,OUT05:transformer_blocks.0.attn2.to_out.0.bias,OUT05:transformer_blocks.0.attn2.to_out.0.weight,OUT05:transformer_blocks.0.attn2.to_q.weight,OUT05:transformer_blocks.0.attn2.to_v.weight,OUT05:transformer_blocks.0.ff.net.0.proj.bias,OUT05:transformer_blocks.0.ff.net.0.proj.weight,OUT05:transformer_blocks.0.ff.net.2.bias,OUT05:transformer_blocks.0.ff.net.2.weight,OUT05:transformer_blocks.0.norm1.bias,OUT05:transformer_blocks.0.norm1.weight,OUT05:transformer_blocks.0.norm2.bias,OUT05:transformer_blocks.0.norm2.weight,OUT05:transformer_blocks.0.norm3.bias,OUT05:transformer_blocks.0.norm3.weight,OUT05:conv.bias,OUT05:conv.weight

,OUT06:emb_layers.1.bias,OUT06:emb_layers.1.weight,OUT06:in_layers.0.bias,OUT06:in_layers.0.weight,OUT06:in_layers.2.bias,OUT06:in_layers.2.weight,OUT06:out_layers.0.bias,OUT06:out_layers.0.weight,OUT06:out_layers.3.bias,OUT06:out_layers.3.weight,OUT06:skip_connection.bias,OUT06:skip_connection.weight,OUT06:norm.bias,OUT06:norm.weight,OUT06:proj_in.bias,OUT06:proj_in.weight,OUT06:proj_out.bias,OUT06:proj_out.weight,OUT06:transformer_blocks.0.attn1.to_k.weight,OUT06:transformer_blocks.0.attn1.to_out.0.bias,OUT06:transformer_blocks.0.attn1.to_out.0.weight,OUT06:transformer_blocks.0.attn1.to_q.weight,OUT06:transformer_blocks.0.attn1.to_v.weight,OUT06:transformer_blocks.0.attn2.to_k.weight,OUT06:transformer_blocks.0.attn2.to_out.0.bias,OUT06:transformer_blocks.0.attn2.to_out.0.weight,OUT06:transformer_blocks.0.attn2.to_q.weight,OUT06:transformer_blocks.0.attn2.to_v.weight,OUT06:transformer_blocks.0.ff.net.0.proj.bias,OUT06:transformer_blocks.0.ff.net.0.proj.weight,OUT06:transformer_blocks.0.ff.net.2.bias,OUT06:transformer_blocks.0.ff.net.2.weight,OUT06:transformer_blocks.0.norm1.bias,OUT06:transformer_blocks.0.norm1.weight,OUT06:transformer_blocks.0.norm2.bias,OUT06:transformer_blocks.0.norm2.weight,OUT06:transformer_blocks.0.norm3.bias,OUT06:transformer_blocks.0.norm3.weight

OUT07:emb_layers.1.bias,OUT07:emb_layers.1.weight,OUT07:in_layers.0.bias,OUT07:in_layers.0.weight,OUT07:in_layers.2.bias,OUT07:in_layers.2.weight,OUT07:out_layers.0.bias,OUT07:out_layers.0.weight,OUT07:out_layers.3.bias,OUT07:out_layers.3.weight,OUT07:skip_connection.bias,OUT07:skip_connection.weight,OUT07:norm.bias,OUT07:norm.weight,OUT07:proj_in.bias,OUT07:proj_in.weight,OUT07:proj_out.bias,OUT07:proj_out.weight,OUT07:transformer_blocks.0.attn1.to_k.weight,OUT07:transformer_blocks.0.attn1.to_out.0.bias,OUT07:transformer_blocks.0.attn1.to_out.0.weight,OUT07:transformer_blocks.0.attn1.to_q.weight,OUT07:transformer_blocks.0.attn1.to_v.weight,OUT07:transformer_blocks.0.attn2.to_k.weight,OUT07:transformer_blocks.0.attn2.to_out.0.bias,OUT07:transformer_blocks.0.attn2.to_out.0.weight,OUT07:transformer_blocks.0.attn2.to_q.weight,OUT07:transformer_blocks.0.attn2.to_v.weight,OUT07:transformer_blocks.0.ff.net.0.proj.bias,OUT07:transformer_blocks.0.ff.net.0.proj.weight,OUT07:transformer_blocks.0.ff.net.2.bias,OUT07:transformer_blocks.0.ff.net.2.weight,OUT07:transformer_blocks.0.norm1.bias,OUT07:transformer_blocks.0.norm1.weight,OUT07:transformer_blocks.0.norm2.bias,OUT07:transformer_blocks.0.norm2.weight,OUT07:transformer_blocks.0.norm3.bias,OUT07:transformer_blocks.0.norm3.weight

,OUT08:emb_layers.1.bias,OUT08:emb_layers.1.weight,OUT08:in_layers.0.bias,OUT08:in_layers.0.weight,OUT08:in_layers.2.bias,OUT08:in_layers.2.weight,OUT08:out_layers.0.bias,OUT08:out_layers.0.weight,OUT08:out_layers.3.bias,OUT08:out_layers.3.weight,OUT08:skip_connection.bias,OUT08:skip_connection.weight,OUT08:norm.bias,OUT08:norm.weight,OUT08:proj_in.bias,OUT08:proj_in.weight,OUT08:proj_out.bias,OUT08:proj_out.weight,OUT08:transformer_blocks.0.attn1.to_k.weight,OUT08:transformer_blocks.0.attn1.to_out.0.bias,OUT08:transformer_blocks.0.attn1.to_out.0.weight,OUT08:transformer_blocks.0.attn1.to_q.weight,OUT08:transformer_blocks.0.attn1.to_v.weight,OUT08:transformer_blocks.0.attn2.to_k.weight,OUT08:transformer_blocks.0.attn2.to_out.0.bias,OUT08:transformer_blocks.0.attn2.to_out.0.weight,OUT08:transformer_blocks.0.attn2.to_q.weight,OUT08:transformer_blocks.0.attn2.to_v.weight,OUT08:transformer_blocks.0.ff.net.0.proj.bias,OUT08:transformer_blocks.0.ff.net.0.proj.weight,OUT08:transformer_blocks.0.ff.net.2.bias,OUT08:transformer_blocks.0.ff.net.2.weight,OUT08:transformer_blocks.0.norm1.bias,OUT08:transformer_blocks.0.norm1.weight,OUT08:transformer_blocks.0.norm2.bias,OUT08:transformer_blocks.0.norm2.weight,OUT08:transformer_blocks.0.norm3.bias,OUT08:transformer_blocks.0.norm3.weight,OUT08:conv.bias,OUT08:conv.weight

OUT09:emb_layers.1.bias,OUT09:emb_layers.1.weight,OUT09:in_layers.0.bias,OUT09:in_layers.0.weight,OUT09:in_layers.2.bias,OUT09:in_layers.2.weight,OUT09:out_layers.0.bias,OUT09:out_layers.0.weight,OUT09:out_layers.3.bias,OUT09:out_layers.3.weight,OUT09:skip_connection.bias,OUT09:skip_connection.weight,OUT09:norm.bias,OUT09:norm.weight,OUT09:proj_in.bias,OUT09:proj_in.weight,OUT09:proj_out.bias,OUT09:proj_out.weight,OUT09:transformer_blocks.0.attn1.to_k.weight,OUT09:transformer_blocks.0.attn1.to_out.0.bias,OUT09:transformer_blocks.0.attn1.to_out.0.weight,OUT09:transformer_blocks.0.attn1.to_q.weight,OUT09:transformer_blocks.0.attn1.to_v.weight,OUT09:transformer_blocks.0.attn2.to_k.weight,OUT09:transformer_blocks.0.attn2.to_out.0.bias,OUT09:transformer_blocks.0.attn2.to_out.0.weight,OUT09:transformer_blocks.0.attn2.to_q.weight,OUT09:transformer_blocks.0.attn2.to_v.weight,OUT09:transformer_blocks.0.ff.net.0.proj.bias,OUT09:transformer_blocks.0.ff.net.0.proj.weight,OUT09:transformer_blocks.0.ff.net.2.bias,OUT09:transformer_blocks.0.ff.net.2.weight,OUT09:transformer_blocks.0.norm1.bias,OUT09:transformer_blocks.0.norm1.weight,OUT09:transformer_blocks.0.norm2.bias,OUT09:transformer_blocks.0.norm2.weight,OUT09:transformer_blocks.0.norm3.bias,OUT09:transformer_blocks.0.norm3.weight

OUT10:emb_layers.1.bias,OUT10:emb_layers.1.weight,OUT10:in_layers.0.bias,OUT10:in_layers.0.weight,OUT10:in_layers.2.bias,OUT10:in_layers.2.weight,OUT10:out_layers.0.bias,OUT10:out_layers.0.weight,OUT10:out_layers.3.bias,OUT10:out_layers.3.weight,OUT10:skip_connection.bias,OUT10:skip_connection.weight,OUT10:norm.bias,OUT10:norm.weight,OUT10:proj_in.bias,OUT10:proj_in.weight,OUT10:proj_out.bias,OUT10:proj_out.weight,OUT10:transformer_blocks.0.attn1.to_k.weight,OUT10:transformer_blocks.0.attn1.to_out.0.bias,OUT10:transformer_blocks.0.attn1.to_out.0.weight,OUT10:transformer_blocks.0.attn1.to_q.weight,OUT10:transformer_blocks.0.attn1.to_v.weight,OUT10:transformer_blocks.0.attn2.to_k.weight,OUT10:transformer_blocks.0.attn2.to_out.0.bias,OUT10:transformer_blocks.0.attn2.to_out.0.weight,OUT10:transformer_blocks.0.attn2.to_q.weight,OUT10:transformer_blocks.0.attn2.to_v.weight,OUT10:transformer_blocks.0.ff.net.0.proj.bias,OUT10:transformer_blocks.0.ff.net.0.proj.weight,OUT10:transformer_blocks.0.ff.net.2.bias,OUT10:transformer_blocks.0.ff.net.2.weight,OUT10:transformer_blocks.0.norm1.bias,OUT10:transformer_blocks.0.norm1.weight,OUT10:transformer_blocks.0.norm2.bias,OUT10:transformer_blocks.0.norm2.weight,OUT10:transformer_blocks.0.norm3.bias,OUT10:transformer_blocks.0.norm3.weight

OUT11:emb_layers.1.bias,OUT11:emb_layers.1.weight,OUT11:in_layers.0.bias,OUT11:in_layers.0.weight,OUT11:in_layers.2.bias,OUT11:in_layers.2.weight,OUT11:out_layers.0.bias,OUT11:out_layers.0.weight,OUT11:out_layers.3.bias,OUT11:out_layers.3.weight,OUT11:skip_connection.bias,OUT11:skip_connection.weight,OUT11:norm.bias,OUT11:norm.weight,OUT11:proj_in.bias,OUT11:proj_in.weight,OUT11:proj_out.bias,OUT11:proj_out.weight,OUT11:transformer_blocks.0.attn1.to_k.weight,OUT11:transformer_blocks.0.attn1.to_out.0.bias,OUT11:transformer_blocks.0.attn1.to_out.0.weight,OUT11:transformer_blocks.0.attn1.to_q.weight,OUT11:transformer_blocks.0.attn1.to_v.weight,OUT11:transformer_blocks.0.attn2.to_k.weight,OUT11:transformer_blocks.0.attn2.to_out.0.bias,OUT11:transformer_blocks.0.attn2.to_out.0.weight,OUT11:transformer_blocks.0.attn2.to_q.weight,OUT11:transformer_blocks.0.attn2.to_v.weight,OUT11:transformer_blocks.0.ff.net.0.proj.bias,OUT11:transformer_blocks.0.ff.net.0.proj.weight,OUT11:transformer_blocks.0.ff.net.2.bias,OUT11:transformer_blocks.0.ff.net.2.weight,OUT11:transformer_blocks.0.norm1.bias,OUT11:transformer_blocks.0.norm1.weight,OUT11:transformer_blocks.0.norm2.bias,OUT11:transformer_blocks.0.norm2.weight,OUT11:transformer_blocks.0.norm3.bias,OUT11:transformer_blocks.0.norm3.weight,OUT11:0.bias,OUT11:0.weight,OUT11:2.bias,OUT11:2.weight
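
Long per-block lists like the ones in sample6 do not have to be typed by hand. A minimal sketch, assuming you already have the element names for a block (the helper name `selectors_for_block` and the short `names` list are hypothetical and only for illustration):

```python
from typing import List


def selectors_for_block(block: str, element_names: List[str]) -> str:
    """Join element names into a comma-separated "BLOCK:element" list."""
    return ",".join(f"{block}:{name}" for name in element_names)


# A shortened, illustrative list of element names
names = ["emb_layers.1.bias", "emb_layers.1.weight", "in_layers.0.bias"]
line = selectors_for_block("IN01", names)
print(line)
# IN01:emb_layers.1.bias,IN01:emb_layers.1.weight,IN01:in_layers.0.bias
```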
