Initialisation

parent

83695a793b

commit

4e688d3068

|

|

@ -0,0 +1,16 @@

|

|||

.idea/*

|

||||

demo/*

|

||||

**/__pycache__/

|

||||

scripts/wav2lip/checkpoints/wav2lip_gan.pth

|

||||

scripts/wav2lip/checkpoints/wav2lip.pth

|

||||

scripts/wav2lip/checkpoints/visual_quality_disc_.pth

|

||||

scripts/wav2lip/checkpoints/lipsync_expert_.pth

|

||||

scripts/wav2lip/face_detection/detection/sfd/s3fd.pth

|

||||

scripts/wav2lip/predicator/shape_predictor_68_face_landmarks.dat

|

||||

scripts/wav2lip/output/debug/*.png

|

||||

scripts/wav2lip/output/final/*.png

|

||||

scripts/wav2lip/output/images/*.png

|

||||

scripts/wav2lip/output/masks/*.png

|

||||

scripts/wav2lip/output/*.mp4

|

||||

scripts/wav2lip/output/*.aac

|

||||

scripts/wav2lip/results/result_voice.mp4

|

||||

|

|

@ -0,0 +1,3 @@

|

|||

# Default ignored files

|

||||

/shelf/

|

||||

/workspace.xml

|

||||

|

|

@ -0,0 +1,21 @@

|

|||

<component name="InspectionProjectProfileManager">

|

||||

<profile version="1.0">

|

||||

<option name="myName" value="Project Default" />

|

||||

<inspection_tool class="PyCompatibilityInspection" enabled="true" level="WARNING" enabled_by_default="true">

|

||||

<option name="ourVersions">

|

||||

<value>

|

||||

<list size="1">

|

||||

<item index="0" class="java.lang.String" itemvalue="3.9" />

|

||||

</list>

|

||||

</value>

|

||||

</option>

|

||||

</inspection_tool>

|

||||

<inspection_tool class="PyPep8NamingInspection" enabled="true" level="WEAK WARNING" enabled_by_default="true">

|

||||

<option name="ignoredErrors">

|

||||

<list>

|

||||

<option value="N806" />

|

||||

</list>

|

||||

</option>

|

||||

</inspection_tool>

|

||||

</profile>

|

||||

</component>

|

||||

|

|

@ -0,0 +1,6 @@

|

|||

<component name="InspectionProjectProfileManager">

|

||||

<settings>

|

||||

<option name="USE_PROJECT_PROFILE" value="false" />

|

||||

<version value="1.0" />

|

||||

</settings>

|

||||

</component>

|

||||

|

|

@ -0,0 +1,8 @@

|

|||

<?xml version="1.0" encoding="UTF-8"?>

|

||||

<project version="4">

|

||||

<component name="ProjectModuleManager">

|

||||

<modules>

|

||||

<module fileurl="file://$PROJECT_DIR$/.idea/sd-wav2lip-uhq.iml" filepath="$PROJECT_DIR$/.idea/sd-wav2lip-uhq.iml" />

|

||||

</modules>

|

||||

</component>

|

||||

</project>

|

||||

|

|

@ -0,0 +1,12 @@

|

|||

<?xml version="1.0" encoding="UTF-8"?>

|

||||

<module type="PYTHON_MODULE" version="4">

|

||||

<component name="NewModuleRootManager">

|

||||

<content url="file://$MODULE_DIR$" />

|

||||

<orderEntry type="inheritedJdk" />

|

||||

<orderEntry type="sourceFolder" forTests="false" />

|

||||

</component>

|

||||

<component name="PyDocumentationSettings">

|

||||

<option name="format" value="PLAIN" />

|

||||

<option name="myDocStringFormat" value="Plain" />

|

||||

</component>

|

||||

</module>

|

||||

|

|

@ -0,0 +1,6 @@

|

|||

<?xml version="1.0" encoding="UTF-8"?>

|

||||

<project version="4">

|

||||

<component name="VcsDirectoryMappings">

|

||||

<mapping directory="$PROJECT_DIR$" vcs="Git" />

|

||||

</component>

|

||||

</project>

|

||||

|

|

@ -0,0 +1,29 @@

|

|||

# Contributing to sd-wav2lip-uhq

|

||||

|

||||

Thank you for your interest in contributing to sd-wav2lip-uhq! We appreciate your effort and to help us incorporate your contribution in the best way possible, please follow the following contribution guidelines.

|

||||

|

||||

## Reporting Bugs

|

||||

|

||||

If you find a bug in the project, we encourage you to report it. Here's how:

|

||||

|

||||

1. First, check the [existing Issues](url_of_issues) to see if the issue has already been reported. If it has, please add a comment to the existing issue rather than creating a new one.

|

||||

2. If you can't find an existing issue that matches your bug, create a new issue. Make sure to include as many details as possible so we can understand and reproduce the problem.

|

||||

|

||||

## Proposing Changes

|

||||

|

||||

We welcome code contributions from the community. Here's how to propose changes:

|

||||

|

||||

1. Fork this repository to your own GitHub account.

|

||||

2. Create a new branch on your fork for your changes.

|

||||

3. Make your changes in this branch.

|

||||

4. When you are ready, submit a pull request to the `main` branch of this repository.

|

||||

|

||||

Please note that we use the GitHub Flow workflow, so all pull requests should be made to the `main` branch.

|

||||

|

||||

Before submitting a pull request, please make sure your code adheres to the project's coding conventions and it has passed all tests. If you are adding features, please also add appropriate tests.

|

||||

|

||||

## Contact

|

||||

|

||||

If you have any questions or need help, please ping the developer via email at numzzz@hotmail.com to make sure your addition will fit well into such a large project and to get help if needed.

|

||||

|

||||

Thank you again for your contribution!

|

||||

|

|

@ -0,0 +1,202 @@

|

|||

|

||||

Apache License

|

||||

Version 2.0, January 2004

|

||||

http://www.apache.org/licenses/

|

||||

|

||||

TERMS AND CONDITIONS FOR USE, REPRODUCTION, AND DISTRIBUTION

|

||||

|

||||

1. Definitions.

|

||||

|

||||

"License" shall mean the terms and conditions for use, reproduction,

|

||||

and distribution as defined by Sections 1 through 9 of this document.

|

||||

|

||||

"Licensor" shall mean the copyright owner or entity authorized by

|

||||

the copyright owner that is granting the License.

|

||||

|

||||

"Legal Entity" shall mean the union of the acting entity and all

|

||||

other entities that control, are controlled by, or are under common

|

||||

control with that entity. For the purposes of this definition,

|

||||

"control" means (i) the power, direct or indirect, to cause the

|

||||

direction or management of such entity, whether by contract or

|

||||

otherwise, or (ii) ownership of fifty percent (50%) or more of the

|

||||

outstanding shares, or (iii) beneficial ownership of such entity.

|

||||

|

||||

"You" (or "Your") shall mean an individual or Legal Entity

|

||||

exercising permissions granted by this License.

|

||||

|

||||

"Source" form shall mean the preferred form for making modifications,

|

||||

including but not limited to software source code, documentation

|

||||

source, and configuration files.

|

||||

|

||||

"Object" form shall mean any form resulting from mechanical

|

||||

transformation or translation of a Source form, including but

|

||||

not limited to compiled object code, generated documentation,

|

||||

and conversions to other media types.

|

||||

|

||||

"Work" shall mean the work of authorship, whether in Source or

|

||||

Object form, made available under the License, as indicated by a

|

||||

copyright notice that is included in or attached to the work

|

||||

(an example is provided in the Appendix below).

|

||||

|

||||

"Derivative Works" shall mean any work, whether in Source or Object

|

||||

form, that is based on (or derived from) the Work and for which the

|

||||

editorial revisions, annotations, elaborations, or other modifications

|

||||

represent, as a whole, an original work of authorship. For the purposes

|

||||

of this License, Derivative Works shall not include works that remain

|

||||

separable from, or merely link (or bind by name) to the interfaces of,

|

||||

the Work and Derivative Works thereof.

|

||||

|

||||

"Contribution" shall mean any work of authorship, including

|

||||

the original version of the Work and any modifications or additions

|

||||

to that Work or Derivative Works thereof, that is intentionally

|

||||

submitted to Licensor for inclusion in the Work by the copyright owner

|

||||

or by an individual or Legal Entity authorized to submit on behalf of

|

||||

the copyright owner. For the purposes of this definition, "submitted"

|

||||

means any form of electronic, verbal, or written communication sent

|

||||

to the Licensor or its representatives, including but not limited to

|

||||

communication on electronic mailing lists, source code control systems,

|

||||

and issue tracking systems that are managed by, or on behalf of, the

|

||||

Licensor for the purpose of discussing and improving the Work, but

|

||||

excluding communication that is conspicuously marked or otherwise

|

||||

designated in writing by the copyright owner as "Not a Contribution."

|

||||

|

||||

"Contributor" shall mean Licensor and any individual or Legal Entity

|

||||

on behalf of whom a Contribution has been received by Licensor and

|

||||

subsequently incorporated within the Work.

|

||||

|

||||

2. Grant of Copyright License. Subject to the terms and conditions of

|

||||

this License, each Contributor hereby grants to You a perpetual,

|

||||

worldwide, non-exclusive, no-charge, royalty-free, irrevocable

|

||||

copyright license to reproduce, prepare Derivative Works of,

|

||||

publicly display, publicly perform, sublicense, and distribute the

|

||||

Work and such Derivative Works in Source or Object form.

|

||||

|

||||

3. Grant of Patent License. Subject to the terms and conditions of

|

||||

this License, each Contributor hereby grants to You a perpetual,

|

||||

worldwide, non-exclusive, no-charge, royalty-free, irrevocable

|

||||

(except as stated in this section) patent license to make, have made,

|

||||

use, offer to sell, sell, import, and otherwise transfer the Work,

|

||||

where such license applies only to those patent claims licensable

|

||||

by such Contributor that are necessarily infringed by their

|

||||

Contribution(s) alone or by combination of their Contribution(s)

|

||||

with the Work to which such Contribution(s) was submitted. If You

|

||||

institute patent litigation against any entity (including a

|

||||

cross-claim or counterclaim in a lawsuit) alleging that the Work

|

||||

or a Contribution incorporated within the Work constitutes direct

|

||||

or contributory patent infringement, then any patent licenses

|

||||

granted to You under this License for that Work shall terminate

|

||||

as of the date such litigation is filed.

|

||||

|

||||

4. Redistribution. You may reproduce and distribute copies of the

|

||||

Work or Derivative Works thereof in any medium, with or without

|

||||

modifications, and in Source or Object form, provided that You

|

||||

meet the following conditions:

|

||||

|

||||

(a) You must give any other recipients of the Work or

|

||||

Derivative Works a copy of this License; and

|

||||

|

||||

(b) You must cause any modified files to carry prominent notices

|

||||

stating that You changed the files; and

|

||||

|

||||

(c) You must retain, in the Source form of any Derivative Works

|

||||

that You distribute, all copyright, patent, trademark, and

|

||||

attribution notices from the Source form of the Work,

|

||||

excluding those notices that do not pertain to any part of

|

||||

the Derivative Works; and

|

||||

|

||||

(d) If the Work includes a "NOTICE" text file as part of its

|

||||

distribution, then any Derivative Works that You distribute must

|

||||

include a readable copy of the attribution notices contained

|

||||

within such NOTICE file, excluding those notices that do not

|

||||

pertain to any part of the Derivative Works, in at least one

|

||||

of the following places: within a NOTICE text file distributed

|

||||

as part of the Derivative Works; within the Source form or

|

||||

documentation, if provided along with the Derivative Works; or,

|

||||

within a display generated by the Derivative Works, if and

|

||||

wherever such third-party notices normally appear. The contents

|

||||

of the NOTICE file are for informational purposes only and

|

||||

do not modify the License. You may add Your own attribution

|

||||

notices within Derivative Works that You distribute, alongside

|

||||

or as an addendum to the NOTICE text from the Work, provided

|

||||

that such additional attribution notices cannot be construed

|

||||

as modifying the License.

|

||||

|

||||

You may add Your own copyright statement to Your modifications and

|

||||

may provide additional or different license terms and conditions

|

||||

for use, reproduction, or distribution of Your modifications, or

|

||||

for any such Derivative Works as a whole, provided Your use,

|

||||

reproduction, and distribution of the Work otherwise complies with

|

||||

the conditions stated in this License.

|

||||

|

||||

5. Submission of Contributions. Unless You explicitly state otherwise,

|

||||

any Contribution intentionally submitted for inclusion in the Work

|

||||

by You to the Licensor shall be under the terms and conditions of

|

||||

this License, without any additional terms or conditions.

|

||||

Notwithstanding the above, nothing herein shall supersede or modify

|

||||

the terms of any separate license agreement you may have executed

|

||||

with Licensor regarding such Contributions.

|

||||

|

||||

6. Trademarks. This License does not grant permission to use the trade

|

||||

names, trademarks, service marks, or product names of the Licensor,

|

||||

except as required for reasonable and customary use in describing the

|

||||

origin of the Work and reproducing the content of the NOTICE file.

|

||||

|

||||

7. Disclaimer of Warranty. Unless required by applicable law or

|

||||

agreed to in writing, Licensor provides the Work (and each

|

||||

Contributor provides its Contributions) on an "AS IS" BASIS,

|

||||

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or

|

||||

implied, including, without limitation, any warranties or conditions

|

||||

of TITLE, NON-INFRINGEMENT, MERCHANTABILITY, or FITNESS FOR A

|

||||

PARTICULAR PURPOSE. You are solely responsible for determining the

|

||||

appropriateness of using or redistributing the Work and assume any

|

||||

risks associated with Your exercise of permissions under this License.

|

||||

|

||||

8. Limitation of Liability. In no event and under no legal theory,

|

||||

whether in tort (including negligence), contract, or otherwise,

|

||||

unless required by applicable law (such as deliberate and grossly

|

||||

negligent acts) or agreed to in writing, shall any Contributor be

|

||||

liable to You for damages, including any direct, indirect, special,

|

||||

incidental, or consequential damages of any character arising as a

|

||||

result of this License or out of the use or inability to use the

|

||||

Work (including but not limited to damages for loss of goodwill,

|

||||

work stoppage, computer failure or malfunction, or any and all

|

||||

other commercial damages or losses), even if such Contributor

|

||||

has been advised of the possibility of such damages.

|

||||

|

||||

9. Accepting Warranty or Additional Liability. While redistributing

|

||||

the Work or Derivative Works thereof, You may choose to offer,

|

||||

and charge a fee for, acceptance of support, warranty, indemnity,

|

||||

or other liability obligations and/or rights consistent with this

|

||||

License. However, in accepting such obligations, You may act only

|

||||

on Your own behalf and on Your sole responsibility, not on behalf

|

||||

of any other Contributor, and only if You agree to indemnify,

|

||||

defend, and hold each Contributor harmless for any liability

|

||||

incurred by, or claims asserted against, such Contributor by reason

|

||||

of your accepting any such warranty or additional liability.

|

||||

|

||||

END OF TERMS AND CONDITIONS

|

||||

|

||||

APPENDIX: How to apply the Apache License to your work.

|

||||

|

||||

To apply the Apache License to your work, attach the following

|

||||

boilerplate notice, with the fields enclosed by brackets "[]"

|

||||

replaced with your own identifying information. (Don't include

|

||||

the brackets!) The text should be enclosed in the appropriate

|

||||

comment syntax for the file format. We also recommend that a

|

||||

file or class name and description of purpose be included on the

|

||||

same "printed page" as the copyright notice for easier

|

||||

identification within third-party archives.

|

||||

|

||||

Copyright 2023 NumZ

|

||||

|

||||

Licensed under the Apache License, Version 2.0 (the "License");

|

||||

you may not use this file except in compliance with the License.

|

||||

You may obtain a copy of the License at

|

||||

|

||||

http://www.apache.org/licenses/LICENSE-2.0

|

||||

|

||||

Unless required by applicable law or agreed to in writing, software

|

||||

distributed under the License is distributed on an "AS IS" BASIS,

|

||||

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

|

||||

See the License for the specific language governing permissions and

|

||||

limitations under the License.

|

||||

81

README.md

81

README.md

|

|

@ -1,2 +1,79 @@

|

|||

# sd-wav2lip-uhq

|

||||

Wav2Lip UHQ extension for Automatic1111

|

||||

# Wav2Lip UHQ Improvement extension for Stable diffusion webui automatic1111

|

||||

|

||||

|

||||

|

||||

|

||||

Result video can be find here : https://www.youtube.com/watch?v=-3WLUxz6XKM

|

||||

|

||||

https://user-images.githubusercontent.com/800903/258114796-c3962b79-b07e-4399-9046-665b9090ef6a.mp4

|

||||

|

||||

## Description

|

||||

This repository contains an extension for Automatic1111 designed to enhance videos generated by the [Wav2Lip tool](https://github.com/Rudrabha/Wav2Lip) with stable diffusion.

|

||||

It improves the quality of the lip-sync videos by applying specific post-processing techniques with Stable diffusion.

|

||||

|

||||

|

||||

|

||||

## Requirements

|

||||

- latest version of Stable diffusion webui automatic1111

|

||||

- FFmpeg

|

||||

|

||||

1. You can install Stable Diffusion Webui by following the instructions on the [Stable Diffusion Webui](https://github.com/AUTOMATIC1111/stable-diffusion-webui) repository.

|

||||

2. FFmpeg : download it from the [official FFmpeg site](https://ffmpeg.org/download.html). Follow the instructions appropriate for your operating system, note ffmpeg have to be accessible from the command line.

|

||||

|

||||

## Installation

|

||||

|

||||

1. Launch automatic1111

|

||||

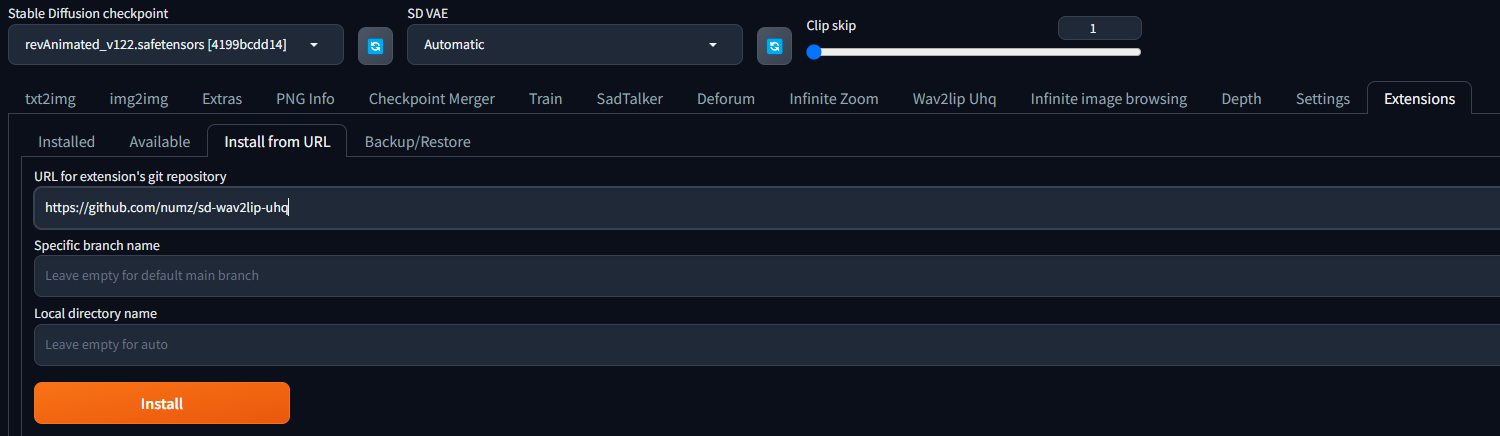

2. go to extensions tab enter the following url in the "Install from URL" field and click "Install":

|

||||

|

||||

|

||||

|

||||

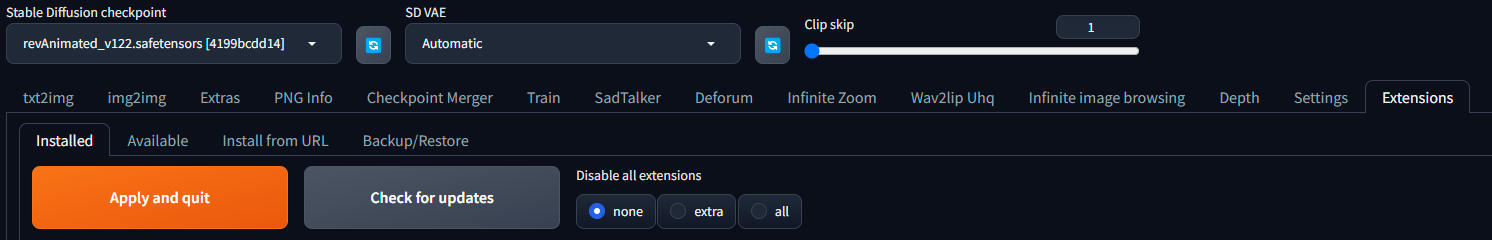

3. go to Installed Tab in extensions tab and click "Apply and quit"

|

||||

|

||||

|

||||

|

||||

5. Restart automatic1111

|

||||

|

||||

6. 🔥 Getting the weights IMPORTANT :

|

||||

|

||||

| Model | Description | Link to the model | install folder |

|

||||

|:-------------------:|:----------------------------------------------------------------------------------:| :---------------: |:------------------------------------------------------------------------------------------:|

|

||||

| Wav2Lip | Highly accurate lip-sync | [Link](https://iiitaphyd-my.sharepoint.com/:u:/g/personal/radrabha_m_research_iiit_ac_in/Eb3LEzbfuKlJiR600lQWRxgBIY27JZg80f7V9jtMfbNDaQ?e=TBFBVW) | extensions\sd-wav2lip-uhq\wav2lip\scripts\checkpoints\ |

|

||||

| Wav2Lip + GAN | Slightly inferior lip-sync, but better visual quality | [Link](https://iiitaphyd-my.sharepoint.com/:u:/g/personal/radrabha_m_research_iiit_ac_in/EdjI7bZlgApMqsVoEUUXpLsBxqXbn5z8VTmoxp55YNDcIA?e=n9ljGW) | extensions\sd-wav2lip-uhq\wav2lip\scripts\checkpoints\ |

|

||||

| s3fd | Face Detection pre trained model | [Link](ttps://www.adrianbulat.com/downloads/python-fan/s3fd-619a316812.pth) | extensions\sd-wav2lip-uhq\scripts\wav2lip\face_detection\detection\sfd\s3fd.pth |

|

||||

| s3fd | Face Detection pre trained model (alternate link) | [Link](https://iiitaphyd-my.sharepoint.com/:u:/g/personal/prajwal_k_research_iiit_ac_in/EZsy6qWuivtDnANIG73iHjIBjMSoojcIV0NULXV-yiuiIg?e=qTasa8) | extensions\sd-wav2lip-uhq\scripts\wav2lip\face_detection\detection\sfd\s3fd.pth |

|

||||

| landmark predicator | Dlib 68 point face landmark prediction | [Link](https://huggingface.co/spaces/asdasdasdasd/Face-forgery-detection/resolve/ccfc24642e0210d4d885bc7b3dbc9a68ed948ad6/shape_predictor_68_face_landmarks.dat) | extensions\sd-wav2lip-uhq\scripts\wav2lip\predicator\shape_predictor_68_face_landmarks.dat |

|

||||

| landmark predicator | Dlib 68 point face landmark prediction (alternate link click on the download icon) | [Link](https://github.com/italojs/facial-landmarks-recognition/blob/master/shape_predictor_68_face_landmarks.dat) | extensions\sd-wav2lip-uhq\scripts\wav2lip\predicator\shape_predictor_68_face_landmarks.dat |

|

||||

|

||||

|

||||

## Usage

|

||||

1. Choose a video or an image

|

||||

2. Choose an audio file with speech

|

||||

3. choose a checkpoint (see table above)

|

||||

4. **Padding** is use by wav2lip to add a black border around the mouth, it's usefull to avoid the mouth to be cropped by the face detection. you can change the padding value to your needs but default value give good results.

|

||||

5. **No Smooth**: if checked, the mouth will not be smoothed, it can be usefull if you want to keep the original mouth shape.

|

||||

6. **Resize Factor**: resize factor for the video, default value is 1.0, you can change it to your needs. Usefull if video size is too big.

|

||||

7. Choose a good Stable diffusion checkpoint, like [delibarate_v2](https://civitai.com/models/4823/deliberate) or [revAnimated_v122](https://civitai.com/models/7371) (SDXL models seems doesn't work).

|

||||

8. Click on "Generate" button

|

||||

|

||||

## Behind the scenes

|

||||

|

||||

This extension operates in several stages to improve the quality of Wav2Lip-generated videos:

|

||||

|

||||

1. **Generate a Wav2lip video**: The script first generates a low-quality Wav2Lip video using the input video and audio.

|

||||

2. **Mask Creation**: The script creates a mask around the mouth and try to keep other face motion like cheeks and chin.

|

||||

3. **Video Quality Enhancement**: It takes the low-quality Wav2Lip video and overlays the low-quality mouth onto the high-quality original video.

|

||||

4. **Img2Img**: The script then sends the original image with the low-quality mouth and the mouth mask into Img2Img.

|

||||

|

||||

## Quality tips

|

||||

- use a high quality video as input

|

||||

- use a high quality model in stable diffusion webui like [delibarate_v2](https://civitai.com/models/4823/deliberate) or [revAnimated_v122](https://civitai.com/models/7371)

|

||||

|

||||

## Contributing

|

||||

|

||||

Contributions to this project are welcome. Please ensure any pull requests are accompanied by a detailed description of the changes made.

|

||||

|

||||

## License

|

||||

* The code in this repository is released under the MIT license as found in the [LICENSE file](LICENSE).

|

||||

* The models weights in this repository are released under the CC-BY-NC 4.0 license as found in the [LICENSE_weights file](LICENSE_weights).

|

||||

|

||||

|

||||

|

|

|

|||

|

|

@ -0,0 +1,10 @@

|

|||

import launch

|

||||

import os

|

||||

|

||||

req_file = os.path.join(os.path.dirname(os.path.realpath(__file__)), "requirements.txt")

|

||||

|

||||

with open(req_file) as file:

|

||||

for lib in file:

|

||||

lib = lib.strip()

|

||||

if not launch.is_installed(lib):

|

||||

launch.run_pip(f"install {lib}", f"wav2lip_uhq requirement: {lib}")

|

||||

|

|

@ -0,0 +1,12 @@

|

|||

imutils

|

||||

dlib

|

||||

numpy

|

||||

opencv-python

|

||||

scipy

|

||||

requests

|

||||

pillow

|

||||

librosa

|

||||

opencv-contrib-python

|

||||

tqdm

|

||||

numba

|

||||

imutils

|

||||

|

|

@ -0,0 +1,55 @@

|

|||

from scripts.wav2lip_uhq_extend_paths import wav2lip_uhq_sys_extend

|

||||

import gradio as gr

|

||||

from scripts.wav2lip.w2l import W2l

|

||||

from scripts.wav2lip.wav2lip_uhq import Wav2LipUHQ

|

||||

from modules.shared import opts, state

|

||||

|

||||

def on_ui_tabs():

|

||||

wav2lip_uhq_sys_extend()

|

||||

|

||||

with gr.Blocks(analytics_enabled=False) as wav2lip_uhq_interface:

|

||||

with gr.Row():

|

||||

video = gr.File(label="Video or Image", info="Filepath of video/image that contains faces to use")

|

||||

audio = gr.File(label="Audio", info="Filepath of video/audio file to use as raw audio source")

|

||||

with gr.Column():

|

||||

checkpoint = gr.Radio(["wav2lip", "wav2lip_gan"], value="wav2lip_gan", label="Checkpoint", info="Name of saved checkpoint to load weights from")

|

||||

no_smooth = gr.Checkbox(label="No Smooth", info="Prevent smoothing face detections over a short temporal window")

|

||||

resize_factor = gr.Slider(minimum=1, maximum=4, step=1, label="Resize Factor", info="Reduce the resolution by this factor. Sometimes, best results are obtained at 480p or 720p")

|

||||

generate_btn = gr.Button("Generate")

|

||||

interrupt_btn = gr.Button('Interrupt', elem_id=f"interrupt", visible=True)

|

||||

|

||||

with gr.Row():

|

||||

with gr.Column():

|

||||

pad_top = gr.Slider(minimum=0, maximum=50, step=1, value=0, label="Pad Top", info="Padding above lips")

|

||||

pad_bottom = gr.Slider(minimum=0, maximum=50, step=1, value=0, label="Pad Bottom", info="Padding below lips")

|

||||

pad_left = gr.Slider(minimum=0, maximum=50, step=1, value=0, label="Pad Left", info="Padding to the left of lips")

|

||||

pad_right = gr.Slider(minimum=0, maximum=50, step=1, value=0, label="Pad Right", info="Padding to the right of lips")

|

||||

|

||||

with gr.Column():

|

||||

with gr.Tabs(elem_id="wav2lip_generated"):

|

||||

result = gr.Video(label="Generated video", format="mp4").style(width=256)

|

||||

|

||||

|

||||

def on_interrupt():

|

||||

state.interrupt()

|

||||

return "Interrupted"

|

||||

|

||||

def generate(video, audio, checkpoint, no_smooth, resize_factor, pad_top, pad_bottom, pad_left, pad_right):

|

||||

state.begin()

|

||||

if video is None or audio is None or checkpoint is None:

|

||||

return

|

||||

w2l = W2l(video.name, audio.name, checkpoint, no_smooth, resize_factor, pad_top, pad_bottom, pad_left, pad_right)

|

||||

w2l.execute()

|

||||

|

||||

w2luhq = Wav2LipUHQ(video.name, audio.name)

|

||||

w2luhq.execute()

|

||||

return "extensions\\sd-wav2lip-uhq\\scripts\\wav2lip\\output\\output_video.mp4"

|

||||

|

||||

generate_btn.click(

|

||||

generate,

|

||||

[video, audio, checkpoint, no_smooth, resize_factor, pad_top, pad_bottom, pad_left, pad_right],

|

||||

result)

|

||||

|

||||

interrupt_btn.click(on_interrupt)

|

||||

|

||||

return [(wav2lip_uhq_interface, "Wav2lip Uhq", "wav2lip_uhq_interface")]

|

||||

|

|

@ -0,0 +1,136 @@

|

|||

import librosa

|

||||

import librosa.filters

|

||||

import numpy as np

|

||||

# import tensorflow as tf

|

||||

from scipy import signal

|

||||

from scipy.io import wavfile

|

||||

from scripts.wav2lip.hparams import hparams as hp

|

||||

|

||||

def load_wav(path, sr):

|

||||

return librosa.core.load(path, sr=sr)[0]

|

||||

|

||||

def save_wav(wav, path, sr):

|

||||

wav *= 32767 / max(0.01, np.max(np.abs(wav)))

|

||||

#proposed by @dsmiller

|

||||

wavfile.write(path, sr, wav.astype(np.int16))

|

||||

|

||||

def save_wavenet_wav(wav, path, sr):

|

||||

librosa.output.write_wav(path, wav, sr=sr)

|

||||

|

||||

def preemphasis(wav, k, preemphasize=True):

|

||||

if preemphasize:

|

||||

return signal.lfilter([1, -k], [1], wav)

|

||||

return wav

|

||||

|

||||

def inv_preemphasis(wav, k, inv_preemphasize=True):

|

||||

if inv_preemphasize:

|

||||

return signal.lfilter([1], [1, -k], wav)

|

||||

return wav

|

||||

|

||||

def get_hop_size():

|

||||

hop_size = hp.hop_size

|

||||

if hop_size is None:

|

||||

assert hp.frame_shift_ms is not None

|

||||

hop_size = int(hp.frame_shift_ms / 1000 * hp.sample_rate)

|

||||

return hop_size

|

||||

|

||||

def linearspectrogram(wav):

|

||||

D = _stft(preemphasis(wav, hp.preemphasis, hp.preemphasize))

|

||||

S = _amp_to_db(np.abs(D)) - hp.ref_level_db

|

||||

|

||||

if hp.signal_normalization:

|

||||

return _normalize(S)

|

||||

return S

|

||||

|

||||

def melspectrogram(wav):

|

||||

D = _stft(preemphasis(wav, hp.preemphasis, hp.preemphasize))

|

||||

S = _amp_to_db(_linear_to_mel(np.abs(D))) - hp.ref_level_db

|

||||

|

||||

if hp.signal_normalization:

|

||||

return _normalize(S)

|

||||

return S

|

||||

|

||||

def _lws_processor():

|

||||

import lws

|

||||

return lws.lws(hp.n_fft, get_hop_size(), fftsize=hp.win_size, mode="speech")

|

||||

|

||||

def _stft(y):

|

||||

if hp.use_lws:

|

||||

return _lws_processor(hp).stft(y).T

|

||||

else:

|

||||

return librosa.stft(y=y, n_fft=hp.n_fft, hop_length=get_hop_size(), win_length=hp.win_size)

|

||||

|

||||

##########################################################

|

||||

#Those are only correct when using lws!!! (This was messing with Wavenet quality for a long time!)

|

||||

def num_frames(length, fsize, fshift):

|

||||

"""Compute number of time frames of spectrogram

|

||||

"""

|

||||

pad = (fsize - fshift)

|

||||

if length % fshift == 0:

|

||||

M = (length + pad * 2 - fsize) // fshift + 1

|

||||

else:

|

||||

M = (length + pad * 2 - fsize) // fshift + 2

|

||||

return M

|

||||

|

||||

|

||||

def pad_lr(x, fsize, fshift):

|

||||

"""Compute left and right padding

|

||||

"""

|

||||

M = num_frames(len(x), fsize, fshift)

|

||||

pad = (fsize - fshift)

|

||||

T = len(x) + 2 * pad

|

||||

r = (M - 1) * fshift + fsize - T

|

||||

return pad, pad + r

|

||||

##########################################################

|

||||

#Librosa correct padding

|

||||

def librosa_pad_lr(x, fsize, fshift):

|

||||

return 0, (x.shape[0] // fshift + 1) * fshift - x.shape[0]

|

||||

|

||||

# Conversions

|

||||

_mel_basis = None

|

||||

|

||||

def _linear_to_mel(spectogram):

|

||||

global _mel_basis

|

||||

if _mel_basis is None:

|

||||

_mel_basis = _build_mel_basis()

|

||||

return np.dot(_mel_basis, spectogram)

|

||||

|

||||

def _build_mel_basis():

|

||||

assert hp.fmax <= hp.sample_rate // 2

|

||||

return librosa.filters.mel(hp.sample_rate, hp.n_fft, n_mels=hp.num_mels,

|

||||

fmin=hp.fmin, fmax=hp.fmax)

|

||||

|

||||

def _amp_to_db(x):

|

||||

min_level = np.exp(hp.min_level_db / 20 * np.log(10))

|

||||

return 20 * np.log10(np.maximum(min_level, x))

|

||||

|

||||

def _db_to_amp(x):

|

||||

return np.power(10.0, (x) * 0.05)

|

||||

|

||||

def _normalize(S):

|

||||

if hp.allow_clipping_in_normalization:

|

||||

if hp.symmetric_mels:

|

||||

return np.clip((2 * hp.max_abs_value) * ((S - hp.min_level_db) / (-hp.min_level_db)) - hp.max_abs_value,

|

||||

-hp.max_abs_value, hp.max_abs_value)

|

||||

else:

|

||||

return np.clip(hp.max_abs_value * ((S - hp.min_level_db) / (-hp.min_level_db)), 0, hp.max_abs_value)

|

||||

|

||||

assert S.max() <= 0 and S.min() - hp.min_level_db >= 0

|

||||

if hp.symmetric_mels:

|

||||

return (2 * hp.max_abs_value) * ((S - hp.min_level_db) / (-hp.min_level_db)) - hp.max_abs_value

|

||||

else:

|

||||

return hp.max_abs_value * ((S - hp.min_level_db) / (-hp.min_level_db))

|

||||

|

||||

def _denormalize(D):

|

||||

if hp.allow_clipping_in_normalization:

|

||||

if hp.symmetric_mels:

|

||||

return (((np.clip(D, -hp.max_abs_value,

|

||||

hp.max_abs_value) + hp.max_abs_value) * -hp.min_level_db / (2 * hp.max_abs_value))

|

||||

+ hp.min_level_db)

|

||||

else:

|

||||

return ((np.clip(D, 0, hp.max_abs_value) * -hp.min_level_db / hp.max_abs_value) + hp.min_level_db)

|

||||

|

||||

if hp.symmetric_mels:

|

||||

return (((D + hp.max_abs_value) * -hp.min_level_db / (2 * hp.max_abs_value)) + hp.min_level_db)

|

||||

else:

|

||||

return ((D * -hp.min_level_db / hp.max_abs_value) + hp.min_level_db)

|

||||

|

|

@ -0,0 +1 @@

|

|||

Place all your checkpoints (.pth files) here.

|

||||

|

|

@ -0,0 +1 @@

|

|||

The code for Face Detection in this folder has been taken from the wonderful [face_alignment](https://github.com/1adrianb/face-alignment) repository. This has been modified to take batches of faces at a time.

|

||||

|

|

@ -0,0 +1,7 @@

|

|||

# -*- coding: utf-8 -*-

|

||||

|

||||

__author__ = """Adrian Bulat"""

|

||||

__email__ = 'adrian.bulat@nottingham.ac.uk'

|

||||

__version__ = '1.0.1'

|

||||

|

||||

from .api import FaceAlignment, LandmarksType, NetworkSize

|

||||

|

|

@ -0,0 +1,79 @@

|

|||

from __future__ import print_function

|

||||

import os

|

||||

import torch

|

||||

from torch.utils.model_zoo import load_url

|

||||

from enum import Enum

|

||||

import numpy as np

|

||||

import cv2

|

||||

try:

|

||||

import urllib.request as request_file

|

||||

except BaseException:

|

||||

import urllib as request_file

|

||||

|

||||

from .models import FAN, ResNetDepth

|

||||

from .utils import *

|

||||

|

||||

|

||||

class LandmarksType(Enum):

|

||||

"""Enum class defining the type of landmarks to detect.

|

||||

|

||||

``_2D`` - the detected points ``(x,y)`` are detected in a 2D space and follow the visible contour of the face

|

||||

``_2halfD`` - this points represent the projection of the 3D points into 3D

|

||||

``_3D`` - detect the points ``(x,y,z)``` in a 3D space

|

||||

|

||||

"""

|

||||

_2D = 1

|

||||

_2halfD = 2

|

||||

_3D = 3

|

||||

|

||||

|

||||

class NetworkSize(Enum):

|

||||

# TINY = 1

|

||||

# SMALL = 2

|

||||

# MEDIUM = 3

|

||||

LARGE = 4

|

||||

|

||||

def __new__(cls, value):

|

||||

member = object.__new__(cls)

|

||||

member._value_ = value

|

||||

return member

|

||||

|

||||

def __int__(self):

|

||||

return self.value

|

||||

|

||||

ROOT = os.path.dirname(os.path.abspath(__file__))

|

||||

|

||||

class FaceAlignment:

|

||||

def __init__(self, landmarks_type, network_size=NetworkSize.LARGE,

|

||||

device='cuda', flip_input=False, face_detector='sfd', verbose=False):

|

||||

self.device = device

|

||||

self.flip_input = flip_input

|

||||

self.landmarks_type = landmarks_type

|

||||

self.verbose = verbose

|

||||

|

||||

network_size = int(network_size)

|

||||

|

||||

if 'cuda' in device:

|

||||

torch.backends.cudnn.benchmark = True

|

||||

|

||||

# Get the face detector

|

||||

face_detector_module = __import__('scripts.wav2lip.face_detection.detection.' + face_detector,

|

||||

globals(), locals(), [face_detector], 0)

|

||||

self.face_detector = face_detector_module.FaceDetector(device=device, verbose=verbose)

|

||||

|

||||

def get_detections_for_batch(self, images):

|

||||

images = images[..., ::-1]

|

||||

detected_faces = self.face_detector.detect_from_batch(images.copy())

|

||||

results = []

|

||||

|

||||

for i, d in enumerate(detected_faces):

|

||||

if len(d) == 0:

|

||||

results.append(None)

|

||||

continue

|

||||

d = d[0]

|

||||

d = np.clip(d, 0, None)

|

||||

|

||||

x1, y1, x2, y2 = map(int, d[:-1])

|

||||

results.append((x1, y1, x2, y2))

|

||||

|

||||

return results

|

||||

|

|

@ -0,0 +1 @@

|

|||

from .core import FaceDetector

|

||||

|

|

@ -0,0 +1,130 @@

|

|||

import logging

|

||||

import glob

|

||||

from tqdm import tqdm

|

||||

import numpy as np

|

||||

import torch

|

||||

import cv2

|

||||

|

||||

|

||||

class FaceDetector(object):

|

||||

"""An abstract class representing a face detector.

|

||||

|

||||

Any other face detection implementation must subclass it. All subclasses

|

||||

must implement ``detect_from_image``, that return a list of detected

|

||||

bounding boxes. Optionally, for speed considerations detect from path is

|

||||

recommended.

|

||||

"""

|

||||

|

||||

def __init__(self, device, verbose):

|

||||

self.device = device

|

||||

self.verbose = verbose

|

||||

|

||||

if verbose:

|

||||

if 'cpu' in device:

|

||||

logger = logging.getLogger(__name__)

|

||||

logger.warning("Detection running on CPU, this may be potentially slow.")

|

||||

|

||||

if 'cpu' not in device and 'cuda' not in device:

|

||||

if verbose:

|

||||

logger.error("Expected values for device are: {cpu, cuda} but got: %s", device)

|

||||

raise ValueError

|

||||

|

||||

def detect_from_image(self, tensor_or_path):

|

||||

"""Detects faces in a given image.

|

||||

|

||||

This function detects the faces present in a provided BGR(usually)

|

||||

image. The input can be either the image itself or the path to it.

|

||||

|

||||

Arguments:

|

||||

tensor_or_path {numpy.ndarray, torch.tensor or string} -- the path

|

||||

to an image or the image itself.

|

||||

|

||||

Example::

|

||||

|

||||

>>> path_to_image = 'data/image_01.jpg'

|

||||

... detected_faces = detect_from_image(path_to_image)

|

||||

[A list of bounding boxes (x1, y1, x2, y2)]

|

||||

>>> image = cv2.imread(path_to_image)

|

||||

... detected_faces = detect_from_image(image)

|

||||

[A list of bounding boxes (x1, y1, x2, y2)]

|

||||

|

||||

"""

|

||||

raise NotImplementedError

|

||||

|

||||

def detect_from_directory(self, path, extensions=['.jpg', '.png'], recursive=False, show_progress_bar=True):

|

||||

"""Detects faces from all the images present in a given directory.

|

||||

|

||||

Arguments:

|

||||

path {string} -- a string containing a path that points to the folder containing the images

|

||||

|

||||

Keyword Arguments:

|

||||

extensions {list} -- list of string containing the extensions to be

|

||||

consider in the following format: ``.extension_name`` (default:

|

||||

{['.jpg', '.png']}) recursive {bool} -- option wherever to scan the

|

||||

folder recursively (default: {False}) show_progress_bar {bool} --

|

||||

display a progressbar (default: {True})

|

||||

|

||||

Example:

|

||||

>>> directory = 'data'

|

||||

... detected_faces = detect_from_directory(directory)

|

||||

{A dictionary of [lists containing bounding boxes(x1, y1, x2, y2)]}

|

||||

|

||||

"""

|

||||

if self.verbose:

|

||||

logger = logging.getLogger(__name__)

|

||||

|

||||

if len(extensions) == 0:

|

||||

if self.verbose:

|

||||

logger.error("Expected at list one extension, but none was received.")

|

||||

raise ValueError

|

||||

|

||||

if self.verbose:

|

||||

logger.info("Constructing the list of images.")

|

||||

additional_pattern = '/**/*' if recursive else '/*'

|

||||

files = []

|

||||

for extension in extensions:

|

||||

files.extend(glob.glob(path + additional_pattern + extension, recursive=recursive))

|

||||

|

||||

if self.verbose:

|

||||

logger.info("Finished searching for images. %s images found", len(files))

|

||||

logger.info("Preparing to run the detection.")

|

||||

|

||||

predictions = {}

|

||||

for image_path in tqdm(files, disable=not show_progress_bar):

|

||||

if self.verbose:

|

||||

logger.info("Running the face detector on image: %s", image_path)

|

||||

predictions[image_path] = self.detect_from_image(image_path)

|

||||

|

||||

if self.verbose:

|

||||

logger.info("The detector was successfully run on all %s images", len(files))

|

||||

|

||||

return predictions

|

||||

|

||||

@property

|

||||

def reference_scale(self):

|

||||

raise NotImplementedError

|

||||

|

||||

@property

|

||||

def reference_x_shift(self):

|

||||

raise NotImplementedError

|

||||

|

||||

@property

|

||||

def reference_y_shift(self):

|

||||

raise NotImplementedError

|

||||

|

||||

@staticmethod

|

||||

def tensor_or_path_to_ndarray(tensor_or_path, rgb=True):

|

||||

"""Convert path (represented as a string) or torch.tensor to a numpy.ndarray

|

||||

|

||||

Arguments:

|

||||

tensor_or_path {numpy.ndarray, torch.tensor or string} -- path to the image, or the image itself

|

||||

"""

|

||||

if isinstance(tensor_or_path, str):

|

||||

return cv2.imread(tensor_or_path) if not rgb else cv2.imread(tensor_or_path)[..., ::-1]

|

||||

elif torch.is_tensor(tensor_or_path):

|

||||

# Call cpu in case its coming from cuda

|

||||

return tensor_or_path.cpu().numpy()[..., ::-1].copy() if not rgb else tensor_or_path.cpu().numpy()

|

||||

elif isinstance(tensor_or_path, np.ndarray):

|

||||

return tensor_or_path[..., ::-1].copy() if not rgb else tensor_or_path

|

||||

else:

|

||||

raise TypeError

|

||||

|

|

@ -0,0 +1 @@

|

|||

from .sfd_detector import SFDDetector as FaceDetector

|

||||

|

|

@ -0,0 +1,129 @@

|

|||

from __future__ import print_function

|

||||

import os

|

||||

import sys

|

||||

import cv2

|

||||

import random

|

||||

import datetime

|

||||

import time

|

||||

import math

|

||||

import argparse

|

||||

import numpy as np

|

||||

import torch

|

||||

|

||||

try:

|

||||

from iou import IOU

|

||||

except BaseException:

|

||||

# IOU cython speedup 10x

|

||||

def IOU(ax1, ay1, ax2, ay2, bx1, by1, bx2, by2):

|

||||

sa = abs((ax2 - ax1) * (ay2 - ay1))

|

||||

sb = abs((bx2 - bx1) * (by2 - by1))

|

||||

x1, y1 = max(ax1, bx1), max(ay1, by1)

|

||||

x2, y2 = min(ax2, bx2), min(ay2, by2)

|

||||

w = x2 - x1

|

||||

h = y2 - y1

|

||||

if w < 0 or h < 0:

|

||||

return 0.0

|

||||

else:

|

||||

return 1.0 * w * h / (sa + sb - w * h)

|

||||

|

||||

|

||||

def bboxlog(x1, y1, x2, y2, axc, ayc, aww, ahh):

|

||||

xc, yc, ww, hh = (x2 + x1) / 2, (y2 + y1) / 2, x2 - x1, y2 - y1

|

||||

dx, dy = (xc - axc) / aww, (yc - ayc) / ahh

|

||||

dw, dh = math.log(ww / aww), math.log(hh / ahh)

|

||||

return dx, dy, dw, dh

|

||||

|

||||

|

||||

def bboxloginv(dx, dy, dw, dh, axc, ayc, aww, ahh):

|

||||

xc, yc = dx * aww + axc, dy * ahh + ayc

|

||||

ww, hh = math.exp(dw) * aww, math.exp(dh) * ahh

|

||||

x1, x2, y1, y2 = xc - ww / 2, xc + ww / 2, yc - hh / 2, yc + hh / 2

|

||||

return x1, y1, x2, y2

|

||||

|

||||

|

||||

def nms(dets, thresh):

|

||||

if 0 == len(dets):

|

||||

return []

|

||||

x1, y1, x2, y2, scores = dets[:, 0], dets[:, 1], dets[:, 2], dets[:, 3], dets[:, 4]

|

||||

areas = (x2 - x1 + 1) * (y2 - y1 + 1)

|

||||

order = scores.argsort()[::-1]

|

||||

|

||||

keep = []

|

||||

while order.size > 0:

|

||||

i = order[0]

|

||||

keep.append(i)

|

||||

xx1, yy1 = np.maximum(x1[i], x1[order[1:]]), np.maximum(y1[i], y1[order[1:]])

|

||||

xx2, yy2 = np.minimum(x2[i], x2[order[1:]]), np.minimum(y2[i], y2[order[1:]])

|

||||

|

||||

w, h = np.maximum(0.0, xx2 - xx1 + 1), np.maximum(0.0, yy2 - yy1 + 1)

|

||||

ovr = w * h / (areas[i] + areas[order[1:]] - w * h)

|

||||

|

||||

inds = np.where(ovr <= thresh)[0]

|

||||

order = order[inds + 1]

|

||||

|

||||

return keep

|

||||

|

||||

|

||||

def encode(matched, priors, variances):

|

||||

"""Encode the variances from the priorbox layers into the ground truth boxes

|

||||

we have matched (based on jaccard overlap) with the prior boxes.

|

||||

Args:

|

||||

matched: (tensor) Coords of ground truth for each prior in point-form

|

||||

Shape: [num_priors, 4].

|

||||

priors: (tensor) Prior boxes in center-offset form

|

||||

Shape: [num_priors,4].

|

||||

variances: (list[float]) Variances of priorboxes

|

||||

Return:

|

||||

encoded boxes (tensor), Shape: [num_priors, 4]

|

||||

"""

|

||||

|

||||

# dist b/t match center and prior's center

|

||||

g_cxcy = (matched[:, :2] + matched[:, 2:]) / 2 - priors[:, :2]

|

||||

# encode variance

|

||||

g_cxcy /= (variances[0] * priors[:, 2:])

|

||||

# match wh / prior wh

|

||||

g_wh = (matched[:, 2:] - matched[:, :2]) / priors[:, 2:]

|

||||

g_wh = torch.log(g_wh) / variances[1]

|

||||

# return target for smooth_l1_loss

|

||||

return torch.cat([g_cxcy, g_wh], 1) # [num_priors,4]

|

||||

|

||||

|

||||

def decode(loc, priors, variances):

|

||||

"""Decode locations from predictions using priors to undo

|

||||

the encoding we did for offset regression at train time.

|

||||

Args:

|

||||

loc (tensor): location predictions for loc layers,

|

||||

Shape: [num_priors,4]

|

||||

priors (tensor): Prior boxes in center-offset form.

|

||||

Shape: [num_priors,4].

|

||||

variances: (list[float]) Variances of priorboxes

|

||||

Return:

|

||||

decoded bounding box predictions

|

||||

"""

|

||||

|

||||

boxes = torch.cat((

|

||||

priors[:, :2] + loc[:, :2] * variances[0] * priors[:, 2:],

|

||||

priors[:, 2:] * torch.exp(loc[:, 2:] * variances[1])), 1)

|

||||

boxes[:, :2] -= boxes[:, 2:] / 2

|

||||

boxes[:, 2:] += boxes[:, :2]

|

||||

return boxes

|

||||

|

||||

def batch_decode(loc, priors, variances):

|

||||

"""Decode locations from predictions using priors to undo

|

||||

the encoding we did for offset regression at train time.

|

||||

Args:

|

||||

loc (tensor): location predictions for loc layers,

|

||||

Shape: [num_priors,4]

|

||||

priors (tensor): Prior boxes in center-offset form.

|

||||

Shape: [num_priors,4].

|

||||

variances: (list[float]) Variances of priorboxes

|

||||

Return:

|

||||

decoded bounding box predictions

|

||||

"""

|

||||

|

||||

boxes = torch.cat((

|

||||

priors[:, :, :2] + loc[:, :, :2] * variances[0] * priors[:, :, 2:],

|

||||

priors[:, :, 2:] * torch.exp(loc[:, :, 2:] * variances[1])), 2)

|

||||

boxes[:, :, :2] -= boxes[:, :, 2:] / 2

|

||||

boxes[:, :, 2:] += boxes[:, :, :2]

|

||||

return boxes

|

||||

|

|

@ -0,0 +1,112 @@

|

|||

import torch

|

||||

import torch.nn.functional as F

|

||||

|

||||

import os

|

||||

import sys

|

||||

import cv2

|

||||

import random

|

||||

import datetime

|

||||

import math

|

||||

import argparse

|

||||

import numpy as np

|

||||

|

||||

import scipy.io as sio

|

||||

import zipfile

|

||||

from .net_s3fd import s3fd

|

||||

from .bbox import *

|

||||

|

||||

|

||||

def detect(net, img, device):

|

||||

img = img - np.array([104, 117, 123])

|

||||

img = img.transpose(2, 0, 1)

|

||||

img = img.reshape((1,) + img.shape)

|

||||

|

||||

if 'cuda' in device:

|

||||

torch.backends.cudnn.benchmark = True

|

||||

|

||||

img = torch.from_numpy(img).float().to(device)

|

||||

BB, CC, HH, WW = img.size()

|

||||

with torch.no_grad():

|

||||

olist = net(img)

|

||||

|

||||

bboxlist = []

|

||||

for i in range(len(olist) // 2):

|

||||

olist[i * 2] = F.softmax(olist[i * 2], dim=1)

|

||||

olist = [oelem.data.cpu() for oelem in olist]

|

||||

for i in range(len(olist) // 2):

|

||||

ocls, oreg = olist[i * 2], olist[i * 2 + 1]

|

||||

FB, FC, FH, FW = ocls.size() # feature map size

|

||||

stride = 2**(i + 2) # 4,8,16,32,64,128

|

||||

anchor = stride * 4

|

||||

poss = zip(*np.where(ocls[:, 1, :, :] > 0.05))

|

||||

for Iindex, hindex, windex in poss:

|

||||

axc, ayc = stride / 2 + windex * stride, stride / 2 + hindex * stride

|

||||

score = ocls[0, 1, hindex, windex]

|

||||

loc = oreg[0, :, hindex, windex].contiguous().view(1, 4)

|

||||

priors = torch.Tensor([[axc / 1.0, ayc / 1.0, stride * 4 / 1.0, stride * 4 / 1.0]])

|

||||

variances = [0.1, 0.2]

|

||||

box = decode(loc, priors, variances)

|

||||

x1, y1, x2, y2 = box[0] * 1.0

|

||||

# cv2.rectangle(imgshow,(int(x1),int(y1)),(int(x2),int(y2)),(0,0,255),1)

|

||||

bboxlist.append([x1, y1, x2, y2, score])

|

||||

bboxlist = np.array(bboxlist)

|

||||

if 0 == len(bboxlist):

|

||||

bboxlist = np.zeros((1, 5))

|

||||

|

||||

return bboxlist

|

||||

|

||||

def batch_detect(net, imgs, device):

|

||||

imgs = imgs - np.array([104, 117, 123])

|

||||

imgs = imgs.transpose(0, 3, 1, 2)

|

||||

|

||||

if 'cuda' in device:

|

||||

torch.backends.cudnn.benchmark = True

|

||||

|

||||

imgs = torch.from_numpy(imgs).float().to(device)

|

||||

BB, CC, HH, WW = imgs.size()

|

||||

with torch.no_grad():

|

||||

olist = net(imgs)

|

||||

|

||||

bboxlist = []

|

||||

for i in range(len(olist) // 2):

|

||||

olist[i * 2] = F.softmax(olist[i * 2], dim=1)

|

||||

olist = [oelem.data.cpu() for oelem in olist]

|

||||

for i in range(len(olist) // 2):

|

||||

ocls, oreg = olist[i * 2], olist[i * 2 + 1]

|

||||

FB, FC, FH, FW = ocls.size() # feature map size

|

||||

stride = 2**(i + 2) # 4,8,16,32,64,128

|

||||

anchor = stride * 4

|

||||

poss = zip(*np.where(ocls[:, 1, :, :] > 0.05))

|

||||

for Iindex, hindex, windex in poss:

|

||||

axc, ayc = stride / 2 + windex * stride, stride / 2 + hindex * stride

|

||||

score = ocls[:, 1, hindex, windex]

|

||||

loc = oreg[:, :, hindex, windex].contiguous().view(BB, 1, 4)

|

||||

priors = torch.Tensor([[axc / 1.0, ayc / 1.0, stride * 4 / 1.0, stride * 4 / 1.0]]).view(1, 1, 4)

|

||||

variances = [0.1, 0.2]

|

||||

box = batch_decode(loc, priors, variances)

|

||||

box = box[:, 0] * 1.0

|

||||

# cv2.rectangle(imgshow,(int(x1),int(y1)),(int(x2),int(y2)),(0,0,255),1)

|

||||

bboxlist.append(torch.cat([box, score.unsqueeze(1)], 1).cpu().numpy())

|

||||

bboxlist = np.array(bboxlist)

|

||||

if 0 == len(bboxlist):

|

||||

bboxlist = np.zeros((1, BB, 5))

|

||||

|

||||

return bboxlist

|

||||

|

||||

def flip_detect(net, img, device):

|

||||

img = cv2.flip(img, 1)

|

||||

b = detect(net, img, device)

|

||||

|

||||

bboxlist = np.zeros(b.shape)

|

||||

bboxlist[:, 0] = img.shape[1] - b[:, 2]

|

||||

bboxlist[:, 1] = b[:, 1]

|

||||

bboxlist[:, 2] = img.shape[1] - b[:, 0]

|

||||

bboxlist[:, 3] = b[:, 3]

|

||||

bboxlist[:, 4] = b[:, 4]

|

||||

return bboxlist

|

||||

|

||||

|

||||

def pts_to_bb(pts):

|

||||

min_x, min_y = np.min(pts, axis=0)

|

||||

max_x, max_y = np.max(pts, axis=0)

|

||||

return np.array([min_x, min_y, max_x, max_y])

|

||||

|

|

@ -0,0 +1,129 @@

|

|||

import torch

|

||||

import torch.nn as nn

|

||||

import torch.nn.functional as F

|

||||

|

||||

|

||||

class L2Norm(nn.Module):

|

||||

def __init__(self, n_channels, scale=1.0):

|

||||

super(L2Norm, self).__init__()

|

||||

self.n_channels = n_channels

|

||||

self.scale = scale

|

||||

self.eps = 1e-10

|

||||

self.weight = nn.Parameter(torch.Tensor(self.n_channels))

|

||||

self.weight.data *= 0.0

|

||||

self.weight.data += self.scale

|

||||

|

||||

def forward(self, x):

|

||||

norm = x.pow(2).sum(dim=1, keepdim=True).sqrt() + self.eps

|

||||

x = x / norm * self.weight.view(1, -1, 1, 1)

|

||||

return x

|

||||

|

||||

|

||||

class s3fd(nn.Module):

|

||||

def __init__(self):

|

||||

super(s3fd, self).__init__()

|

||||

self.conv1_1 = nn.Conv2d(3, 64, kernel_size=3, stride=1, padding=1)

|

||||

self.conv1_2 = nn.Conv2d(64, 64, kernel_size=3, stride=1, padding=1)

|

||||

|

||||

self.conv2_1 = nn.Conv2d(64, 128, kernel_size=3, stride=1, padding=1)

|

||||

self.conv2_2 = nn.Conv2d(128, 128, kernel_size=3, stride=1, padding=1)

|

||||

|

||||

self.conv3_1 = nn.Conv2d(128, 256, kernel_size=3, stride=1, padding=1)

|

||||

self.conv3_2 = nn.Conv2d(256, 256, kernel_size=3, stride=1, padding=1)

|

||||

self.conv3_3 = nn.Conv2d(256, 256, kernel_size=3, stride=1, padding=1)

|

||||

|

||||

self.conv4_1 = nn.Conv2d(256, 512, kernel_size=3, stride=1, padding=1)

|

||||

self.conv4_2 = nn.Conv2d(512, 512, kernel_size=3, stride=1, padding=1)

|

||||

self.conv4_3 = nn.Conv2d(512, 512, kernel_size=3, stride=1, padding=1)

|

||||

|

||||

self.conv5_1 = nn.Conv2d(512, 512, kernel_size=3, stride=1, padding=1)

|

||||

self.conv5_2 = nn.Conv2d(512, 512, kernel_size=3, stride=1, padding=1)

|

||||

self.conv5_3 = nn.Conv2d(512, 512, kernel_size=3, stride=1, padding=1)

|

||||

|

||||

self.fc6 = nn.Conv2d(512, 1024, kernel_size=3, stride=1, padding=3)

|

||||

self.fc7 = nn.Conv2d(1024, 1024, kernel_size=1, stride=1, padding=0)

|

||||

|

||||

self.conv6_1 = nn.Conv2d(1024, 256, kernel_size=1, stride=1, padding=0)

|

||||

self.conv6_2 = nn.Conv2d(256, 512, kernel_size=3, stride=2, padding=1)

|

||||

|

||||

self.conv7_1 = nn.Conv2d(512, 128, kernel_size=1, stride=1, padding=0)

|

||||

self.conv7_2 = nn.Conv2d(128, 256, kernel_size=3, stride=2, padding=1)

|

||||

|

||||

self.conv3_3_norm = L2Norm(256, scale=10)

|

||||

self.conv4_3_norm = L2Norm(512, scale=8)

|

||||

self.conv5_3_norm = L2Norm(512, scale=5)

|

||||

|

||||

self.conv3_3_norm_mbox_conf = nn.Conv2d(256, 4, kernel_size=3, stride=1, padding=1)

|

||||

self.conv3_3_norm_mbox_loc = nn.Conv2d(256, 4, kernel_size=3, stride=1, padding=1)

|

||||

self.conv4_3_norm_mbox_conf = nn.Conv2d(512, 2, kernel_size=3, stride=1, padding=1)

|

||||

self.conv4_3_norm_mbox_loc = nn.Conv2d(512, 4, kernel_size=3, stride=1, padding=1)

|

||||

self.conv5_3_norm_mbox_conf = nn.Conv2d(512, 2, kernel_size=3, stride=1, padding=1)

|

||||

self.conv5_3_norm_mbox_loc = nn.Conv2d(512, 4, kernel_size=3, stride=1, padding=1)

|

||||

|

||||

self.fc7_mbox_conf = nn.Conv2d(1024, 2, kernel_size=3, stride=1, padding=1)

|

||||

self.fc7_mbox_loc = nn.Conv2d(1024, 4, kernel_size=3, stride=1, padding=1)

|

||||

self.conv6_2_mbox_conf = nn.Conv2d(512, 2, kernel_size=3, stride=1, padding=1)

|

||||

self.conv6_2_mbox_loc = nn.Conv2d(512, 4, kernel_size=3, stride=1, padding=1)

|

||||

self.conv7_2_mbox_conf = nn.Conv2d(256, 2, kernel_size=3, stride=1, padding=1)

|

||||

self.conv7_2_mbox_loc = nn.Conv2d(256, 4, kernel_size=3, stride=1, padding=1)

|

||||

|

||||

def forward(self, x):

|

||||

h = F.relu(self.conv1_1(x))

|

||||

h = F.relu(self.conv1_2(h))

|

||||

h = F.max_pool2d(h, 2, 2)

|

||||

|

||||

h = F.relu(self.conv2_1(h))

|

||||

h = F.relu(self.conv2_2(h))

|

||||

h = F.max_pool2d(h, 2, 2)

|

||||

|

||||

h = F.relu(self.conv3_1(h))

|

||||

h = F.relu(self.conv3_2(h))

|

||||

h = F.relu(self.conv3_3(h))

|

||||

f3_3 = h

|

||||

h = F.max_pool2d(h, 2, 2)

|

||||

|

||||

h = F.relu(self.conv4_1(h))

|

||||

h = F.relu(self.conv4_2(h))

|

||||

h = F.relu(self.conv4_3(h))

|

||||

f4_3 = h

|

||||

h = F.max_pool2d(h, 2, 2)

|

||||

|

||||

h = F.relu(self.conv5_1(h))

|

||||

h = F.relu(self.conv5_2(h))

|

||||

h = F.relu(self.conv5_3(h))

|

||||

f5_3 = h

|

||||

h = F.max_pool2d(h, 2, 2)

|

||||

|

||||

h = F.relu(self.fc6(h))

|

||||

h = F.relu(self.fc7(h))

|

||||

ffc7 = h

|

||||

h = F.relu(self.conv6_1(h))

|

||||

h = F.relu(self.conv6_2(h))

|

||||

f6_2 = h

|

||||

h = F.relu(self.conv7_1(h))

|

||||

h = F.relu(self.conv7_2(h))

|

||||

f7_2 = h

|

||||

|

||||

f3_3 = self.conv3_3_norm(f3_3)

|

||||

f4_3 = self.conv4_3_norm(f4_3)

|

||||

f5_3 = self.conv5_3_norm(f5_3)

|

||||

|

||||

cls1 = self.conv3_3_norm_mbox_conf(f3_3)

|

||||

reg1 = self.conv3_3_norm_mbox_loc(f3_3)

|

||||

cls2 = self.conv4_3_norm_mbox_conf(f4_3)

|

||||

reg2 = self.conv4_3_norm_mbox_loc(f4_3)

|

||||

cls3 = self.conv5_3_norm_mbox_conf(f5_3)

|

||||

reg3 = self.conv5_3_norm_mbox_loc(f5_3)

|

||||

cls4 = self.fc7_mbox_conf(ffc7)

|

||||

reg4 = self.fc7_mbox_loc(ffc7)

|

||||

cls5 = self.conv6_2_mbox_conf(f6_2)

|

||||

reg5 = self.conv6_2_mbox_loc(f6_2)

|

||||

cls6 = self.conv7_2_mbox_conf(f7_2)

|

||||

reg6 = self.conv7_2_mbox_loc(f7_2)

|

||||

|

||||

# max-out background label

|

||||

chunk = torch.chunk(cls1, 4, 1)

|

||||

bmax = torch.max(torch.max(chunk[0], chunk[1]), chunk[2])

|

||||

cls1 = torch.cat([bmax, chunk[3]], dim=1)

|

||||

|

||||

return [cls1, reg1, cls2, reg2, cls3, reg3, cls4, reg4, cls5, reg5, cls6, reg6]

|

||||

|

|

@ -0,0 +1,60 @@

|

|||

import os

|

||||

import cv2

|

||||

from torch.utils.model_zoo import load_url

|

||||

import modules.shared as shared

|

||||

from ..core import FaceDetector

|

||||

|

||||

from .net_s3fd import s3fd

|

||||

from .bbox import *

|

||||

from .detect import *

|

||||

|

||||

models_urls = {

|

||||

's3fd': 'https://www.adrianbulat.com/downloads/python-fan/s3fd-619a316812.pth',

|

||||

}

|

||||

|

||||

|

||||

class SFDDetector(FaceDetector):

|

||||

def __init__(self, device, path_to_detector=os.path.join(os.path.dirname(os.path.abspath(__file__)), 's3fd.pth'), verbose=False):

|

||||

super(SFDDetector, self).__init__(device, verbose)

|

||||

shared.cmd_opts.disable_safe_unpickle = True

|

||||

# Initialise the face detector

|

||||

if not os.path.isfile(path_to_detector):

|

||||

model_weights = load_url(models_urls['s3fd'])

|

||||

else:

|

||||

model_weights = torch.load(path_to_detector)

|

||||

|

||||

self.face_detector = s3fd()

|

||||

self.face_detector.load_state_dict(model_weights)

|

||||

self.face_detector.to(device)

|

||||

self.face_detector.eval()

|

||||

shared.cmd_opts.disable_safe_unpickle = False

|

||||

|

||||

def detect_from_image(self, tensor_or_path):

|

||||

image = self.tensor_or_path_to_ndarray(tensor_or_path)

|

||||

|

||||

bboxlist = detect(self.face_detector, image, device=self.device)

|

||||

keep = nms(bboxlist, 0.3)

|

||||

bboxlist = bboxlist[keep, :]

|

||||

bboxlist = [x for x in bboxlist if x[-1] > 0.5]

|

||||

|

||||

return bboxlist

|

||||

|

||||

def detect_from_batch(self, images):

|

||||

bboxlists = batch_detect(self.face_detector, images, device=self.device)

|

||||

keeps = [nms(bboxlists[:, i, :], 0.3) for i in range(bboxlists.shape[1])]

|

||||

bboxlists = [bboxlists[keep, i, :] for i, keep in enumerate(keeps)]

|

||||

bboxlists = [[x for x in bboxlist if x[-1] > 0.5] for bboxlist in bboxlists]

|

||||

|

||||

return bboxlists

|

||||

|

||||

@property

|

||||

def reference_scale(self):

|

||||

return 195

|

||||

|

||||

@property

|

||||

def reference_x_shift(self):

|

||||

return 0

|

||||

|

||||

@property

|

||||

def reference_y_shift(self):

|

||||

return 0

|

||||

|

|

@ -0,0 +1,261 @@

|

|||

import torch

|

||||

import torch.nn as nn

|

||||

import torch.nn.functional as F

|

||||

import math

|

||||

|

||||

|

||||

def conv3x3(in_planes, out_planes, strd=1, padding=1, bias=False):

|

||||

"3x3 convolution with padding"

|

||||