commit

5b87047b7d

36

README.md


@ -18,7 +18,7 @@ If you like the project, please give me a star! ⭐

The extension enables **large image drawing & upscaling with limited VRAM** via the following techniques:

1. Two SOTA diffusion tiling algorithms: [Mixture of Diffusers](https://github.com/albarji/mixture-of-diffusers) and [MultiDiffusion](https://multidiffusion.github.io), add [Demofusion](https://github.com/PRIS-CV/DemoFusion)
1. SOTA diffusion tiling algorithms: [Mixture of Diffusers](https://github.com/albarji/mixture-of-diffusers), [MultiDiffusion](https://multidiffusion.github.io), and [Demofusion](https://github.com/PRIS-CV/DemoFusion)
2. My original Tiled VAE algorithm.
3. My original Tiled Noise Inversion for better upscaling.

@ -32,6 +32,7 @@ The extension enables **large image drawing & upscaling with limited VRAM** via

- [x] [Regional Prompt Control](#region-prompt-control)
- [x] [Img2img upscale](#img2img-upscale)
- [x] [Ultra-Large image generation](#ultra-large-image-generation)
- [x] [Demofusion available](#Demofusion-available)

=> Quickstart Tutorial: [Tutorial for multidiffusion upscaler for automatic1111](https://civitai.com/models/34726), thanks to [@PotatoBananaApple](https://github.com/pkuliyi2015/multidiffusion-upscaler-for-automatic1111/discussions/120) 🎉

@ -186,6 +187,39 @@ The extension enables **large image drawing & upscaling with limited VRAM** via

****

### Demofusion available

ℹ Demofusion takes relatively long to run, but it produces multiple images at different resolutions in a single pass. As with tilediffusion, enable tilevae if you run into an OOM error.

ℹ Higher step counts, such as 30 or more, are recommended for better results.

ℹ For a 512 * 512 image, a suitable window size and overlap are 64 and 32 or smaller. For 1024, double both values, and so on.
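
The scaling rule in the tip above is linear in the image side. A minimal sketch, assuming the 512 → (64, 32) baseline stated in the tip; `recommended_window` is a hypothetical helper, not part of the extension. The returned values are upper bounds, since the tip allows smaller ones.

```python
def recommended_window(image_side: int) -> tuple[int, int]:
    """Hypothetical helper: scale the 512 -> (64, 32) baseline linearly.

    Returns (window_size, overlap) as ceilings; the tip says
    "or smaller", so lower values are also acceptable.
    """
    base_side, base_window, base_overlap = 512, 64, 32
    factor = image_side / base_side
    return int(base_window * factor), int(base_overlap * factor)
```

For 1024 this gives (128, 64), which matches the default values of the "Latent window size" and "Latent window overlap" sliders added in this commit.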

ℹ In img2img, a higher denoising strength (around 0.8-1) is recommended; where possible, reuse the model, seed, and prompt that produced the original image.

ℹ Do not enable it together with tilediffusion. It does work with tilevae, noise inversion, and similar features.

ℹ Because of differences in implementation details, the c1, c2, c3 and sigma values from [demofusion](https://ruoyidu.github.io/demofusion/demofusion.html) can serve as a reference but may not always carry over. If images come out blurry, increase c3 and reduce Sigma.
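
The c1/c2/c3 sliders act as exponents on a cosine decay factor. The sketch below mirrors the scalar expressions in the `forward_one_step` hunk of this diff (`cosine_factor`, `c1`, and the `c3` used as the Gaussian-filter sigma), with plain floats instead of tensors. The function names are illustrative, not the extension's API.

```python
import math


def cosine_factor(current_step: int, t_enc: int) -> float:
    """Decays from close to 1 at step 0 to exactly 0 at step t_enc."""
    return 0.5 * (1 + math.cos(math.pi * (current_step + 1) / (t_enc + 1)))


def skip_residual_weight(current_step: int, t_enc: int, cosine_scale_1: float) -> float:
    """c1: weight given to the noised copy of the previous-stage latent."""
    return cosine_factor(current_step, t_enc) ** cosine_scale_1


def blur_sigma(current_step: int, t_enc: int, cosine_scale_3: float) -> float:
    """0.8 * c3: the sigma passed to the Gaussian filter in that hunk."""
    c3 = 0.99 * cosine_factor(current_step, t_enc) ** cosine_scale_3 + 1e-2
    return 0.8 * c3


def mix(x_in: float, x_noisy: float, c1: float) -> float:
    """x_in * (1 - c1) + x_noisy * c1 (element-wise on tensors in the real code)."""
    return x_in * (1 - c1) + x_noisy * c1
```

Because the factor never exceeds 1, raising a cosine scale makes the corresponding weight fall off faster; for c3 that means a smaller blur sigma at a given step, which is consistent with the advice to increase c3 when results are blurry.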

|

||||

|

||||

|

||||

|

||||

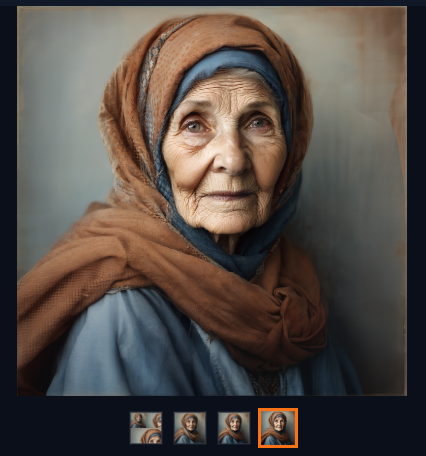

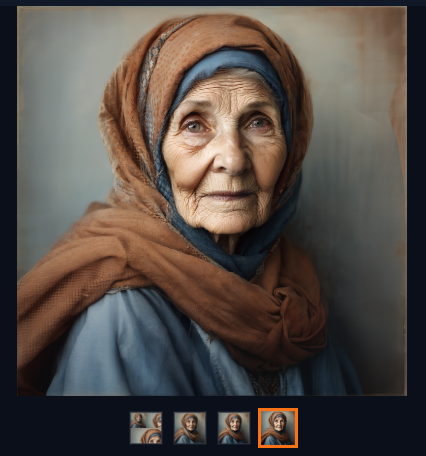

#### Example: txt2img, 1024 * 1024 image 3x upscale

|

||||

|

||||

- Params:

|

||||

|

||||

- Step=45, sampler=Euler, same prompt as official demofusion, random seed 35, model SDXL1.0

|

||||

|

||||

- Denoising Strength for Substage = 0.85, Sigma=0.5,use default values for the rest,enable tilevae

|

||||

|

||||

- Device: 4060ti 16GB

|

||||

- The following are the images obtained at a resolution of 1024, 2048, and 3072
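
The three resolutions follow from the per-image size list that `postprocess_batch_list` builds in this commit. A sketch of that comprehension with plain integers standing in for `p.width`, `p.scale_factor` and `p.batch_size`, assuming `p.width` holds the base (1x) width at that point; `stage_sizes` is a hypothetical name:

```python
def stage_sizes(base: int, scale_factor: int, batch_size: int) -> list[int]:
    """Mirror of the width_list comprehension: one entry per output image.

    Images are grouped by stage (1x, 2x, ... scale_factor x), and each
    stage repeats its size once per image in the batch.
    """
    return [base * (stage + 1)
            for stage in range(scale_factor)
            for _ in range(batch_size)]
```

With the example settings, `stage_sizes(1024, 3, 1)` yields `[1024, 2048, 3072]`.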

****

###

## Installation

⚪ Method 1: Official Market

33

README_CN.md


@ -1,4 +1,4 @@

# Generate large images with Tiled Diffusion & VAE
Generate large images with Tiled Diffusion & VAE

[![CC BY-NC-SA 4.0][cc-by-nc-sa-shield]][cc-by-nc-sa]

@ -28,6 +28,7 @@

- [x] [Regional prompt control](#区域提示控制)
- [x] [Img2img upscaling](#img2img-放大)
- [x] [Generating ultra-large images](#生成超大图像)
- [x] [Demofusion now available](#Demofusion现已可用)

=> Quickstart tutorial: [Tutorial for multidiffusion upscaler for automatic1111](https://civitai.com/models/34726), thanks to [@PotatoBananaApple](https://github.com/pkuliyi2015/multidiffusion-upscaler-for-automatic1111/discussions/120) 🎉

@ -181,6 +182,36 @@

****

### Demofusion now available

ℹ Demofusion takes relatively long to run, but it produces several images at different resolutions in one pass. As with tilediffusion, enable tilevae if an OOM error occurs.

ℹ Higher step counts, such as 30 or more, are recommended for better results.

ℹ If the image size is set to 512*512, a suitable window size and overlap are 64 and 32 or smaller. For 1024, doubling them is recommended, and so on.

ℹ For img2img, a higher denoising strength such as 0.8-1 is recommended; where possible, use the model, random seed, prompt, etc. that generated the original image.

ℹ Do not enable tilediffusion at the same time. The component does support common features such as tilevae and noise inversion.

ℹ Due to differences in implementation details, parameters such as c1, c2 and c3 can follow [demofusion](https://ruoyidu.github.io/demofusion/demofusion.html), but may not fully work. If images come out blurry, increasing c3 and lowering Sigma is recommended.

#### Example: txt2img, 1024*1024 image, 3x upscale

- Params:
- Steps = 45, sampler = Euler, same prompt as the demofusion example, random seed 35, model SDXL1.0
- Substage denoising = 0.85, Sigma=0.5, default values for the rest, tilevae enabled
- Device: 4060ti 16GB
- Below are the resulting images at 1024, 2048 and 3072 resolution

****

###

## Installation

⚪ Method 1: Official Market

@ -369,7 +369,7 @@ class Script(scripts.Script):

info["Region control"] = region_info
Script.create_random_tensors_original_md = processing.create_random_tensors
processing.create_random_tensors = lambda *args, **kwargs: self.create_random_tensors_hijack(
bbox_settings, region_info,
bbox_settings, region_info,
*args, **kwargs,
)

@ -13,12 +13,27 @@ from modules.ui import gr_show

from tile_methods.abstractdiffusion import AbstractDiffusion
from tile_methods.demofusion import DemoFusion
from tile_utils.utils import *
from modules.sd_samplers_common import InterruptedException
# import k_diffusion.sampling


CFG_PATH = os.path.join(scripts.basedir(), 'region_configs')
BBOX_MAX_NUM = min(getattr(shared.cmd_opts, 'md_max_regions', 8), 16)


def create_infotext_hijack(p, all_prompts, all_seeds, all_subseeds, comments=None, iteration=0, position_in_batch=0, use_main_prompt=False, index=-1, all_negative_prompts=None):
idx = index
if index == -1:
idx = None
text = processing.create_infotext_ori(p, all_prompts, all_seeds, all_subseeds, comments, iteration, position_in_batch, use_main_prompt, idx, all_negative_prompts)
start_index = text.find("Size")
if start_index != -1:
r_text = f"Size:{p.width_list[index]}x{p.height_list[index]}"
end_index = text.find(",", start_index)
if end_index != -1:
replaced_string = text[:start_index] + r_text + text[end_index:]
return replaced_string
return text

class Script(scripts.Script):
def __init__(self):
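
`create_infotext_hijack` above rewrites the `Size` field of the generated infotext so that each saved image reports its own stage resolution. The sketch below isolates that string surgery as a standalone function; `replace_size_field` is a hypothetical name. Two deliberate differences are labeled in the code: it writes `Size: WxH` with a space after the colon, and when `Size` is the last field (no trailing comma) it replaces up to the end of the string, whereas the hunk above returns the text unchanged in that case.

```python
def replace_size_field(text: str, width: int, height: int) -> str:
    """Replace the 'Size: ...' entry of a comma-separated infotext string.

    Hypothetical helper mirroring create_infotext_hijack. Unlike the hunk,
    it also handles 'Size' as the final field (no trailing comma).
    """
    start = text.find("Size")
    if start == -1:
        return text  # no Size field: leave the infotext untouched
    end = text.find(",", start)
    if end == -1:
        end = len(text)  # Size is the final field
    return text[:start] + f"Size: {width}x{height}" + text[end:]
```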


@ -41,55 +56,45 @@ class Script(scripts.Script):

with gr.Accordion('DemoFusion', open=False, elem_id=f'MD-{tab}'):
with gr.Row(variant='compact') as tab_enable:
enabled = gr.Checkbox(label='Enable DemoFusion(Do not open it with tilediffusion)', value=False, elem_id=uid('enabled'))
# overwrite_size = gr.Checkbox(label='Overwrite image size', value=False, visible=not is_img2img, elem_id=uid('overwrite-image-size'))
keep_input_size = gr.Checkbox(label='Keep input image size', value=True, visible=is_img2img, elem_id=uid('keep-input-size'))
random_jitter = gr.Checkbox(label='Random jitter windows', value=True, elem_id=uid('random-jitter'))
enabled = gr.Checkbox(label='Enable DemoFusion(Dont open with tilediffusion)', value=False, elem_id=uid('enabled'))
random_jitter = gr.Checkbox(label='Random Jitter', value = True, elem_id=uid('random-jitter'))
gaussian_filter = gr.Checkbox(label='Gaussian Filter', value=True, visible=False, elem_id=uid('gaussian'))
keep_input_size = gr.Checkbox(label='Keep input-image size', value=False,visible=is_img2img, elem_id=uid('keep-input-size'))

# with gr.Row(variant='compact', visible=False) as tab_size:
# image_width = gr.Slider(minimum=256, maximum=16384, step=16, label='Image width', value=1024, elem_id=f'MD-overwrite-width-{tab}')
# image_height = gr.Slider(minimum=256, maximum=16384, step=16, label='Image height', value=1024, elem_id=f'MD-overwrite-height-{tab}')
# overwrite_size.change(fn=gr_show, inputs=overwrite_size, outputs=tab_size, show_progress=False)

# with gr.Row(variant='compact', visible=True) as tab_size:
# c1 = gr.Slider(minimum=0.5, maximum=3, step=0.1, label='c1', value=3, elem_id=f'c1-{tab}')
# c2 = gr.Slider(minimum=0.5, maximum=3, step=0.1, label='c2', value=1, elem_id=f'c2-{tab}')
# c3 = gr.Slider(minimum=0.5, maximum=3, step=0.1, label='c3', value=1, elem_id=f'c3-{tab}')

with gr.Row(variant='compact') as tab_param:
method = gr.Dropdown(label='Method', choices=[Method_2.DEMO_FU.value], value=Method_2.DEMO_FU.value, elem_id=uid('method-2'))
control_tensor_cpu = gr.Checkbox(label='Move ControlNet tensor to CPU (if applicable)', value=False, elem_id=uid('control-tensor-cpu-2'))
method = gr.Dropdown(label='Method', choices=[Method_2.DEMO_FU.value], value=Method_2.DEMO_FU.value, visible= False, elem_id=uid('method'))
control_tensor_cpu = gr.Checkbox(label='Move ControlNet tensor to CPU (if applicable)', value=False, elem_id=uid('control-tensor-cpu'))
reset_status = gr.Button(value='Free GPU', variant='tool')
reset_status.click(fn=self.reset_and_gc, show_progress=False)

with gr.Group() as tab_tile:
with gr.Row(variant='compact'):
window_size = gr.Slider(minimum=16, maximum=256, step=16, label='Latent window size', value=128, elem_id=uid('latent-window-size'))
# tile_height = gr.Slider(minimum=16, maximum=256, step=16, label='Latent tile height', value=96, elem_id=uid('latent-tile-height'))

with gr.Row(variant='compact'):
overlap = gr.Slider(minimum=0, maximum=256, step=4, label='Latent window overlap', value=64, elem_id=uid('latent-tile-overlap-2'))
batch_size = gr.Slider(minimum=1, maximum=8, step=1, label='Latent window batch size', value=4, elem_id=uid('latent-tile-batch-size-2'))

with gr.Row(variant='compact', visible=True) as tab_size:
c1 = gr.Slider(minimum=0.5, maximum=3, step=0.1, label='c1', value=3, elem_id=f'c1-{tab}')
c2 = gr.Slider(minimum=0.5, maximum=3, step=0.1, label='c2', value=1, elem_id=f'c2-{tab}')
c3 = gr.Slider(minimum=0.5, maximum=3, step=0.1, label='c3', value=1, visible=False, elem_id=f'c3-{tab}') #XXX:this parameter is useless in current version

overlap = gr.Slider(minimum=0, maximum=256, step=4, label='Latent window overlap', value=64, elem_id=uid('latent-tile-overlap'))
batch_size = gr.Slider(minimum=1, maximum=8, step=1, label='Latent window batch size', value=4, elem_id=uid('latent-tile-batch-size'))
with gr.Row(variant='compact', visible=True) as tab_c:
c1 = gr.Slider(minimum=0, maximum=5, step=0.01, label='Cosine Scale 1', value=3, elem_id=f'C1-{tab}')
c2 = gr.Slider(minimum=0, maximum=5, step=0.01, label='Cosine Scale 2', value=1, elem_id=f'C2-{tab}')
c3 = gr.Slider(minimum=0, maximum=5, step=0.01, label='Cosine Scale 3', value=1, elem_id=f'C3-{tab}')
sigma = gr.Slider(minimum=0, maximum=1, step=0.01, label='Sigma', value=0.5, elem_id=f'Sigma-{tab}')
with gr.Group() as tab_denoise:
strength = gr.Slider(minimum=0, maximum=1, step=0.01, value = 0.85,label='Denoising Strength for Substage',visible=not is_img2img, elem_id=f'strength-{tab}')
with gr.Row(variant='compact') as tab_upscale:
# upscaler_name = gr.Dropdown(label='Upscaler', choices=[x.name for x in shared.sd_upscalers], value='None', elem_id=uid('upscaler-index'))
scale_factor = gr.Slider(minimum=1.0, maximum=8.0, step=1, label='Scale_Factor', value=2.0, elem_id=uid('upscaler-factor-2'))
# scale_factor = gr.Slider(minimum=1.0, maximum=8.0, step=1, label='Overwrite Scale Factor', value=2.0,value=is_img2img, elem_id=uid('upscaler-factor'))
scale_factor = gr.Slider(minimum=1.0, maximum=8.0, step=1, label='Scale Factor', value=2.0, elem_id=uid('upscaler-factor'))


with gr.Accordion('Noise Inversion', open=True, visible=is_img2img) as tab_noise_inv:
with gr.Row(variant='compact'):
noise_inverse = gr.Checkbox(label='Enable Noise Inversion', value=False, elem_id=uid('noise-inverse-2'))
noise_inverse_steps = gr.Slider(minimum=1, maximum=200, step=1, label='Inversion steps', value=10, elem_id=uid('noise-inverse-steps-2'))
noise_inverse = gr.Checkbox(label='Enable Noise Inversion', value=False, elem_id=uid('noise-inverse'))
noise_inverse_steps = gr.Slider(minimum=1, maximum=200, step=1, label='Inversion steps', value=10, elem_id=uid('noise-inverse-steps'))
gr.HTML('<p>Please test on small images before actual upscale. Default params require denoise <= 0.6</p>')
with gr.Row(variant='compact'):
noise_inverse_retouch = gr.Slider(minimum=1, maximum=100, step=0.1, label='Retouch', value=1, elem_id=uid('noise-inverse-retouch-2'))
noise_inverse_renoise_strength = gr.Slider(minimum=0, maximum=2, step=0.01, label='Renoise strength', value=1, elem_id=uid('noise-inverse-renoise-strength-2'))
noise_inverse_renoise_kernel = gr.Slider(minimum=2, maximum=512, step=1, label='Renoise kernel size', value=64, elem_id=uid('noise-inverse-renoise-kernel-2'))
noise_inverse_retouch = gr.Slider(minimum=1, maximum=100, step=0.1, label='Retouch', value=1, elem_id=uid('noise-inverse-retouch'))
noise_inverse_renoise_strength = gr.Slider(minimum=0, maximum=2, step=0.01, label='Renoise strength', value=1, elem_id=uid('noise-inverse-renoise-strength'))
noise_inverse_renoise_kernel = gr.Slider(minimum=2, maximum=512, step=1, label='Renoise kernel size', value=64, elem_id=uid('noise-inverse-renoise-kernel'))

# The control includes txt2img and img2img, we use t2i and i2i to distinguish them

@ -101,7 +106,7 @@ class Script(scripts.Script):

noise_inverse, noise_inverse_steps, noise_inverse_retouch, noise_inverse_renoise_strength, noise_inverse_renoise_kernel,
control_tensor_cpu,
random_jitter,
c1,c2,c3
c1,c2,c3,gaussian_filter,strength,sigma
]

@ -113,7 +118,7 @@ class Script(scripts.Script):

noise_inverse: bool, noise_inverse_steps: int, noise_inverse_retouch: float, noise_inverse_renoise_strength: float, noise_inverse_renoise_kernel: int,
control_tensor_cpu: bool,
random_jitter:bool,
c1,c2,c3
c1,c2,c3,gaussian_filter,strength,sigma
):

# unhijack & unhook, in case it broke at last time

@ -129,6 +134,7 @@ class Script(scripts.Script):

p.width_original_md = p.width
p.height_original_md = p.height
p.current_scale_num = 1
p.gaussian_filter = gaussian_filter
p.scale_factor = int(scale_factor)

is_img2img = hasattr(p, "init_images") and len(p.init_images) > 0

@ -136,16 +142,17 @@ class Script(scripts.Script):

init_img = p.init_images[0]
init_img = images.flatten(init_img, opts.img2img_background_color)
image = init_img
if keep_input_size: # true if scale factor is 1
p.scale_factor = 1
if keep_input_size:
p.width = image.width
p.height = image.height
p.width_original_md = p.width
p.height_original_md = p.height
else: #XXX:To adapt to noise inversion, we do not multiply the scale factor here
p.width = p.width_original_md
p.height = p.height_original_md
else: # txt2img
p.width = p.width*(p.scale_factor)
p.height = p.height*(p.scale_factor)
p.width = p.width_original_md
p.height = p.height_original_md

if 'png info':
info = {}

@ -198,7 +205,14 @@ class Script(scripts.Script):

p.sample = lambda conditioning, unconditional_conditioning,seeds, subseeds, subseed_strength, prompts: self.sample_hijack(
conditioning, unconditional_conditioning, seeds, subseeds, subseed_strength, prompts,p, is_img2img,
window_size, overlap, tile_batch_size,random_jitter,c1,c2,c3)
window_size, overlap, tile_batch_size,random_jitter,c1,c2,c3,strength,sigma)

processing.create_infotext_ori = processing.create_infotext

p.width_list = [p.height]
p.height_list = [p.height]

processing.create_infotext = create_infotext_hijack
## end

@ -207,6 +221,20 @@ class Script(scripts.Script):

if self.delegate is not None: self.delegate.reset_controlnet_tensors()

def postprocess_batch_list(self, p, pp, *args, **kwargs):
for idx,image in enumerate(pp.images):
idx_b = idx//p.batch_size
pp.images[idx] = image[:,:image.shape[1]//(p.scale_factor)*(idx_b+1),:image.shape[2]//(p.scale_factor)*(idx_b+1)]
p.seeds = [item for _ in range(p.scale_factor) for item in p.seeds]
p.prompts = [item for _ in range(p.scale_factor) for item in p.prompts]
p.all_negative_prompts = [item for _ in range(p.scale_factor) for item in p.all_negative_prompts]
p.negative_prompts = [item for _ in range(p.scale_factor) for item in p.negative_prompts]
if p.color_corrections != None:
p.color_corrections = [item for _ in range(p.scale_factor) for item in p.color_corrections]
p.width_list = [item*(idx+1) for idx in range(p.scale_factor) for item in [p.width for _ in range(p.batch_size)]]
p.height_list = [item*(idx+1) for idx in range(p.scale_factor) for item in [p.height for _ in range(p.batch_size)]]
return

def postprocess(self, p: Processing, processed, enabled, *args):
if not enabled: return
# unhijack & unhook
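
`postprocess_batch_list` above appears to receive one decoded image per stage and batch entry, all sharing the final padded size, and crops each back to its own stage's size. A minimal sketch of the crop arithmetic with integers in place of tensors (`crop_dims` is a hypothetical name). It assumes, as the hunk does, that outputs are ordered stage by stage with `batch_size` images per stage, and that images are channels-first, so `shape[1]` is height and `shape[2]` is width.

```python
def crop_dims(full_height: int, full_width: int, scale_factor: int,
              idx: int, batch_size: int) -> tuple[int, int]:
    """Height and width kept for output image `idx`.

    Every decoded image has the final padded size; stage k (1-based)
    occupies the top-left k/scale_factor of it.
    """
    stage = idx // batch_size + 1  # idx_b + 1 in the hunk
    return (full_height // scale_factor * stage,
            full_width // scale_factor * stage)
```

Slicing `image[:, :h, :w]` with these values reproduces the hunk's crop.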


@ -226,32 +254,28 @@ class Script(scripts.Script):

''' ↓↓↓ inner API hijack ↓↓↓ '''
@torch.no_grad()
def sample_hijack(self, conditioning, unconditional_conditioning,seeds, subseeds, subseed_strength, prompts,p,image_ori,window_size, overlap, tile_batch_size,random_jitter,c1,c2,c3):

if self.delegate==None:
p.denoising_strength=1
# p.sampler = Script.create_sampler_original_md(p.sampler_name, p.sd_model)
p.sampler = sd_samplers.create_sampler(p.sampler_name, p.sd_model) #NOTE:Wrong but very useful. If corrected, please replace with the content from the previous line
# 3. Encode input prompts
shared.state.sampling_step = 0
noise = p.rng.next()

if hasattr(p,'initial_noise_multiplier'):
if p.initial_noise_multiplier != 1.0:
p.extra_generation_params["Noise multiplier"] = p.initial_noise_multiplier
noise *= p.initial_noise_multiplier

def sample_hijack(self, conditioning, unconditional_conditioning,seeds, subseeds, subseed_strength, prompts,p,image_ori,window_size, overlap, tile_batch_size,random_jitter,c1,c2,c3,strength,sigma):
################################################## Phase Initialization ######################################################

if not image_ori:
latents = p.rng.next() #Same with line 233. Replaced with the following lines
# latents = p.sampler.sample(p, x, conditioning, unconditional_conditioning, image_conditioning=p.txt2img_image_conditioning(x))
# del x
# p.denoising_strength=1
# p.sampler = sd_samplers.create_sampler(p.sampler_name, p.sd_model)
p.current_step = 0
p.denoising_strength = strength
# p.sampler = sd_samplers.create_sampler(p.sampler_name, p.sd_model) #NOTE:Wrong but very useful. If corrected, please replace with the content with the following lines
# latents = p.rng.next()

p.sampler = Script.create_sampler_original_md(p.sampler_name, p.sd_model) #scale
x = p.rng.next()
print("### Phase 1 Denoising ###")
latents = p.sampler.sample(p, x, conditioning, unconditional_conditioning, image_conditioning=p.txt2img_image_conditioning(x))
latents_ = F.pad(latents, (0, latents.shape[3]*(p.scale_factor-1), 0, latents.shape[2]*(p.scale_factor-1)))
res = latents_
del x
p.sampler = sd_samplers.create_sampler(p.sampler_name, p.sd_model)
starting_scale = 2
else: # img2img
print("### Encoding Real Image ###")
latents = p.init_latent
starting_scale = 1


anchor_mean = latents.mean()
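
The hunk above records `anchor_mean` from the first-stage latents. Later in `sample_hijack`, each stage's output is renormalized with `(p.latents - p.latents.mean()) / p.latents.std() * anchor_std + anchor_mean` (where `anchor_std` is defined outside the lines shown), and `forward_one_step` applies the same trick to its Gaussian-filtered latents. A pure-Python sketch of that standardize-then-rescale step on a flat list; `match_stats` is a hypothetical name, and `statistics.stdev` is the sample standard deviation, matching the default Bessel correction of `torch.Tensor.std`.

```python
import statistics


def match_stats(values: list[float], target_mean: float, target_std: float) -> list[float]:
    """Shift and scale `values` so their mean and std equal the targets.

    Same formula as the latent renormalization in sample_hijack:
    (x - mean(x)) / std(x) * target_std + target_mean
    """
    mean = statistics.fmean(values)
    std = statistics.stdev(values)  # sample std (n - 1), like torch.std
    return [(v - mean) / std * target_std + target_mean for v in values]
```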


@ -260,10 +284,10 @@ class Script(scripts.Script):

devices.torch_gc()

####################################################### Phase Upscaling #####################################################
starting_scale = 1
p.cosine_scale_1 = c1 # 3
p.cosine_scale_2 = c2 # 1
p.cosine_scale_3 = c3 # 1
p.cosine_scale_1 = c1
p.cosine_scale_2 = c2
p.cosine_scale_3 = c3
self.delegate.sig = sigma
p.latents = latents
for current_scale_num in range(starting_scale, p.scale_factor+1):
p.current_scale_num = current_scale_num
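
Inside this loop, each stage's latent is zero-padded on the right and bottom to the final stage's size before being stacked into `res` (see the `F.pad` calls in `sample_hijack`), which appears to be what lets `postprocess_batch_list` crop every image from a common canvas. The arithmetic, sketched with integers; `pad_to_final` and `stages` are hypothetical names. `F.pad` reads its 4-tuple as `(left, right, top, bottom)` for the last two dimensions, so the code's `(0, W_pad, 0, H_pad)` pads width then height.

```python
def pad_to_final(height: int, width: int, stage: int, scale_factor: int) -> tuple[int, int]:
    """Return (right_pad, bottom_pad) for a stage-`stage` latent.

    Mirrors F.pad(p.latents, (0, W // k * (s - k), 0, H // k * (s - k))),
    where H, W are the stage-k latent's height and width and s is the
    final scale factor, so the padded latent is s/k times larger.
    """
    base_h, base_w = height // stage, width // stage  # 1x latent size
    extra = scale_factor - stage
    return base_w * extra, base_h * extra


def stages(starting_scale: int, scale_factor: int) -> list[int]:
    """Scale numbers visited by range(starting_scale, p.scale_factor+1)."""
    return list(range(starting_scale, scale_factor + 1))
```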


@ -283,7 +307,7 @@ class Script(scripts.Script):

info = ', '.join([
# f"{method.value} hooked into {name!r} sampler",
f"Tile size: {window_size}",
f"Tile size: {self.delegate.window_size}",
f"Tile count: {self.delegate.num_tiles}",
f"Batch size: {self.delegate.tile_bs}",
f"Tile batches: {len(self.delegate.batched_bboxes)}",

@ -301,7 +325,7 @@ class Script(scripts.Script):

p.noise = noise
p.x = p.latents.clone()
p.current_step=-1
p.current_step=0

p.latents = p.sampler.sample_img2img(p,p.latents, noise , conditioning, unconditional_conditioning, image_conditioning=p.image_conditioning)
if self.flag_noise_inverse:

@ -309,10 +333,26 @@ class Script(scripts.Script):

self.flag_noise_inverse = False

p.latents = (p.latents - p.latents.mean()) / p.latents.std() * anchor_std + anchor_mean
latents_ = F.pad(p.latents, (0, p.latents.shape[3]//current_scale_num*(p.scale_factor-current_scale_num), 0, p.latents.shape[2]//current_scale_num*(p.scale_factor-current_scale_num)))
if current_scale_num==1:
res = latents_
else:
res = torch.concatenate((res,latents_),axis=0)

#########################################################################################################################################
p.width = p.width*p.scale_factor
p.height = p.height*p.scale_factor
return p.latents

return res

@staticmethod
def callback_hijack(self_sampler,d,p):
p.current_step = d['i']

if self_sampler.stop_at is not None and p.current_step > self_sampler.stop_at:
raise InterruptedException

state.sampling_step = p.current_step
shared.total_tqdm.update()
p.current_step += 1


def create_sampler_hijack(

@ -329,6 +369,8 @@ class Script(scripts.Script):

return self.delegate.sampler_raw
else:
self.reset()
sd_samplers_common.Sampler.callback_ori = sd_samplers_common.Sampler.callback_state
sd_samplers_common.Sampler.callback_state = lambda self_sampler,d:Script.callback_hijack(self_sampler,d,p)

self.flag_noise_inverse = hasattr(p, "init_images") and len(p.init_images) > 0 and noise_inverse
flag_noise_inverse = self.flag_noise_inverse

@ -343,7 +385,7 @@ class Script(scripts.Script):

else: raise NotImplementedError(f"Method {method} not implemented.")

delegate = delegate_cls(p, sampler)
delegate.window_size = window_size
delegate.window_size = min(min(window_size,p.width//8),p.height//8)
p.random_jitter = random_jitter

if flag_noise_inverse:

@ -365,7 +407,7 @@ class Script(scripts.Script):

info = ', '.join([
f"{method.value} hooked into {name!r} sampler",
f"Tile size: {window_size}",
f"Tile size: {delegate.window_size}",
f"Tile count: {delegate.num_tiles}",
f"Batch size: {delegate.tile_bs}",
f"Tile batches: {len(delegate.batched_bboxes)}",

@ -424,8 +466,6 @@ class Script(scripts.Script):

org_random_tensors = torch.where(background_noise_count > 0, background_noise, org_random_tensors)
org_random_tensors = torch.where(foreground_noise_count > 0, foreground_noise, org_random_tensors)
return org_random_tensors
# changed p.sd_model.sd_model_hash to p.sd_model_hash
''' ↓↓↓ helper methods ↓↓↓ '''

''' ↓↓↓ helper methods ↓↓↓ '''

@ -485,6 +525,12 @@ class Script(scripts.Script):

if hasattr(Script, "create_random_tensors_original_md"):
processing.create_random_tensors = Script.create_random_tensors_original_md
del Script.create_random_tensors_original_md
if hasattr(sd_samplers_common.Sampler, "callback_ori"):
sd_samplers_common.Sampler.callback_state = sd_samplers_common.Sampler.callback_ori
del sd_samplers_common.Sampler.callback_ori
if hasattr(processing, "create_infotext_ori"):
processing.create_infotext = processing.create_infotext_ori
del processing.create_infotext_ori
DemoFusion.unhook()
self.delegate = None
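
The hunk above undoes hijacks installed elsewhere in this diff: each patched attribute was first saved under an `*_ori` or `*_original_md` name, and unhooking restores it and deletes the backup. A minimal, self-contained sketch of that save-patch-restore pattern as a context manager, using a hypothetical `Sampler` class rather than the webui's `sd_samplers_common.Sampler`. Putting the restore in `finally` makes it run even if sampling raises; the script instead re-runs its unhook step at the start of each run (see the comment `# unhijack & unhook, in case it broke at last time`).

```python
from contextlib import contextmanager


@contextmanager
def hijack(owner, name: str, replacement, backup_suffix: str = "_ori"):
    """Temporarily replace owner.<name>, keeping the original as <name><suffix>.

    Mirrors the pattern in this diff: save the original under a backup name,
    install the replacement, and on exit restore it and delete the backup.
    """
    backup = name + backup_suffix
    setattr(owner, backup, getattr(owner, name))
    setattr(owner, name, replacement)
    try:
        yield
    finally:
        setattr(owner, name, getattr(owner, backup))
        delattr(owner, backup)


class Sampler:  # hypothetical stand-in for the webui sampler class
    def callback_state(self, d):
        return f"original step {d['i']}"
```

Inside `with hijack(Sampler, "callback_state", new_fn):` every `Sampler` instance calls `new_fn`; after the block the original method is back and the `callback_state_ori` backup is gone.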


@ -104,7 +104,7 @@ class AbstractDiffusion:

def init_done(self):
'''
Call this after all `init_*`, settings are done, now perform:
- settings sanity check
- settings sanity check
- pre-computations, cache init
- anything thing needed before denoising starts
'''

@ -214,7 +214,7 @@ class AbstractDiffusion:

h = min(self.h - y, h)
self.custom_bboxes.append(CustomBBox(x, y, w, h, p, n, blend_mode, feather_ratio, seed))

if len(self.custom_bboxes) == 0:
if len(self.custom_bboxes) == 0:
self.enable_custom_bbox = False
return

@ -17,25 +17,17 @@ class DemoFusion(AbstractDiffusion):

super().__init__(p, *args, **kwargs)
assert p.sampler_name != 'UniPC', 'Demofusion is not compatible with UniPC!'

def add_one(self):
self.p.current_step += 1
return


def hook(self):
steps, self.t_enc = sd_samplers_common.setup_img2img_steps(self.p, None)
# print("ENC",self.t_enc)

self.sampler.model_wrap_cfg.forward_ori = self.sampler.model_wrap_cfg.forward
self.sampler.model_wrap_cfg.forward = self.forward_one_step
self.sampler_forward = self.sampler.model_wrap_cfg.inner_model.forward
self.sampler.model_wrap_cfg.forward = self.forward_one_step
if self.is_kdiff:
self.sampler: KDiffusionSampler
self.sampler.model_wrap_cfg: CFGDenoiserKDiffusion
self.sampler.model_wrap_cfg.inner_model: Union[CompVisDenoiser, CompVisVDenoiser]
sigmas = self.sampler.get_sigmas(self.p, steps)
# print("SIGMAS:",sigmas)
self.p.sigmas = sigmas[steps - self.t_enc - 1:]
else:
self.sampler: CompVisSampler
self.sampler.model_wrap_cfg: CFGDenoiserTimesteps

@ -108,17 +100,14 @@ class DemoFusion(AbstractDiffusion):

cols=1
dx = (w_l - tile_w) / (cols - 1) if cols > 1 else 0
dy = (h_l - tile_h) / (rows - 1) if rows > 1 else 0
if self.p.random_jitter:
self.jitter_range = max((min(self.w, self.h)-self.stride)//4,0)
else:
self.jitter_range=0
bbox_list: List[BBox] = []
self.jitter_range = 0
for row in range(rows):
for col in range(cols):
h = min(int(row * dy), h_l - tile_h)
w = min(int(col * dx), w_l - tile_w)
if self.p.random_jitter:
self.jitter_range = min(max((min(self.w, self.h)-self.stride)//4,0),int(self.stride/2))
self.jitter_range = min(max((min(self.w, self.h)-self.stride)//4,0),min(int(self.window_size/2),int(self.overlap/2)))
jitter_range = self.jitter_range
w_jitter = 0
h_jitter = 0

@ -149,12 +138,11 @@ class DemoFusion(AbstractDiffusion):

self.overlap = max(0, min(overlap, self.window_size - 4))

self.stride = max(1,self.window_size - self.overlap)
self.stride = max(4,self.window_size - self.overlap)

# split the latent into overlapped tiles, then batching
# weights basically indicate how many times a pixel is painted
bboxes, _ = self.split_bboxes_jitter(self.w, self.h, self.tile_w, self.tile_h, overlap, self.get_tile_weights())
print("BBOX:",len(bboxes))
bboxes, _ = self.split_bboxes_jitter(self.w, self.h, self.tile_w, self.tile_h, self.overlap, self.get_tile_weights())
self.num_tiles = len(bboxes)
self.num_batches = math.ceil(self.num_tiles / tile_bs)
self.tile_bs = math.ceil(len(bboxes) / self.num_batches) # optimal_batch_size
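
Taken together, the added lines in this diff clamp the window to the latent size (`delegate.window_size = min(min(window_size,p.width//8),p.height//8)`), keep the overlap at least 4 latent pixels below the window, keep the stride at 4 or more, cap the jitter at half the window and half the overlap, and rebalance the tile batch size so batches are evenly filled. A pure-Python sketch of that arithmetic; `tile_geometry` and `balanced_batches` are hypothetical names, and the sketch assumes the same latent width/height (pixel size // 8) feeds both the window clamp and the `self.w`/`self.h` jitter formula.

```python
import math


def tile_geometry(latent_w: int, latent_h: int, window_size: int, overlap: int):
    """Return (window, overlap, stride, jitter_range) in latent pixels."""
    window = min(window_size, latent_w, latent_h)  # window never exceeds the latent
    overlap = max(0, min(overlap, window - 4))     # leave at least 4 px of stride
    stride = max(4, window - overlap)
    jitter = min(max((min(latent_w, latent_h) - stride) // 4, 0),
                 min(int(window / 2), int(overlap / 2)))
    return window, overlap, stride, jitter


def balanced_batches(num_tiles: int, requested_bs: int) -> tuple[int, int]:
    """Return (num_batches, tile_bs) so tiles spread evenly over batches."""
    num_batches = math.ceil(num_tiles / requested_bs)
    return num_batches, math.ceil(num_tiles / num_batches)
```

For example, 10 tiles with a requested batch size of 4 still need 3 batches, but the rebalanced batch size stays 4, while 9 tiles with a requested size of 8 become 2 batches of 5 instead of 8 + 1.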


@ -181,82 +169,47 @@ class DemoFusion(AbstractDiffusion):

blurred_latents = F.conv2d(latents, kernel, padding=kernel_size//2, groups=channels)

return blurred_latents

''' ↓↓↓ kernel hijacks ↓↓↓ '''
@torch.no_grad()
@keep_signature
def forward_one_step(self, x_in, sigma, **kwarg):
self.add_one()
if self.is_kdiff:
self.xi = self.p.x + self.p.noise * self.p.sigmas[self.p.current_step]
x_noisy = self.p.x + self.p.noise * sigma[0]
else:
alphas_cumprod = self.p.sd_model.alphas_cumprod
sqrt_alpha_cumprod = torch.sqrt(alphas_cumprod[self.timesteps[self.t_enc-self.p.current_step]])
sqrt_one_minus_alpha_cumprod = torch.sqrt(1 - alphas_cumprod[self.timesteps[self.t_enc-self.p.current_step]])
self.xi = self.p.x*sqrt_alpha_cumprod + self.p.noise * sqrt_one_minus_alpha_cumprod
x_noisy = self.p.x*sqrt_alpha_cumprod + self.p.noise * sqrt_one_minus_alpha_cumprod

self.cosine_factor = 0.5 * (1 + torch.cos(torch.pi *torch.tensor(((self.p.current_step + 1) / (self.t_enc+1)))))
c2 = self.cosine_factor**self.p.cosine_scale_2

self.c1 = self.cosine_factor ** self.p.cosine_scale_1
c1 = self.cosine_factor ** self.p.cosine_scale_1

self.x_in_tmp = x_in*(1 - self.c1) + self.xi * self.c1
x_in = x_in*(1 - c1) + x_noisy * c1

if self.p.random_jitter:
jitter_range = self.jitter_range
else:
jitter_range = 0
self.x_in_tmp_ = F.pad(self.x_in_tmp,(jitter_range, jitter_range, jitter_range, jitter_range),'constant',value=0)
_,_,H,W = self.x_in_tmp.shape
x_in_ = F.pad(x_in,(jitter_range, jitter_range, jitter_range, jitter_range),'constant',value=0)
_,_,H,W = x_in.shape

std_, mean_ = self.x_in_tmp.std(), self.x_in_tmp.mean()
c3 = 0.99 * self.cosine_factor ** self.p.cosine_scale_3 + 1e-2
latents_gaussian = self.gaussian_filter(self.x_in_tmp, kernel_size=(2*self.p.current_scale_num-1), sigma=0.8*c3)
self.latents_gaussian = (latents_gaussian - latents_gaussian.mean()) / latents_gaussian.std() * std_ + mean_
self.jitter_range = jitter_range
self.sampler.model_wrap_cfg.inner_model.forward = self.sample_one_step_local
self.sampler.model_wrap_cfg.inner_model.forward = self.sample_one_step
self.repeat_3 = False
x_local = self.sampler.model_wrap_cfg.forward_ori(self.x_in_tmp_,sigma, **kwarg)

x_out = self.sampler.model_wrap_cfg.forward_ori(x_in_,sigma, **kwarg)
self.sampler.model_wrap_cfg.inner_model.forward = self.sampler_forward
x_local = x_local[:,:,jitter_range:jitter_range+H,jitter_range:jitter_range+W]
x_out = x_out[:,:,jitter_range:jitter_range+H,jitter_range:jitter_range+W]

############################################# Dilated Sampling #############################################
if not hasattr(self.p.sd_model, 'apply_model_ori'):
self.p.sd_model.apply_model_ori = self.p.sd_model.apply_model
self.p.sd_model.apply_model = self.apply_model_hijack
x_global = torch.zeros_like(x_local)

for batch_id, bboxes in enumerate(self.global_batched_bboxes):
for bbox in bboxes:
w,h = bbox

######

x_global_i = self.sampler.model_wrap_cfg.forward_ori(self.x_in_tmp[:,:,h::self.p.current_scale_num,w::self.p.current_scale_num],sigma, **kwarg) # x_in_tmp could be changed to latents_gaussian
x_global[:,:,h::self.p.current_scale_num,w::self.p.current_scale_num] += x_global_i

######

#NOTE: Predicting Noise on Gaussian Latent and Obtaining Denoised on Original Latent

# self.x_out_list = []
# self.x_out_idx = -1
# self.flag = 1
# self.sampler.model_wrap_cfg.forward_ori(self.latents_gaussian[:,:,h::self.p.current_scale_num,w::self.p.current_scale_num],sigma,**kwarg)
# self.flag = 0
# x_global_i = self.sampler.model_wrap_cfg.forward_ori(self.x_in_tmp[:,:,h::self.p.current_scale_num,w::self.p.current_scale_num],sigma,**kwarg)
# x_global[:,:,h::self.p.current_scale_num,w::self.p.current_scale_num] += x_global_i

self.p.sd_model.apply_model = self.p.sd_model.apply_model_ori

x_out= x_local*(1-c2)+ x_global*c2
return x_out

@torch.no_grad()
@keep_signature
def sample_one_step_local(self, x_in, sigma, cond):
def sample_one_step(self, x_in, sigma, cond):
assert LatentDiffusion.apply_model
def repeat_func_1(x_tile:Tensor, bboxes:List[CustomBBox]) -> Tensor:
sigma_tile = self.repeat_tensor(sigma, len(bboxes))
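`forward_one_step` derives three weights from a single cosine factor, `0.5 * (1 + cos(pi * (current_step + 1) / (t_enc + 1)))`. `c1` blends the noised reference latent into `x_in` (DemoFusion's skip residual), `c2` blends the local (tiled) and global (dilated) predictions, and `c3 = 0.99 * factor**cosine_scale_3 + 1e-2` multiplies the Gaussian blur sigma (`0.8*c3` or `self.sig*c3` in the diff) so that the sigma never reaches zero. The pure-Python sketch below reproduces only that scalar arithmetic on floats. The function names and the example scale values are illustrative assumptions, not settings taken from the extension.

```python
import math


def cosine_factor(current_step: int, t_enc: int) -> float:
    """Decays from about 1 at the first step to 0 at step t_enc."""
    return 0.5 * (1 + math.cos(math.pi * (current_step + 1) / (t_enc + 1)))


def demofusion_weights(current_step, t_enc, scale_1, scale_2, scale_3):
    """Return (c1, c2, c3) following the formulas in forward_one_step."""
    factor = cosine_factor(current_step, t_enc)
    c1 = factor ** scale_1                 # weight of the noised reference latent (skip residual)
    c2 = factor ** scale_2                 # weight of the global (dilated) prediction
    c3 = 0.99 * factor ** scale_3 + 1e-2   # Gaussian sigma multiplier, never below 0.01
    return c1, c2, c3


def blend(a: float, b: float, weight: float) -> float:
    """Same form as x_in*(1 - c1) + x_noisy*c1 and x_local*(1-c2) + x_global*c2."""
    return a * (1 - weight) + b * weight


if __name__ == "__main__":
    t_enc = 10
    for step in (0, 5, t_enc):
        c1, c2, c3 = demofusion_weights(step, t_enc, 3.0, 1.0, 1.0)
        print(step, round(c1, 4), round(c2, 4), round(c3, 4))
```

Raising the factor to a larger power makes the corresponding guidance fade out earlier in the schedule, which is what the separate `cosine_scale_*` parameters control.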

@ -287,11 +240,10 @@ class DemoFusion(AbstractDiffusion):

repeat_func = repeat_func_2
N,_,_,_ = x_in.shape

H = self.h
W = self.w

self.x_buffer = torch.zeros_like(x_in)
self.weights = torch.zeros_like(x_in)

for batch_id, bboxes in enumerate(self.batched_bboxes):
if state.interrupted: return x_in
x_tile = torch.cat([x_in[bbox.slicer] for bbox in bboxes], dim=0)
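The tiled (local) path allocates `self.x_buffer` and `self.weights` with `torch.zeros_like(x_in)`, adds each tile's output into the buffer, increments the weight of every covered pixel (`self.weights[bbox.slicer] += 1`), and finally divides the buffer by the weights, with the diff's `torch.where(self.weights == 0, ...)` guard against division by zero. A 1-D pure-Python sketch of that averaging follows. It assumes each tile is described by a start offset and a length, and `denoise_tile` is a hypothetical stand-in for the model call.

```python
from typing import Callable, List, Sequence, Tuple

Tile = Tuple[int, int]  # (start, length): hypothetical 1-D stand-in for a bbox


def average_tiles(x: Sequence[float], tiles: Sequence[Tile],
                  denoise_tile: Callable[[List[float]], List[float]]) -> List[float]:
    buffer = [0.0] * len(x)  # like self.x_buffer
    weights = [0] * len(x)   # like self.weights: how many tiles painted each pixel
    for start, length in tiles:
        out = denoise_tile(list(x[start:start + length]))
        for i, value in enumerate(out):
            buffer[start + i] += value
            weights[start + i] += 1
    # Pixels that no tile touched keep weight 0; treat it as 1 so they stay 0 instead of NaN.
    return [b / (w if w else 1) for b, w in zip(buffer, weights)]


if __name__ == "__main__":
    # Each tile "predicts" its first value everywhere, so the overlap shows the averaging.
    print(average_tiles([0.0, 10.0, 20.0, 30.0], [(0, 3), (1, 3)], lambda t: [t[0]] * len(t)))
    # [0.0, 5.0, 5.0, 10.0]
```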

@ -302,9 +254,44 @@ class DemoFusion(AbstractDiffusion):

self.weights[bbox.slicer] += 1
self.weights = torch.where(self.weights == 0, torch.tensor(1), self.weights) #Prevent NaN from appearing in random_jitter mode

x_buffer = self.x_buffer/self.weights
x_local = self.x_buffer/self.weights

return x_buffer
self.x_buffer = torch.zeros_like(self.x_buffer)
self.weights = torch.zeros_like(self.weights)

std_, mean_ = x_in.std(), x_in.mean()
c3 = 0.99 * self.cosine_factor ** self.p.cosine_scale_3 + 1e-2
if self.p.gaussian_filter:
x_in_g = self.gaussian_filter(x_in, kernel_size=(2*self.p.current_scale_num-1), sigma=self.sig*c3)
x_in_g = (x_in_g - x_in_g.mean()) / x_in_g.std() * std_ + mean_

if not hasattr(self.p.sd_model, 'apply_model_ori'):
self.p.sd_model.apply_model_ori = self.p.sd_model.apply_model
self.p.sd_model.apply_model = self.apply_model_hijack
x_global = torch.zeros_like(x_local)
jitter_range = self.jitter_range
end = x_global.shape[3]-jitter_range

for batch_id, bboxes in enumerate(self.global_batched_bboxes):
for bbox in bboxes:
w,h = bbox
# self.x_out_list = []
# self.x_out_idx = -1
# self.flag = 1
x_global_i0 = self.sampler_forward(x_in_g[:,:,h+jitter_range:end:self.p.current_scale_num,w+jitter_range:end:self.p.current_scale_num],sigma,cond = cond)
# self.flag = 0
x_global_i1 = self.sampler_forward(x_in[:,:,h+jitter_range:end:self.p.current_scale_num,w+jitter_range:end:self.p.current_scale_num],sigma,cond = cond) #NOTE According to the original execution process, it would be very strange to use the predicted noise of gaussian latents to predict the denoised data in non Gaussian latents. Why?
self.x_buffer[:,:,h+jitter_range:end:self.p.current_scale_num,w+jitter_range:end:self.p.current_scale_num] += (x_global_i0 + x_global_i1)/2
self.weights[:,:,h+jitter_range:end:self.p.current_scale_num,w+jitter_range:end:self.p.current_scale_num] += 1

self.p.sd_model.apply_model = self.p.sd_model.apply_model_ori
self.weights = torch.where(self.weights == 0, torch.tensor(1), self.weights) #Prevent NaN from appearing in random_jitter mode

x_global = self.x_buffer/self.weights
c2 = self.cosine_factor**self.p.cosine_scale_2
self.x_buffer= x_local*(1-c2)+ x_global*c2

return self.x_buffer
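The global path performs dilated sampling. For every offset `(h, w)` in `global_batched_bboxes`, it takes the strided sub-latent `x[:, :, h::s, w::s]` (with `s = current_scale_num`), runs the model on it, scatters the result back into the same strided positions, accumulates weights, and guards zero weights before dividing. The pure-Python sketch below does the same on a 2-D list. It assumes the offsets cover all `s * s` residues; the optional `margin` argument only imitates the `h + jitter_range : end : s` cropping, and `dilated_apply` and `fn` are hypothetical names.

```python
from typing import Callable, List

Grid = List[List[float]]


def dilated_apply(x: Grid, scale: int, fn: Callable[[Grid], Grid], margin: int = 0) -> Grid:
    """Run `fn` on each of the scale*scale strided sub-grids of `x` and reassemble."""
    H, W = len(x), len(x[0])
    out = [[0.0] * W for _ in range(H)]
    weights = [[0] * W for _ in range(H)]
    for h in range(scale):
        for w in range(scale):
            rows = range(h + margin, H - margin, scale)
            cols = range(w + margin, W - margin, scale)
            # Equivalent to x[h+margin:H-margin:scale, w+margin:W-margin:scale]
            sub = [[x[r][c] for c in cols] for r in rows]
            res = fn(sub)
            for i, r in enumerate(rows):
                for j, c in enumerate(cols):
                    out[r][c] += res[i][j]
                    weights[r][c] += 1
    # Same idea as torch.where(weights == 0, 1, weights): untouched cells stay 0 instead of NaN.
    return [[out[r][c] / (weights[r][c] or 1) for c in range(W)] for r in range(H)]


if __name__ == "__main__":
    x = [[float(4 * r + c) for c in range(4)] for r in range(4)]
    print(dilated_apply(x, 2, lambda g: g) == x)  # True: each cell is covered exactly once
```

Each sub-grid is a downsampled view of the whole latent, which is what gives this path its global context, while the strided scatter guarantees that every covered cell receives exactly one prediction per pass.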

@ -315,7 +302,7 @@ class DemoFusion(AbstractDiffusion):

x_tile_out = self.p.sd_model.apply_model_ori(x_in,t_in,cond)
return x_tile_out
#NOTE: Using Gaussian Latent to Predict Noise on the Original Latent
# NOTE: Using Gaussian Latent to Predict Noise on the Original Latent
# if self.flag == 1:
# x_tile_out = self.p.sd_model.apply_model_ori(x_in,t_in,cond)
# self.x_out_list.append(x_tile_out)
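Both `forward_one_step` and `sample_one_step` temporarily replace `self.p.sd_model.apply_model` with `self.apply_model_hijack`, keep the original in `apply_model_ori` (stored only once, guarded by `hasattr`), and restore it after the global pass; the hijack itself then calls `apply_model_ori`. A minimal sketch of the same save/patch/restore pattern on a toy class follows. `ToyModel` and `hijacked` are hypothetical, and wrapping the restore in `try/finally` is a design choice the diff does not show: it puts the original back even if sampling raises.

```python
from contextlib import contextmanager
from typing import Callable, Iterator


class ToyModel:
    """Hypothetical stand-in for sd_model."""

    def apply_model(self, x: float) -> float:
        return x + 1


@contextmanager
def hijacked(model: ToyModel, hijack: Callable[[float], float]) -> Iterator[ToyModel]:
    # Save the original only once, so a repeated hijack never stores a hijack as the "original".
    if not hasattr(model, "apply_model_ori"):
        model.apply_model_ori = model.apply_model
    model.apply_model = hijack
    try:
        yield model
    finally:
        model.apply_model = model.apply_model_ori  # restore, as the diff does after the global pass


model = ToyModel()
with hijacked(model, lambda x: model.apply_model_ori(x) * 10):
    print(model.apply_model(1))  # 20
print(model.apply_model(1))      # 2
```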

@ -36,7 +36,7 @@ class MultiDiffusion(AbstractDiffusion):

def reset_buffer(self, x_in:Tensor):
super().reset_buffer(x_in)

@custom_bbox
def init_custom_bbox(self, *args):
super().init_custom_bbox(*args)

@ -229,7 +229,7 @@ class MultiDiffusion(AbstractDiffusion):

cond_out = self.repeat_cond_dict(cond_in_original, bboxes)
x_tile_out = shared.sd_model.apply_model(x_tile, sigma_in_tile, cond=cond_out)
return x_tile_out

def custom_func(x:Tensor, bbox_id:int, bbox:CustomBBox):
# The negative prompt in custom bbox should not be used for noise inversion
# otherwise the result will be astonishingly bad.

@ -23,7 +23,7 @@ class ComparableEnum(Enum):

def __eq__(self, other: Any) -> bool:
if isinstance(other, str): return self.value == other
elif isinstance(other, ComparableEnum): return self.value == other.value
else: raise TypeError(f'unsupported type: {type(other)}')
else: raise TypeError(f'unsupported type: {type(other)}')

class Method(ComparableEnum):

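`ComparableEnum.__eq__` lets a member compare equal to its plain string value (for example, a value coming from a UI dropdown) and raises `TypeError` for any other type. A self-contained sketch of the same idea follows. The member names are hypothetical, and this version deliberately differs from the diff in two ways: it returns `NotImplemented` for unsupported types (the usual Python protocol, so `==` falls back to `False`), and it defines `__hash__` explicitly, because Python sets `__hash__` to `None` on a class that overrides `__eq__` without defining `__hash__`.

```python
from enum import Enum
from typing import Any


class ComparableEnum(Enum):
    def __eq__(self, other: Any) -> bool:
        if isinstance(other, str):
            return self.value == other
        if isinstance(other, ComparableEnum):
            return self.value == other.value
        return NotImplemented  # the diff raises TypeError here instead

    def __hash__(self) -> int:
        # Keep hashing consistent with equality against plain strings.
        return hash(self.value)


class Method(ComparableEnum):
    # Hypothetical members for illustration.
    MULTI_DIFF = "MultiDiffusion"
    DEMO_FU = "DemoFusion"


print(Method.MULTI_DIFF == "MultiDiffusion")  # True
print("DemoFusion" == Method.DEMO_FU)         # True
print(Method.MULTI_DIFF == Method.DEMO_FU)    # False
```

The reversed comparison `"DemoFusion" == Method.DEMO_FU` works because `str.__eq__` returns `NotImplemented` for a non-string operand, so Python retries with the enum member's `__eq__`.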